Introducing DigitalOcean AI-Native Cloud for Production AI Workloads

CEO, DigitalOcean

- Updated:

- 4 min read

The AI industry has a compounding bottleneck, and it isn’t the models. It’s inference.

What used to be a single model call has become a system of continuous interaction. Applications now orchestrate multiple models, retrieve and synthesize data, execute tools, and repeat this cycle in production. These are no longer stateless requests. They are dynamic systems that behave more like infrastructure than software features.

Four shifts are redefining what infrastructure has to do:

- Inference has overtaken training as the center of gravity

- Reasoning models are becoming the default

- Autonomous agents are running at scale

- Open-source models are reaching quality parity at a fraction of the cost

Most stacks were never designed for this. Hyperscalers expose hundreds of services that still need to be stitched together. Inference providers sit on top of someone else’s compute, adding another layer of margin. GPU vendors give you silicon, but not a system.

Inference has quietly become the most expensive and least owned layer of the modern stack. Every new capability is layered onto a fragmented foundation, and complexity compounds underneath it.

Eventually, you stop having a model problem. You have a stack problem.

Today at Deploy 2026, we revealed DigitalOcean’s AI-Native Cloud, a full-stack system for production AI workloads.

Our AI-Native Cloud builds on DigitalOcean’s core cloud across compute, storage, networking, and managed services, and extends it with capabilities designed for how AI systems actually run in production.

The goal is simple: reduce the stack so builders can focus on building, not stitching systems together.

Open source is not an add-on here, it’s the foundation. We removed unnecessary abstraction layers, eliminated margin stacking across vendors, and gave developers direct access to the primitives they need to build and scale AI systems.

This isn’t theoretical. Customers like Workato run a trillion automation tasks on DigitalOcean at 67% lower cost. Character.ai handles over a billion queries per day with 2x inference throughput. Hippocratic AI powers 20M+ patient interactions with 40% lower latency. The AI-Native Cloud is already running in production.

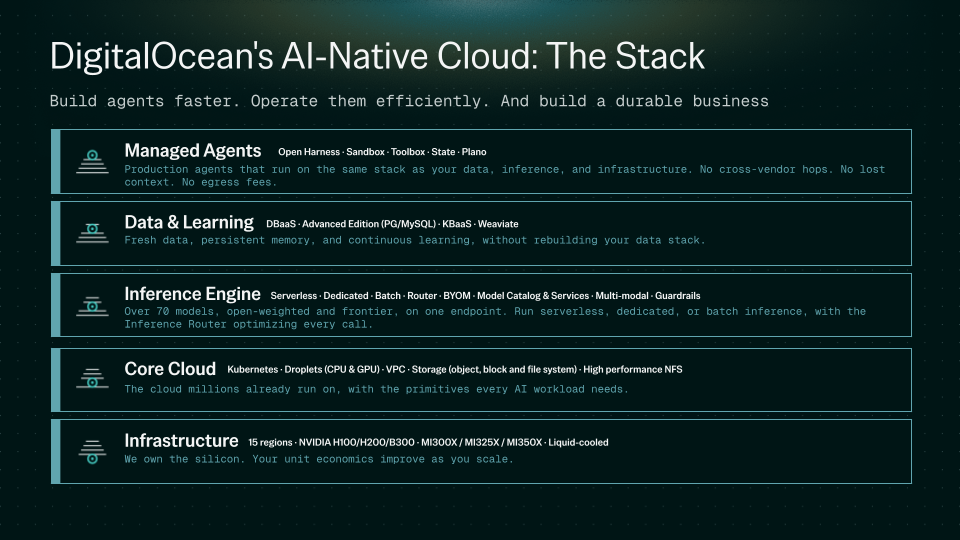

A five-layer stack for modern AI systems

AI applications are not single systems. They are composed of interacting layers that must work together continuously.

The DigitalOcean AI-Native Cloud brings these into a unified system across five layers:

What’s new in the DigitalOcean AI-Native Cloud

These are not conceptual layers, they are live systems. Today we’re expanding our offering with production capabilities across inference, data, and storage to make it work at scale.

Inference Router (Public Preview) A policy-aware control plane that routes every request based on cost, latency, quality, and residency constraints. Instead of hardcoding model logic, teams define intent once and the system optimizes execution across providers and deployment types.

Early users are already seeing impact. LawVo runs 130+ AI agents processing 500M+ tokens per week and reduced inference costs by 42% after switching to DigitalOcean with no code changes.

Dedicated Inference and Bring Your Own Model

Run custom and fine-tuned models on dedicated GPU infrastructure with full control over performance, scaling, and configuration. Deploy models from sources like Hugging Face or your own environments, and operate high-throughput workloads with a pre-tuned inference stack and managed orchestration, without the complexity of Kubernetes.

Expanded Models and Services

Run and evaluate text, image, audio, and video models through a single system. A continuously refreshed catalog includes 25+ new models and Day 0 access to select releases, including NVIDIA Nemotron 3 Nano Omni, available first on DigitalOcean. The highly efficient open multimodal model unifies vision, speech, language, and tool use, with NVIDIA TensorRT-LLM tuned at the kernel level. Built-in evaluations let teams benchmark quality, cost, and latency before production.

PostgreSQL & MySQL Advanced Edition (Public Preview)

Managed PostgreSQL and MySQL Advanced Edition deliver hyperscaler-grade reliability and scale, available alongside Standard Edition.

Managed Weaviate (Private Preview)

Production-ready vector infrastructure without the operational overhead, with native integration to Serverless Inference and predictable pricing. Sign up for early access.

Knowledge Bases

A fully managed RAG service that handles ingestion, chunking, embedding, retrieval, and reranking, with MCP support for agent frameworks. Customers are moving from prototype to production in days.

Built to simplify, without limiting flexibility

The advantage isn’t any single layer, it’s how they work together.

When agents, inference, and data run on the same system, optimization compounds automatically across performance and cost. The stack becomes self-reinforcing instead of fragmented.

At the same time, flexibility remains. Open APIs and compatibility with existing tools make it easy to adopt new models, integrate external systems, and evolve architectures as needs change.

Looking ahead

The shift from on-prem to cloud created AWS. The shift from cloud to SaaS created Salesforce. The shift from cloud-native to AI-native and agent-native applications will create the next great infrastructure company. We intend to be that company.

Five layers. One platform. Open at every layer. Start building today.

About the author

As Chief Executive Officer, Paddy Srinivasan drives the strategic direction for DigitalOcean. With over 25 years of experience in technology leadership and a proven track record of delivering customer-centric solutions, Srinivasan brings invaluable expertise to further DigitalOcean's mission of simplifying cloud computing.

Start building today

From GPU-powered inference and Kubernetes to managed databases and storage, get everything you need to build, scale, and deploy intelligent applications.Related Articles

The Agentic Era Demands a New Class of Infrastructure: DigitalOcean Acquires Katanemo Labs

Vinay Kumar, DigitalOcean Chief Product & Technology Officer

- April 2, 2026

- 3 min read

Run Advanced Reasoning on DigitalOcean with Arcee AI's Trinity Large-Thinking

- April 1, 2026

- 3 min read

Now Available: DigitalOcean Cloud Security Posture Management (CSPM)

- April 1, 2026

- 2 min read