AI/ML Technical Content Strategist

Agentic coding models are among the most obvious and impressive applications of LLM technology, and their development has gone hand in hand with massive impacts on markets and job growth. Numerous players are vying to create the best new LLM for all sorts of applications, and many would argue that no company’s products in this space have had a more significant impact than OpenAI’s.

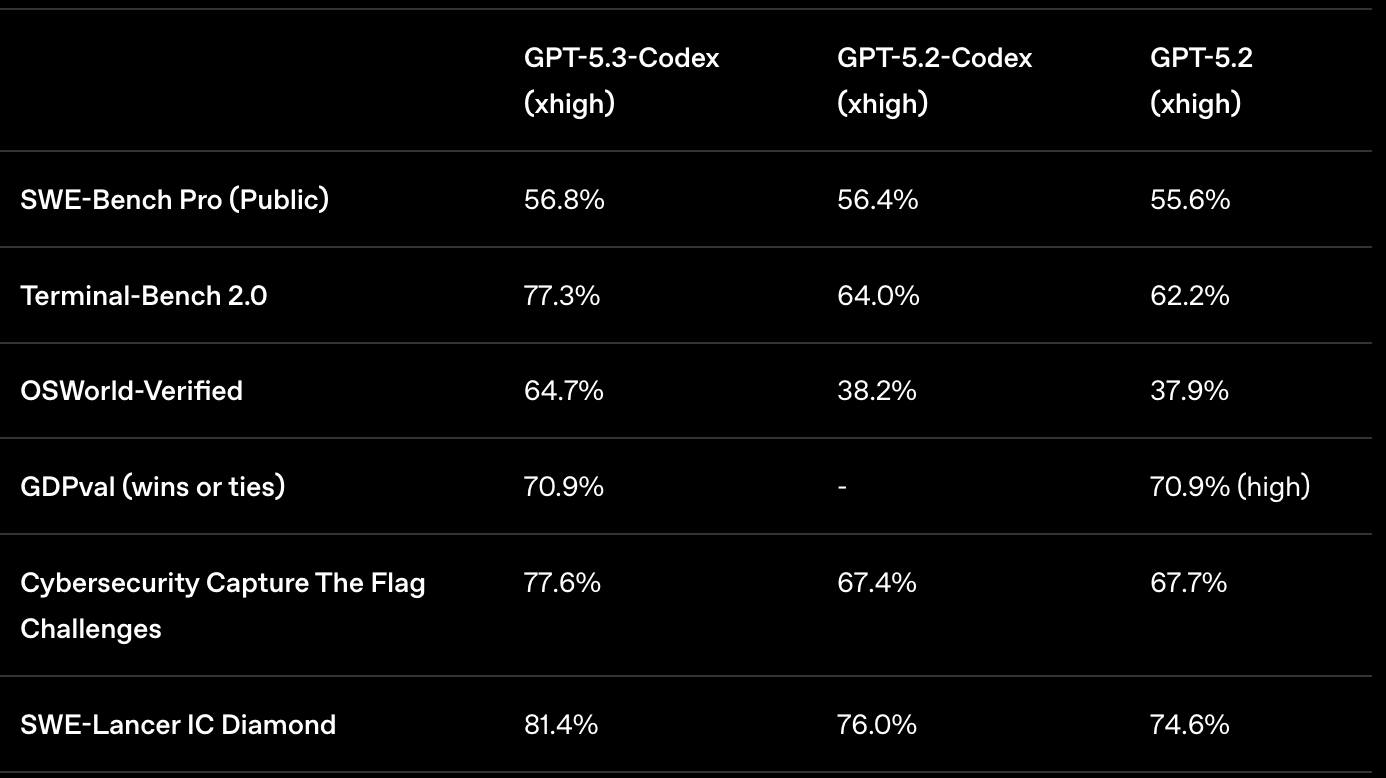

GPT‑5.3‑Codex is a truly impressive installment in this quest to create the best model. OpenAI promises that GPT-5.3-Codex is their most capable Codex model yet, advancing both coding performance and professional reasoning beyond GPT-5.2-Codex. Benchmark results show state-of-the-art performance on coding and agentic benchmarks like SWE-Bench Pro and Terminal-Bench, reflecting stronger multi-language and real-world task ability. Furthermore, the model is ~25% faster than GPT-5.2-Codex for Codex users thanks to infrastructure and inference improvements. Overall, GPT‑5.3‑Codex might be the most powerful agentic coding model ever released (Source).

So let’s see what it can do. GPT-5.3-Codex is now available on the DigitalOcean AI Platform and across OpenAI’s ChatGPT and Codex resources, so we can test the model to see how it performs. In this tutorial, we show how to use Codex to write a completely new project from scratch: a real-time Z-Image-Turbo image-to-image application built with GPT-5.3-Codex, without any user coding. Follow along to learn what GPT-5.3-Codex has to offer, how to use it yourself, and how to vibe code new web applications from scratch.

Key Takeaways

- State-of-the-Art Agentic Performance: GPT-5.3-Codex delivers impressive results across software engineering and agentic tasks, outperforming GPT-5.2-Codex in reasoning, multi-language capability, and real-world coding evaluations like SWE-Bench Pro and Terminal-Bench 2.0.

- Getting Started with GPT-5.3-Codex on the DigitalOcean AI Platform is easy: All you need is access to the DigitalOcean platform to begin integrating LLM calls into your workflows at scale.

- From Prototype to Production in Record Time: With a roughly 25% speed improvement and real-time interactive steering, GPT-5.3-Codex feels less like a static generator and more like a responsive engineering partner capable of iterating, debugging, and refining projects alongside you. By handling scaffolding, architecture decisions, edge cases, and deployment-ready details, GPT-5.3-Codex can dramatically compress development timelines, making it possible to ship fully functional applications from scratch more quickly than ever (Source).

GPT‑5.3‑Codex Overview

GPT-5.3-Codex is a major agentic coding model upgrade that combines stronger reasoning and professional knowledge with enhanced coding performance. It runs about 25% faster than GPT-5.2-Codex and excels on real-world and multi-language benchmarks like SWE-Bench Pro and Terminal-Bench. It is designed to go beyond simple code generation to support full software lifecycle tasks (e.g., debugging, deployment, documentation), and it lets you interact with and steer it in real time while it is working, making it feel more like a collaborative partner than a generator. It also has expanded capabilities for long-running work and improved responsiveness, with broader availability across IDEs, CLI, and apps for paid plans. (Source)

As we can see from the table above, GPT-5.3-Codex is a major step forward over GPT-5.2-Codex across software engineering, agentic, and computer-use benchmarks. This, paired with the marked improvement in efficiency, is a strong indicator of the model’s quality. We think it is a significant upgrade for existing GPT Codex users, as well as for new users looking for a powerful agentic coding tool to aid their process.

Getting Started with GPT-5.3-Codex

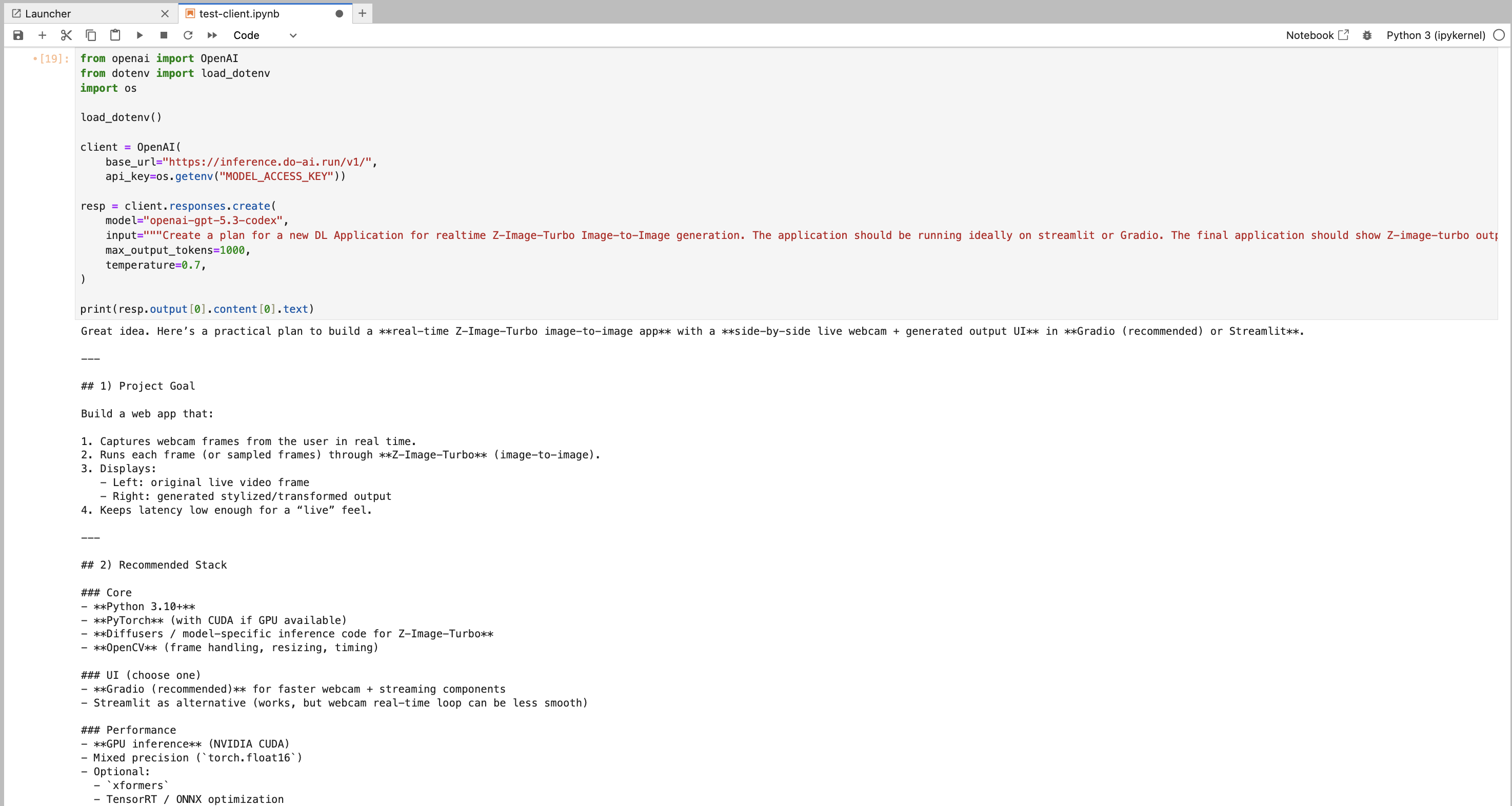

We recommend two ways for developers to get started with GPT-5.3-Codex. The first is to access the model with Serverless Inference through the DigitalOcean AI Platform. With Serverless Inference, we can integrate LLM generations into any Python pipeline. All you need to do is create a model access key and begin generating. For more information on getting started, check out the official documentation.
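To make the Serverless Inference route concrete, here is a minimal sketch of what a call from Python might look like using only the standard library. It assumes an OpenAI-style chat completions endpoint; the base URL, the model slug `openai-gpt-5.3-codex`, and the `MODEL_ACCESS_KEY` environment variable name are all assumptions for illustration, not values taken from this article, so confirm them against the official documentation before use.

```python
import json
import os
import urllib.request

# Assumptions for illustration only: verify all three in the official docs.
BASE_URL = "https://inference.do-ai.run/v1"  # assumed serverless inference base URL
MODEL_ID = "openai-gpt-5.3-codex"            # hypothetical model slug
KEY_ENV_VAR = "MODEL_ACCESS_KEY"             # hypothetical environment variable name


def build_chat_request(prompt, api_key, model=MODEL_ID, base_url=BASE_URL):
    """Build (but do not send) an OpenAI-style chat completions request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def generate(prompt, api_key):
    """Send the request and return the first choice's text."""
    request = build_chat_request(prompt, api_key)
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    access_key = os.environ.get(KEY_ENV_VAR)
    if access_key:  # only call the network when a key is configured
        print(generate("Write a Python function that reverses a string.", access_key))
```

Separating request construction from sending keeps the payload easy to inspect and swap out if the platform expects a different route or model identifier.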

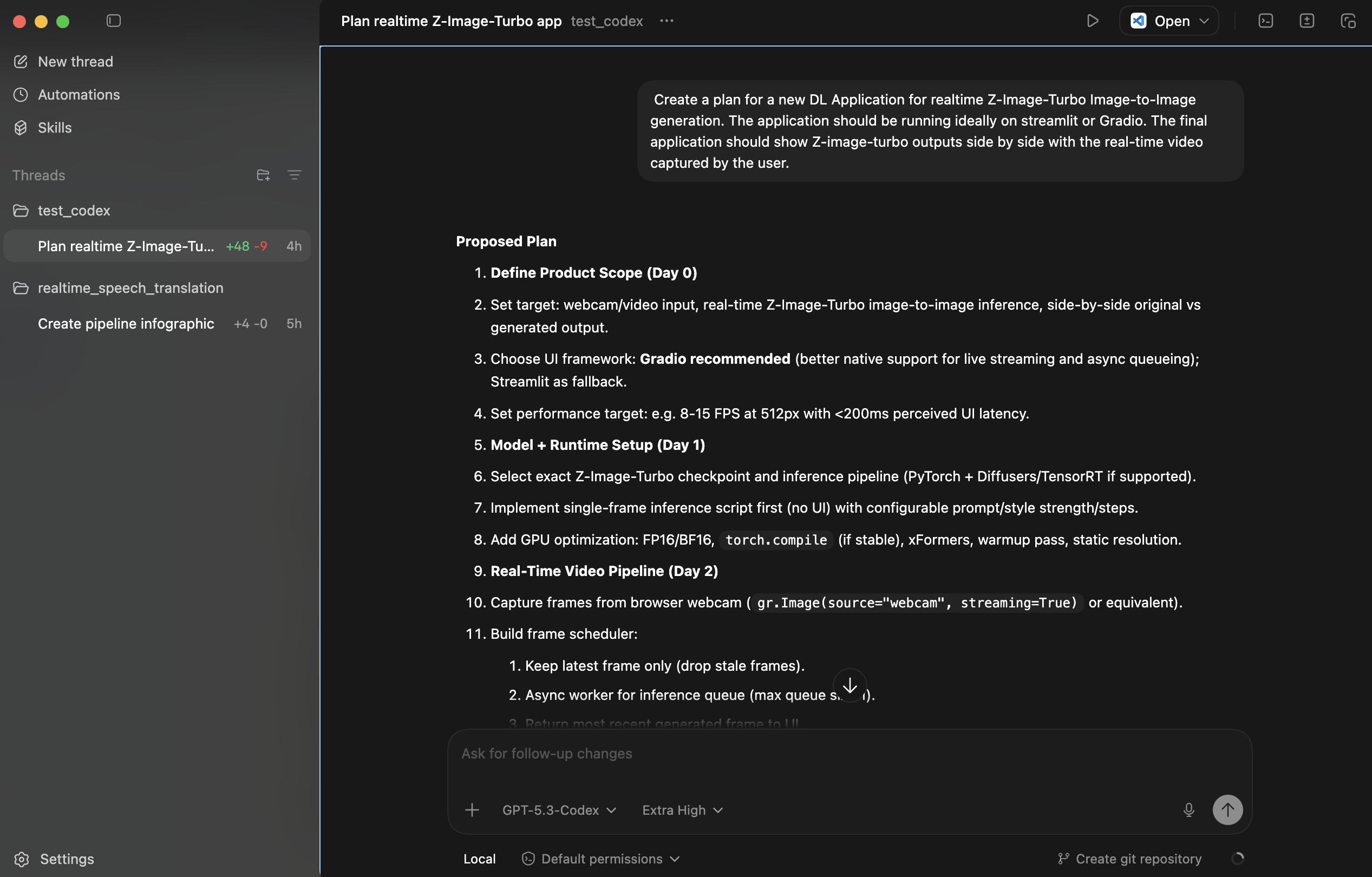

The other way to get started quickly is the official OpenAI Codex application, which runs on your local machine. Download the application, launch it, and log in to your account when prompted. From there, choose the project you wish to work in, and you’re ready to get started!

Vibe Coding a Z-Image-Turbo Web Application with GPT‑5.3‑Codex

So now that we have heard about how GPT‑5.3‑Codex performs, let’s see it in action. For this experiment, we sought to see how the model performed on a relatively novel assignment that has a basis in past applications. In this case, we asked it to create a real-time image-to-image pipeline for Z-Image-Turbo that uses webcam footage as image input.

To do this, we created a blank new directory/project space to work in. We then asked the model to create a skeleton of the project to begin, and then iteratively added in the missing features on subsequent queries. Overall, we were able to create a full working version of the application with just 5 prompts and 30 minutes of testing. This extreme speed made it possible to ship the project in less than a day, from inspiration to completion. Now let’s take a closer look at the application project itself.

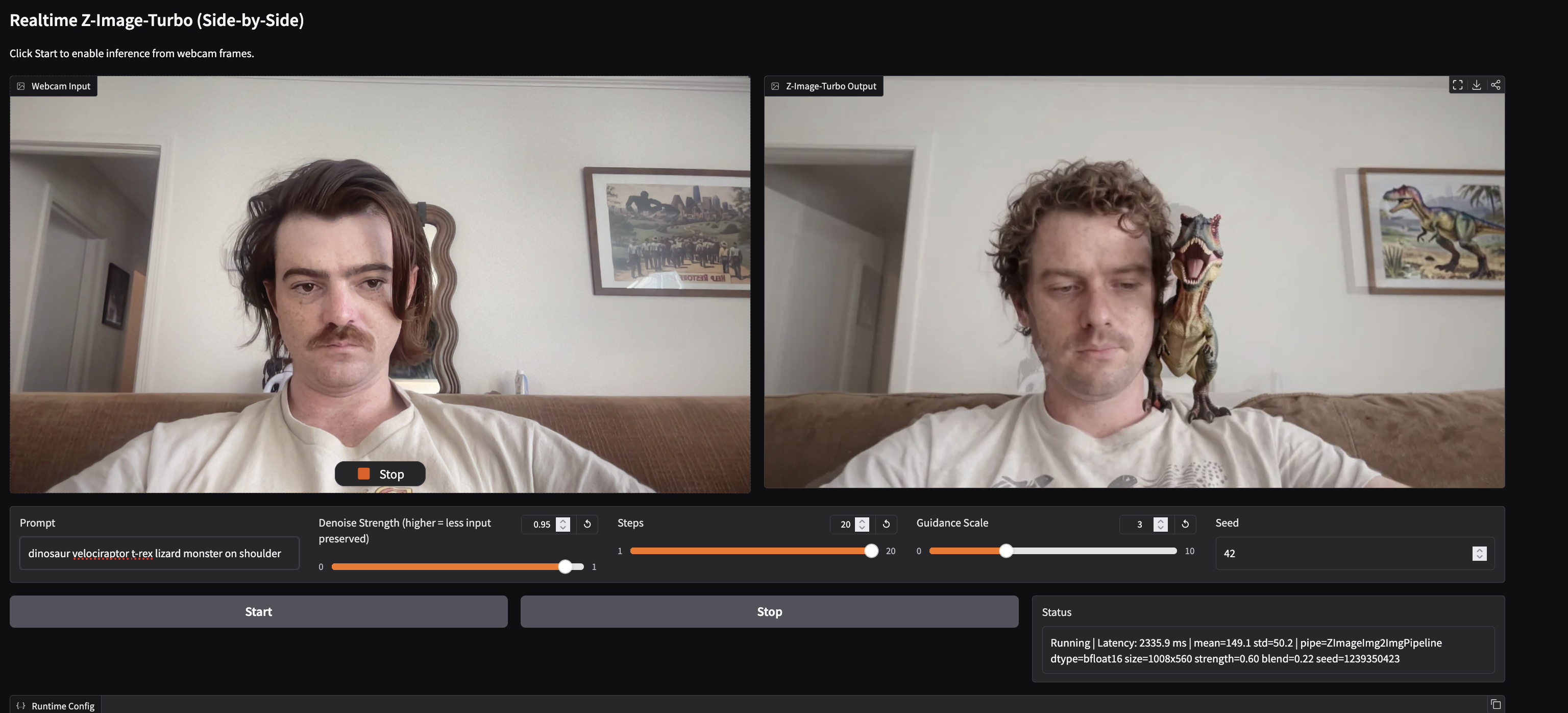

This project, which is available on GitHub, is a real-time, webcam-driven image-to-image generation application built in Python around a Gradio interface and a dedicated Z-Image-Turbo inference engine. The UI in app.py presents side-by-side live input and generated output panes, parameter controls, and explicit Start/Stop gating so inference only runs when requested. The backend in inference.py loads Tongyi-MAI/Z-Image-Turbo via ZImageImg2ImgPipeline, introspects the pipeline signature to bind the correct image-conditioning argument, enforces true img2img semantics instead of prompt-only generation, and executes inference in torch.inference_mode() with dynamic argument wiring so behavior adapts to the installed diffusers API.

Critically, it can compute a per-frame target resolution from the webcam aspect ratio, snapping dimensions to a model-friendly multiple (default 16), and it caps both sides below 1024. It then applies the post-generation safeguards that made the app stable in practice:

- Dtype strategy: auto prefers bf16, then fp32, avoiding fp16 black-frame failure modes.

- Degenerate-output detection with automatic float32 recovery.

- Robust PIL/NumPy/Tensor output decoding and normalization.

- Effective-strength clamping to preserve source structure.

- Frame-hash seed mixing so scene changes influence results.

- Configurable structure-preserving input blending.

All of this is parameterized in config.py and documented in the README.md. Runtime status reporting shows latency plus internal diagnostics (pipe, dtype, size, effective strength, blend, seed, warnings) so you can observe exactly how each frame is being processed.
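Two of the ideas described above, resolution snapping and frame-hash seed mixing, are easy to illustrate in isolation. The sketch below is not the project's actual code: the function names `target_size` and `mix_seed`, the choice to snap downward, the treatment of 1024 as an inclusive cap, and the use of SHA-256 are all assumptions made for illustration.

```python
import hashlib


def target_size(frame_w: int, frame_h: int, multiple: int = 16, max_side: int = 1024) -> tuple[int, int]:
    """Fit a frame within max_side on both sides, then snap each side down to `multiple`.

    Assumption: the cap is inclusive and snapping rounds down, so the aspect
    ratio is only approximately preserved.
    """
    if frame_w <= 0 or frame_h <= 0:
        raise ValueError("frame dimensions must be positive")
    longest = max(frame_w, frame_h)
    if longest > max_side:
        # Integer math avoids float rounding surprises right at the cap.
        frame_w = frame_w * max_side // longest
        frame_h = frame_h * max_side // longest

    def snap(value: int) -> int:
        # Round down to the nearest multiple, but never below one multiple.
        return max(multiple, value // multiple * multiple)

    return snap(frame_w), snap(frame_h)


def mix_seed(base_seed: int, frame_bytes: bytes) -> int:
    """Combine a user seed with a hash of the frame so scene changes alter the seed.

    Assumption: SHA-256 and XOR are stand-ins; any stable hash and mixing step would do.
    """
    digest = hashlib.sha256(frame_bytes).digest()
    frame_part = int.from_bytes(digest[:4], "big")
    return (base_seed ^ frame_part) & 0xFFFFFFFF  # keep the result in 32 bits


if __name__ == "__main__":
    print(target_size(1280, 720))         # a 720p webcam frame
    print(mix_seed(42, b"example-frame"))
```

With these defaults, a 1280x720 frame is scaled to fit the cap and lands on 1024x576, while a 640x480 frame passes through unchanged because both sides are already multiples of 16.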

Closing Thoughts

GPT-5.3-Codex feels less like an incremental update and more like a meaningful shift in how developers interact with code. The combination of stronger reasoning, benchmark gains, and a noticeable speed improvement makes it clear that agentic coding is maturing into something even more production-ready. What once required hours of boilerplate, debugging, and manual wiring can now be orchestrated through iterative prompts and high-level direction. As we demonstrated with the Z-Image-Turbo real-time application, a fully functional project can move from blank directory to working prototype in much less time than traditionally required. While the results and performance benefits you experience will vary with project requirements, complexity, and individual developer workflows, we are confident that GPT-5.3-Codex is a substantial upgrade and a meaningful step forward in agentic coding capability.

We recommend trying out GPT-5.3-Codex in all contexts, especially with DigitalOcean’s AI Platform!


