
By Ajeet Raina and Anish Singh Walia

Introduction

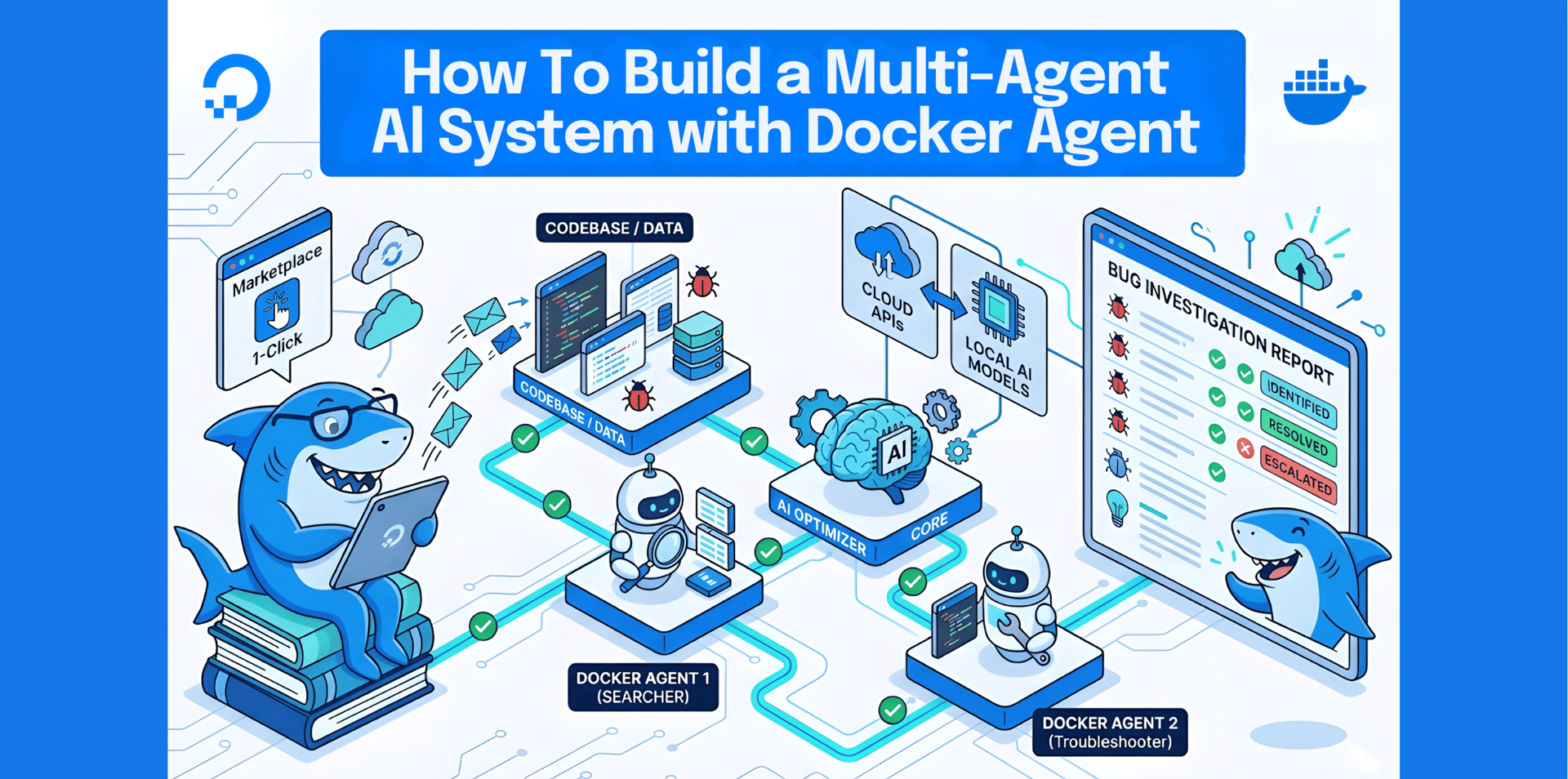

Docker Agent is an open-source framework from Docker for building and running multi-agent AI systems. It lets developers define teams of AI agents using YAML configuration, with no Python orchestration code required. Each agent can use its own model (cloud APIs like OpenAI and Anthropic, or local models through Docker Model Runner), along with specialized instructions and tools such as filesystem access, web search, and custom MCP integrations. Docker Agent also supports distributing agents as OCI artifacts through Docker Hub, so teams can share and deploy agent configurations instantly.

This tutorial walks you through spinning up a DigitalOcean Droplet with Docker Agent pre-installed using the Docker Agent 1-Click App from the DigitalOcean Marketplace. After creating your Droplet, you will build a Bug Investigator: a multi-agent system where specialized agents collaborate to analyze errors, research solutions, fix code, and generate tests. You will also learn how to configure both cloud API and local model inference. Since this 1-Click Droplet is based on a standard Ubuntu 24.04 Droplet, you can follow any DigitalOcean Docker tutorials after you finish this guide.

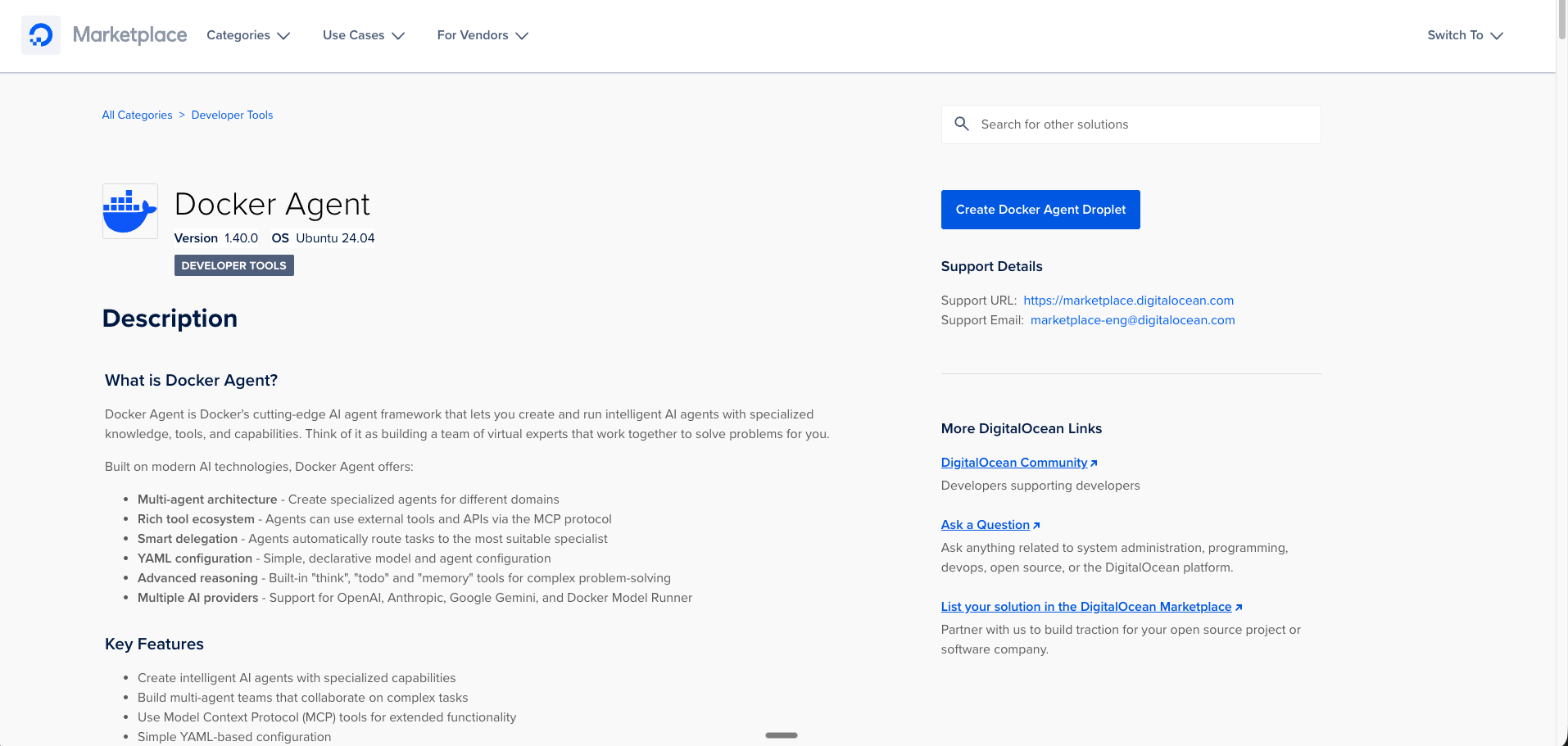

Note: The 1-Click image ships Docker Agent v1.9.10 under its previous name cagent. Starting with Docker Desktop 4.63 and Docker Agent v2.x, the CLI was renamed from cagent to docker agent. All commands in this tutorial use cagent because that is the version pre-installed on the Marketplace image. The concepts, YAML configuration, and multi-agent architecture are identical across both versions.

You can access the Docker Agent 1-Click App from the DigitalOcean Marketplace.

Key takeaways

- The Docker Agent 1-Click Droplet on DigitalOcean reduces deployment to under five minutes with Docker Agent v1.9.10 pre-installed on Ubuntu 24.04.

- Multi-agent architectures split complex tasks across focused specialists. In this tutorial, the Tester agent automatically generated unit tests without any explicit prompting.

- Docker Agent supports cloud APIs (OpenAI, Anthropic) and local models through Docker Model Runner for air-gapped or privacy-sensitive environments.

- Local inference with Qwen3 8B correctly diagnosed bugs but took roughly three minutes on CPU, compared to about five seconds with cloud APIs. For faster local inference, consider DigitalOcean GPU Droplets.

- Agents are distributable as OCI artifacts through Docker Hub, enabling instant sharing and deployment across teams.

What you will build

Understanding agentic AI workflows

Traditional AI interactions are simple request-response exchanges: you ask a question, the model answers. Agentic AI workflows are different. An AI agent can take actions autonomously: reading files, searching the web, writing code, and deciding what to do next based on results. Instead of just answering “here is how to fix that bug,” an agent can actually fix it.
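To make the idea concrete, here is a minimal Python sketch of an agent loop: pick an action, run a tool, feed the observation back, and repeat until done. The tools and the `scripted_model` function are hypothetical stand-ins (a real agent would ask an LLM to choose each step), so this illustrates the general pattern rather than how Docker Agent is implemented internally.

```python
from typing import Callable

# Hypothetical tools the agent may call; each takes a string and returns an observation.
TOOLS: dict[str, Callable[[str], str]] = {
    "read_file": lambda path: "def f(xs): return sum(xs) / len(xs)",
    "run_tests": lambda _: "FAILED: ZeroDivisionError on empty list",
}


def scripted_model(history: list[str]) -> tuple[str, str]:
    """Stand-in for an LLM: choose the next action from the observations so far."""
    if not history:
        return ("read_file", "app.py")
    if len(history) == 1:
        return ("run_tests", "")
    return ("finish", "Guard against empty input before dividing.")


def run_agent(max_steps: int = 5) -> tuple[str, list[str]]:
    history: list[str] = []
    for _ in range(max_steps):
        action, arg = scripted_model(history)
        if action == "finish":
            return arg, history  # the agent decided it is done
        observation = TOOLS[action](arg)  # act, then feed the result back in
        history.append(f"{action} -> {observation}")
    return "gave up", history


answer, steps = run_agent()
print(answer)
for step in steps:
    print(step)
```

The loop, not any single model call, is what makes this "agentic": each step's result changes what the model chooses next.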

Multi-agent systems take this further. Rather than one agent trying to do everything, specialized agents collaborate on complex tasks. Think of it like a development team: one person investigates issues, another researches solutions, another writes code, and another tests. Each brings focused expertise, and together they solve problems more effectively than a single generalist would.

A multi-agent system (MAS) consists of multiple autonomous AI agents that cooperate and coordinate through structured communication to perform tasks on behalf of a user or another system. Docker Agent handles this coordination layer through YAML-defined agent hierarchies.

The bug investigator architecture

For this tutorial, you will build a multi-agent debugging system with four specialists:

```
┌───────────────────────────────────┐
│      BUG INVESTIGATOR (Root)      │
│      Analyzes & Coordinates       │
└─────────────────┬─────────────────┘
                  │
      ┌───────────┼───────────┐
      ▼           ▼           ▼
┌───────────┐ ┌───────┐ ┌───────────┐
│ RESEARCHER│ │ FIXER │ │  TESTER   │
│ Web Search│ │ Writes│ │ Validates │
│ Find Docs │ │ Code  │ │ & Tests   │
└───────────┘ └───────┘ └───────────┘
```

- Investigator (Root Agent): Analyzes error messages, identifies root causes, and coordinates the other agents

- Researcher: Searches documentation and finds similar issues and solutions

- Fixer: Writes corrected code with proper implementation

- Tester: Generates test cases to validate fixes

The Investigator coordinates everything. It analyzes errors, delegates research, gets fixes written, and validates with tests. Each agent has its own model and toolset defined in YAML, with no orchestration code required.

Prerequisites

Before starting this tutorial, make sure you have the following:

- A DigitalOcean account with available credits or a payment method configured

- An API key from OpenAI or Anthropic (for cloud-based inference)

- An SSH client or web browser access to the DigitalOcean console. If you are new to SSH, review SSH Essentials: Working with SSH Servers, Clients, and Keys.

- Basic command-line familiarity with Git, SSH, and running shell commands

Optional for local model inference:

- Willingness to resize your Droplet to an 8GB RAM plan ($48/month)

Step 1: Create the Droplet

Navigate to the DigitalOcean Marketplace Docker Agent page and click Create Docker Agent Droplet.

Start with the $6/month tier (1GB RAM). That is plenty for cloud API agents since the actual AI inference runs on OpenAI or Anthropic servers, not your Droplet.

Figure 1: Navigate to the DigitalOcean Marketplace and select the Docker Agent 1-Click App

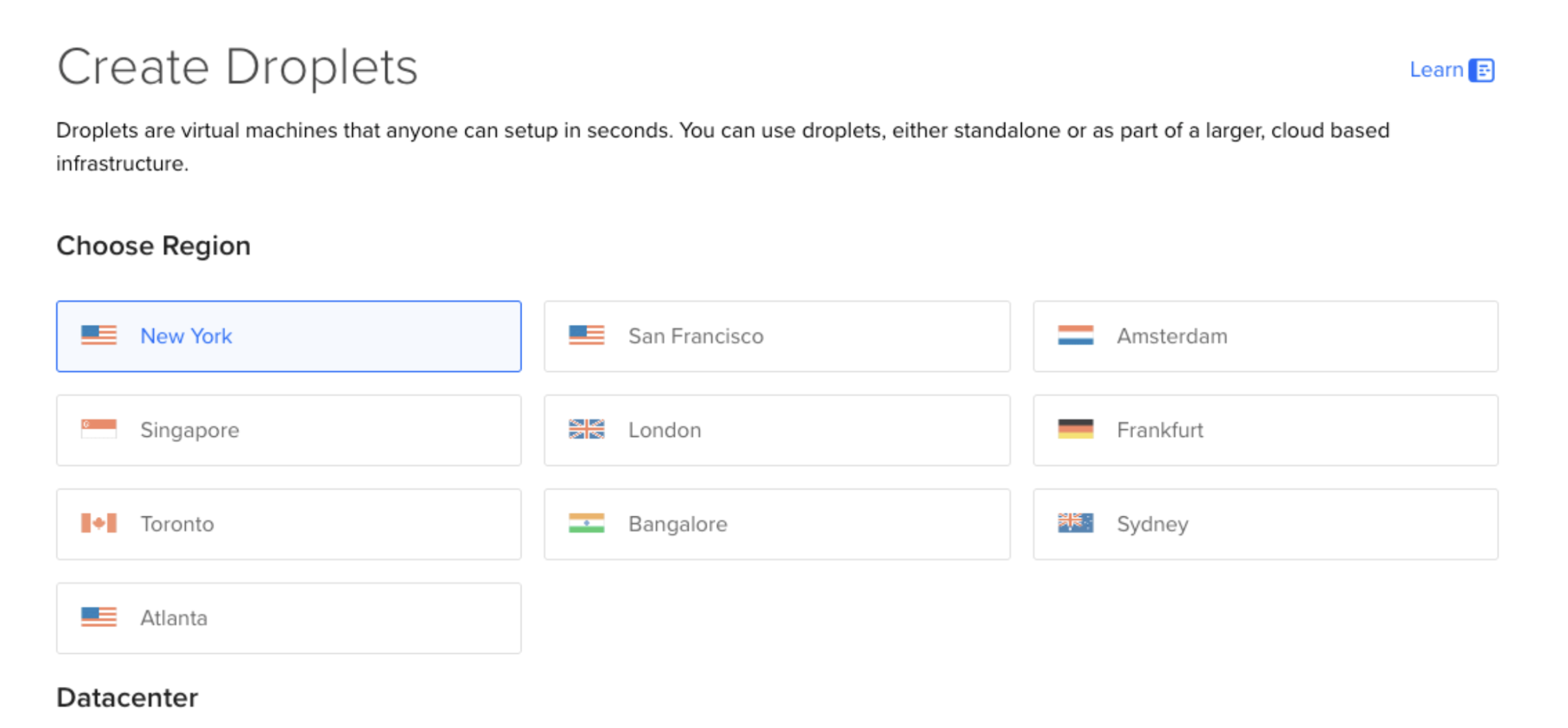

Step 2: Choose your preferred region

Select a datacenter region closest to your users or your location for reduced latency.

Figure 2: Select a datacenter region closest to your location for reduced latency

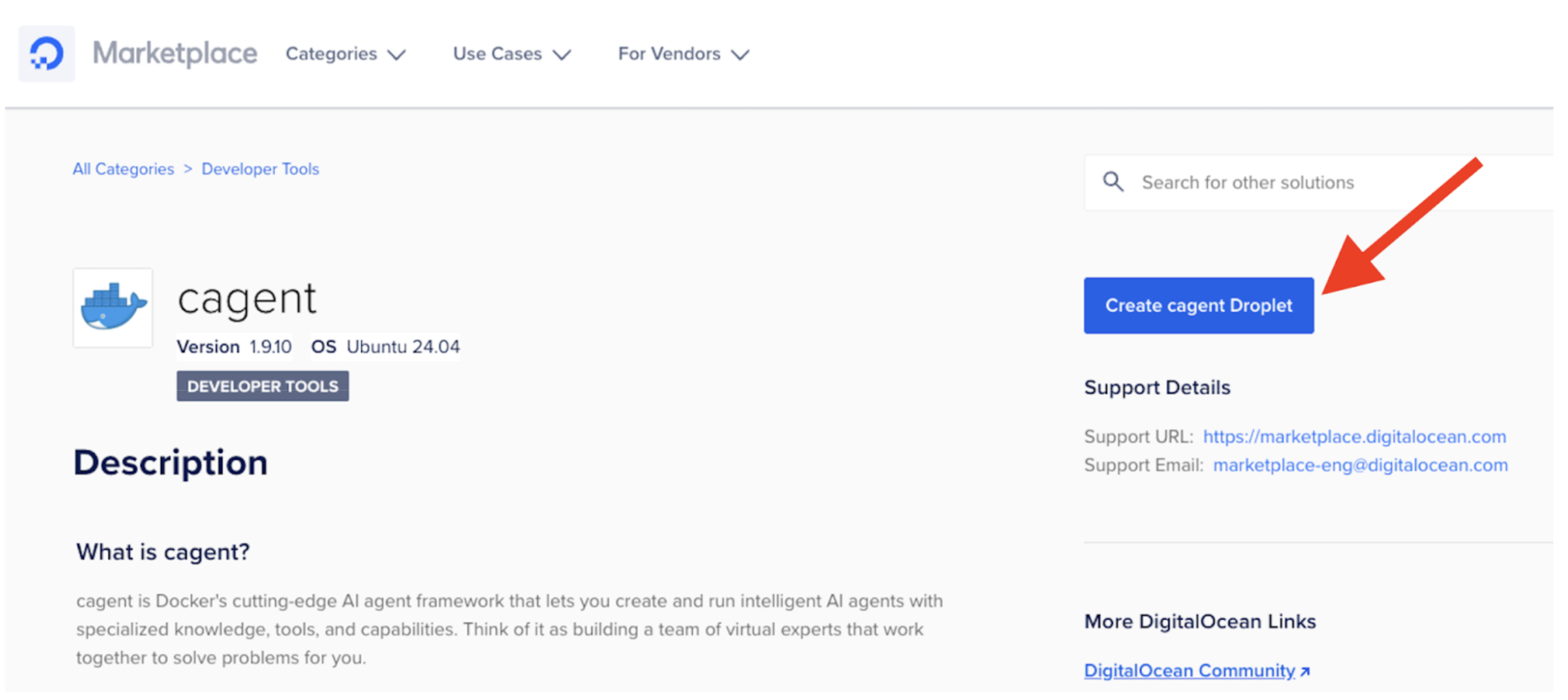

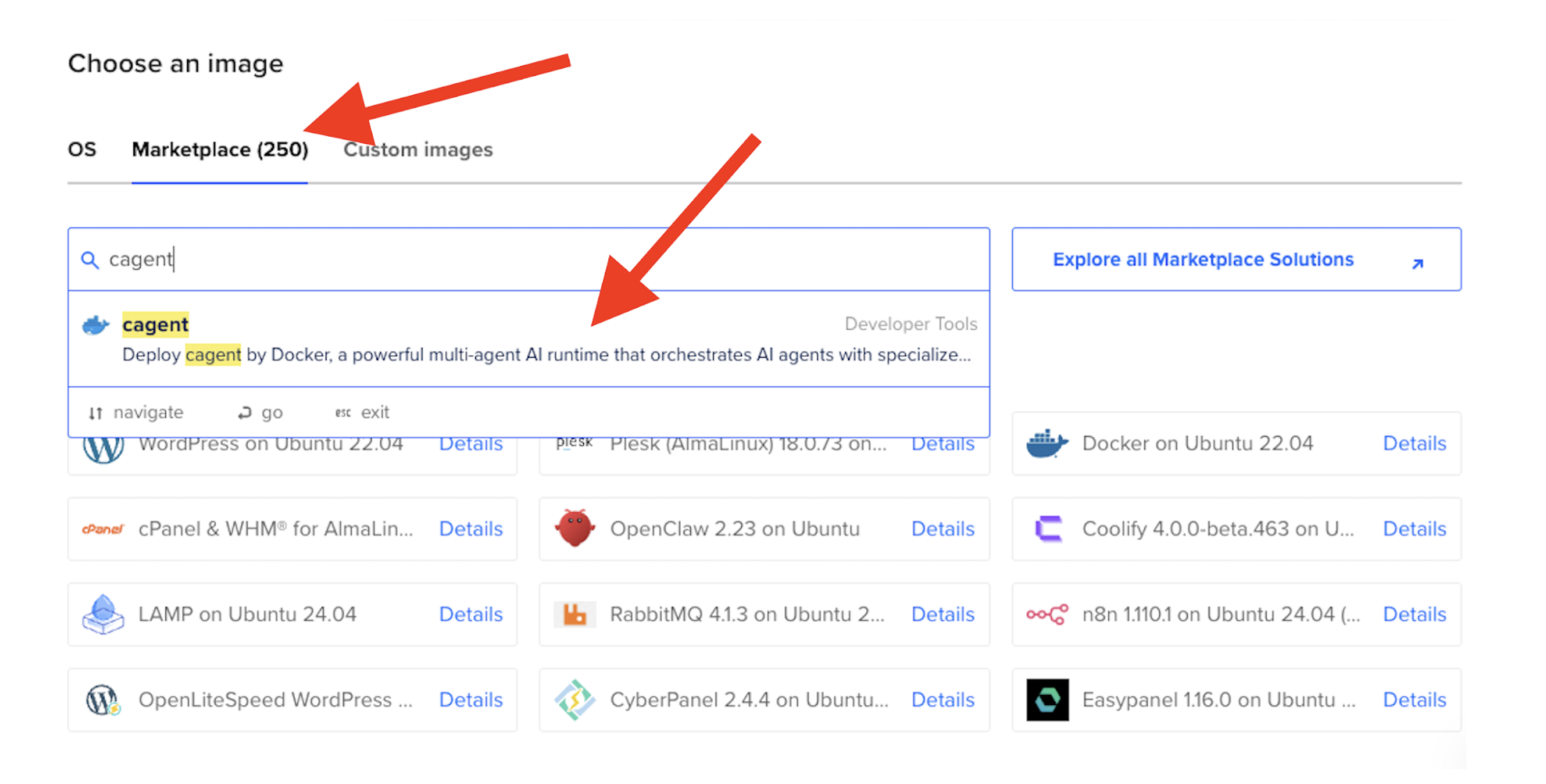

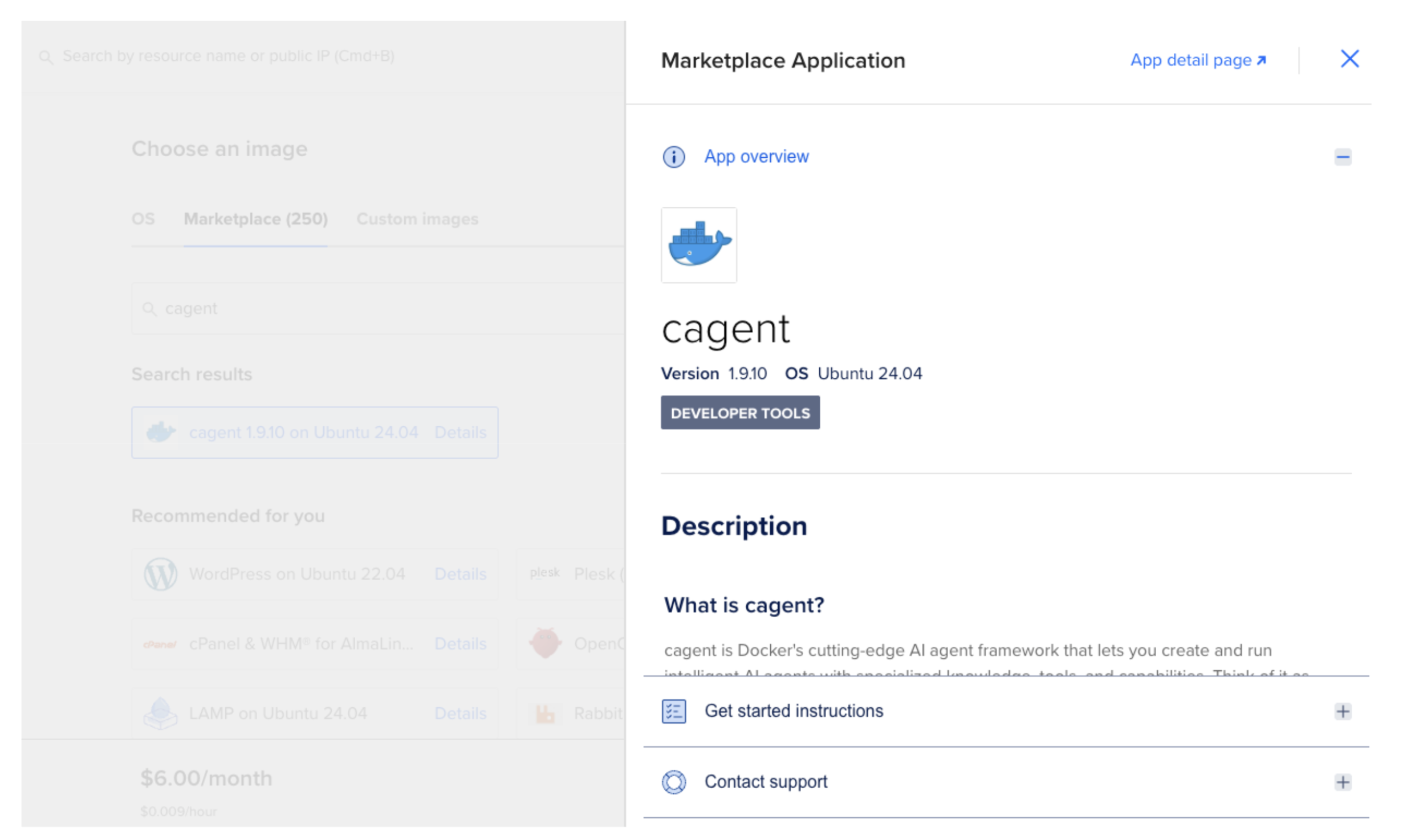

Step 3: Choose the Marketplace image

In the Choose an image section, select the Marketplace tab and search for “Docker Agent.”

Figure 3: Search for “Docker Agent” in the Marketplace tab

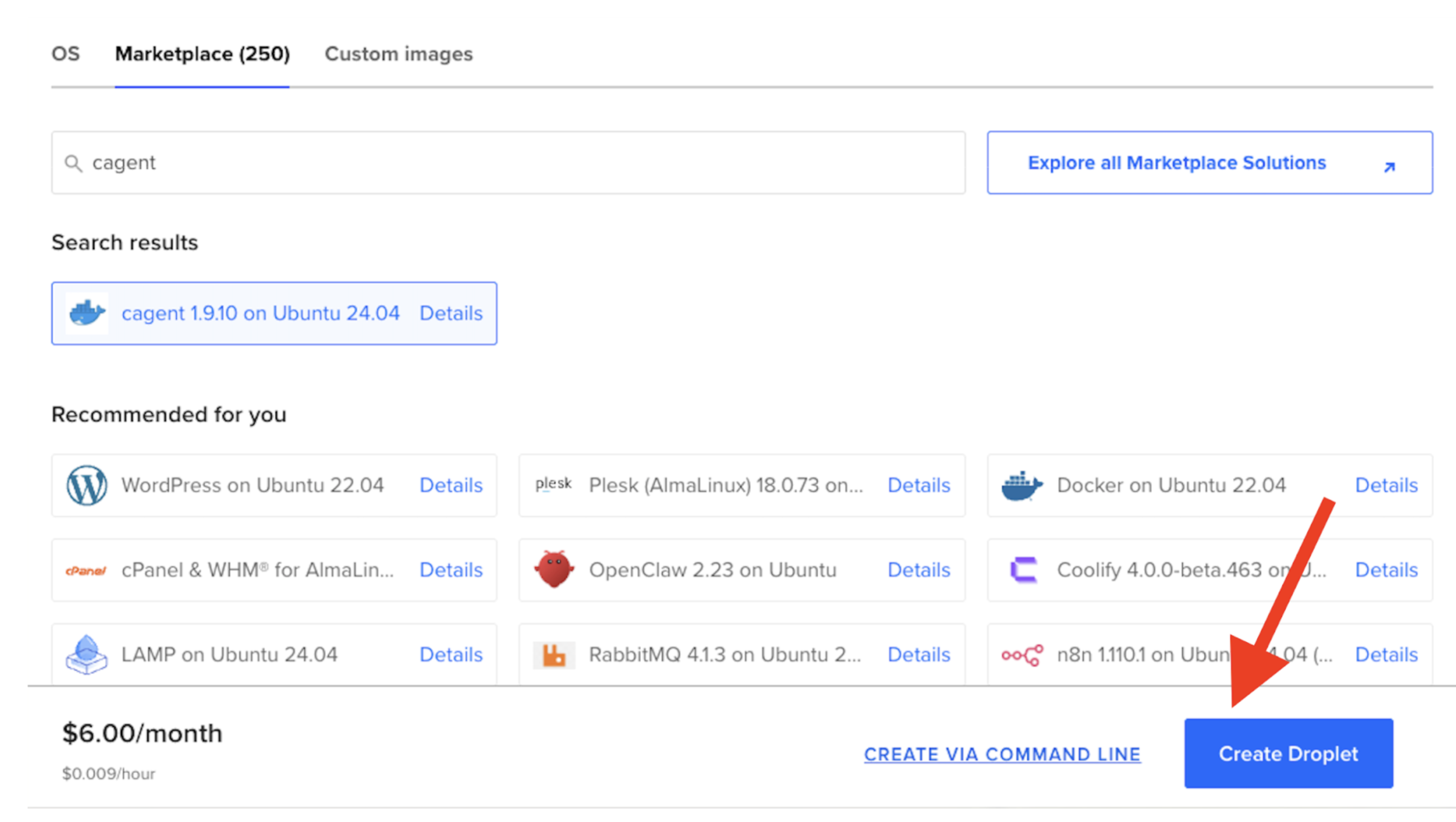

Step 4: Configure and launch

Review the Droplet size, add your SSH key, and click Create Droplet.

Figure 4: The Docker Agent 1-Click App page with the Create Droplet button

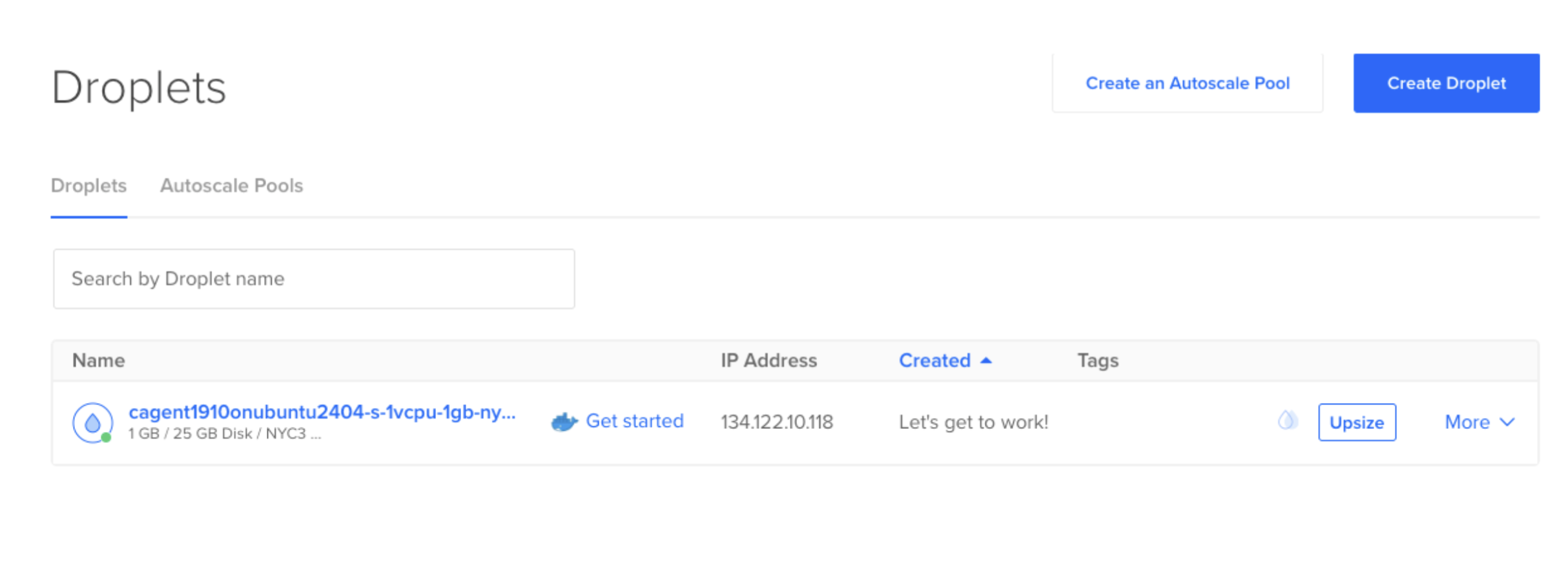

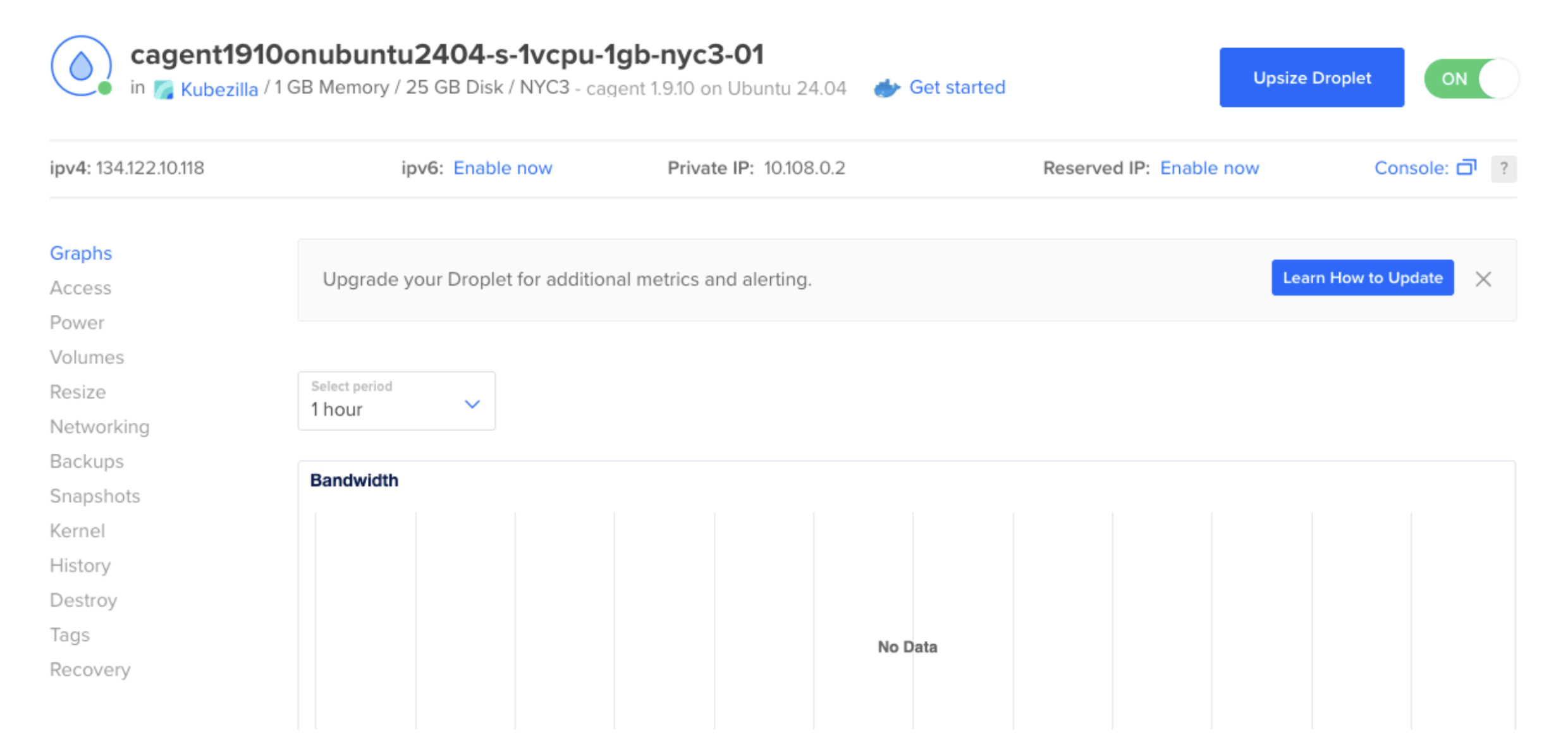

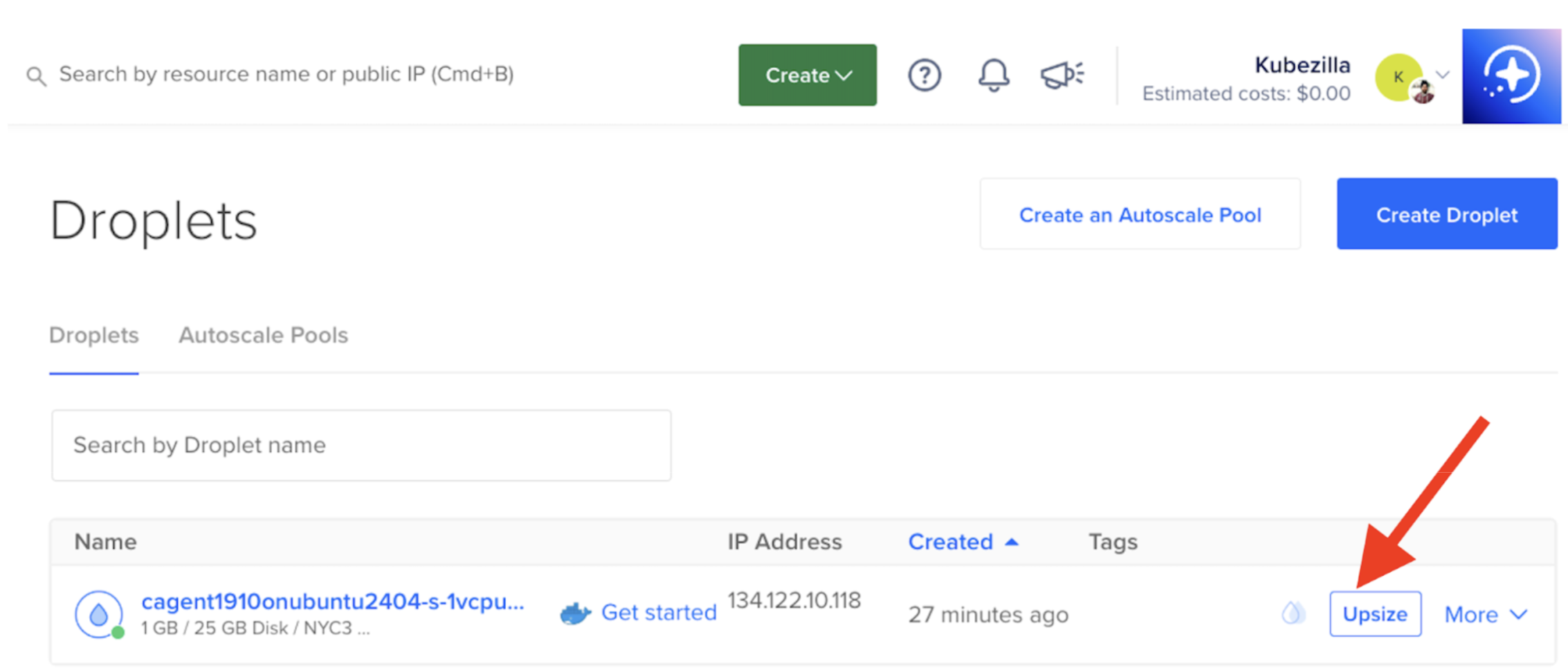

Your Docker Agent Droplet is now running.

Example output:

- IP: 134.122.10.118

- Specs: 1GB RAM / 1 vCPU / NYC3

That is the $6/month tier, which works fine for cloud API agents.

Figure 5: Your new Docker Agent Droplet with IP address and specifications

Step 5: Access and verify

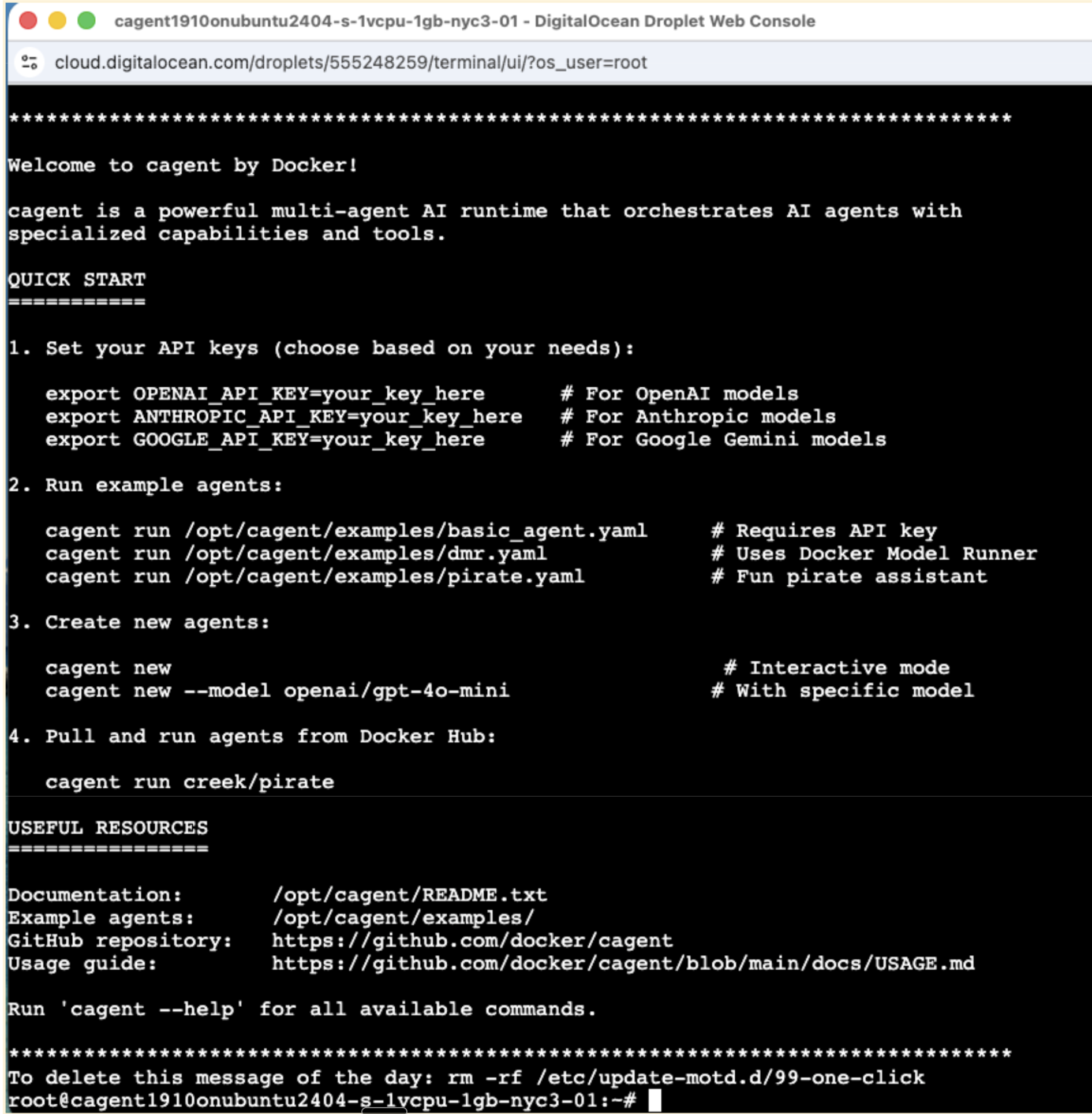

Option 1: Web Console (Easiest)

- Go to your Droplets page

- Click on your Docker Agent Droplet

- Click the Access tab on the left

- Click Launch Droplet Console

This opens a browser-based terminal with no SSH key needed.

Figure 6: Access the Droplet Console from the Access tab in your Droplet settings

Option 2: SSH Access

If you prefer SSH, you can reset the root password or use your SSH key:

- Click on your Droplet

- Go to the Access tab

- Click Reset Root Password

- Check your email for the new password

- SSH into your Droplet:

ssh root@YOUR_DROPLET_IP

Replace YOUR_DROPLET_IP with your Droplet’s actual IP address.

Step 6: Check the Docker Agent version

Verify that Docker Agent (cagent) is pre-installed:

cagent version

Expected output:

```
cagent version v1.9.10
Commit: 1782337c60dadcb39643f7c9e1a9798ea784c7aa
```

Step 7: Clone the bug investigator repository

git clone https://github.com/ajeetraina/bug-investigator-agent.git

cd bug-investigator-agent

Review the project structure:

tree

```
.
├── LICENSE
├── README.md
├── bug.txt
├── cagent-anthropic.yaml
├── cagent-local.yaml
├── cagent-openai.yaml
├── cagent.yaml
├── examples
│   └── bug-scenarios.md
├── scripts
│   └── deploy.sh
└── test-code
    ├── Dockerfile
    ├── app.py
    ├── deployment.yaml
    ├── index.js
    └── main.go

4 directories, 14 files
```

The repository includes YAML configurations for three model providers: OpenAI, Anthropic, and local (Docker Model Runner). Each configuration defines the same multi-agent architecture, but targets a different inference backend.

Step 8: Configure and run with cloud APIs

Set the OpenAI API key:

export OPENAI_API_KEY=sk-proj-XXXXXXXXXXX

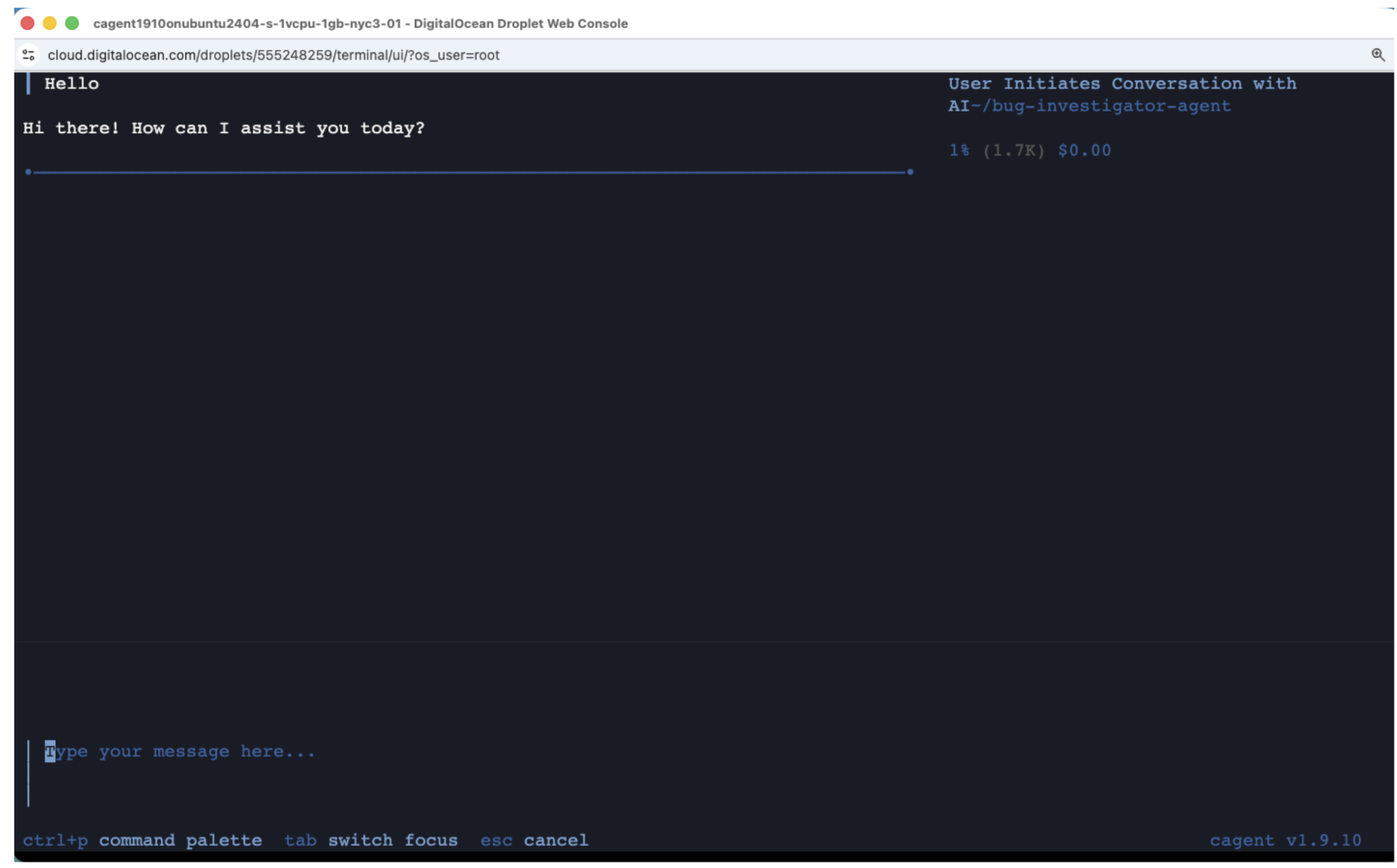

The repository includes cagent-openai.yaml configured for GPT-4o and GPT-4o-mini. Launch the agent:

cagent run ./cagent-openai.yaml

The cagent chat interface opens with the multi-agent Bug Investigator loaded, and you are now in the agent's interactive chat.

Step 9: Test with a Python bug

Paste the following buggy code into the agent chat:

```
I have this Python code that's crashing:

def calculate_average(numbers):
    total = 0
    for num in numbers:
        total += num
    return total / len(numbers)

result = calculate_average([])
print(result)

Error: ZeroDivisionError: division by zero
```

The agent processes this through its multi-agent workflow:

- Investigator analyzes the error and identifies an empty list as the root cause

- Fixer implements a guard clause

- Tester generates comprehensive test cases

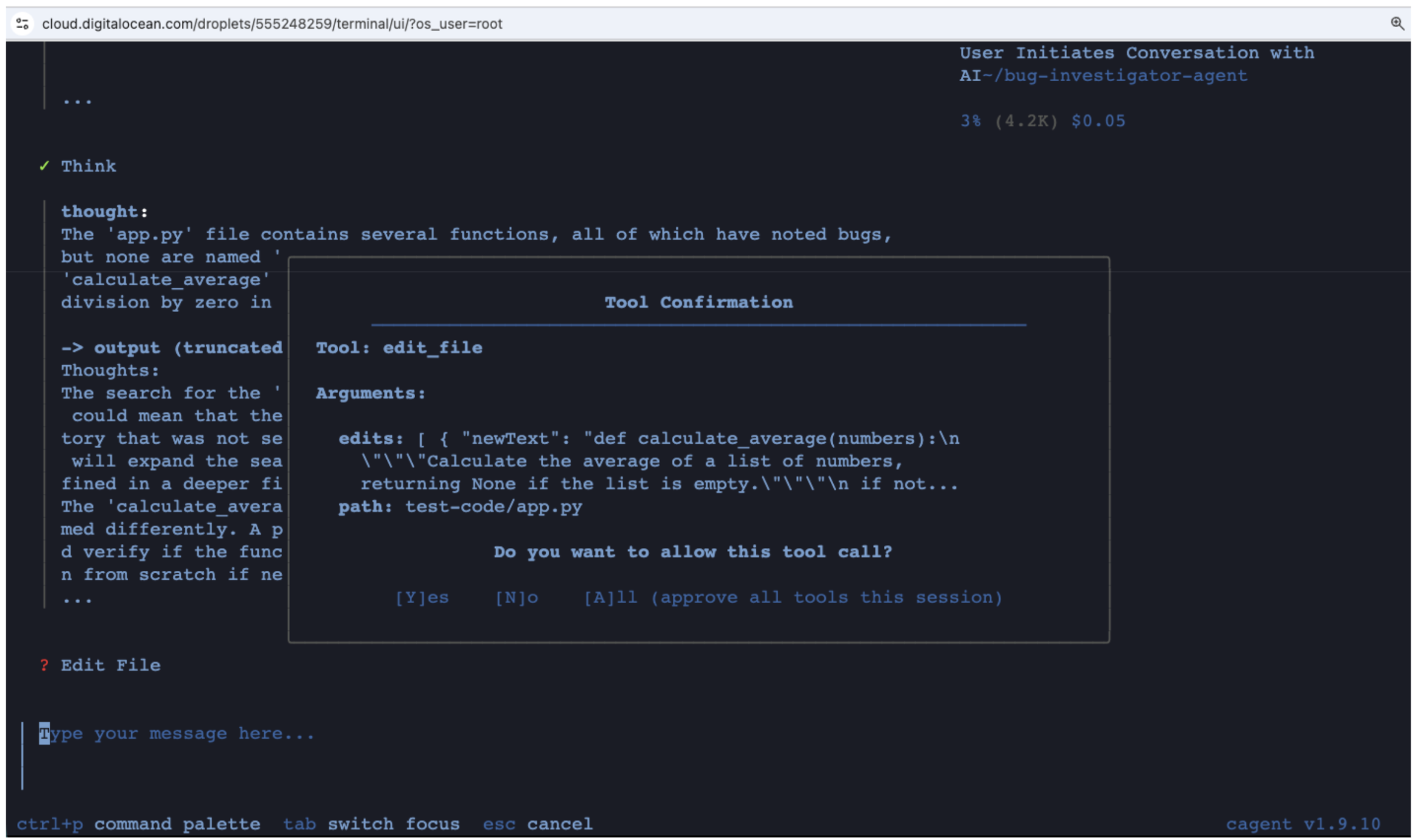

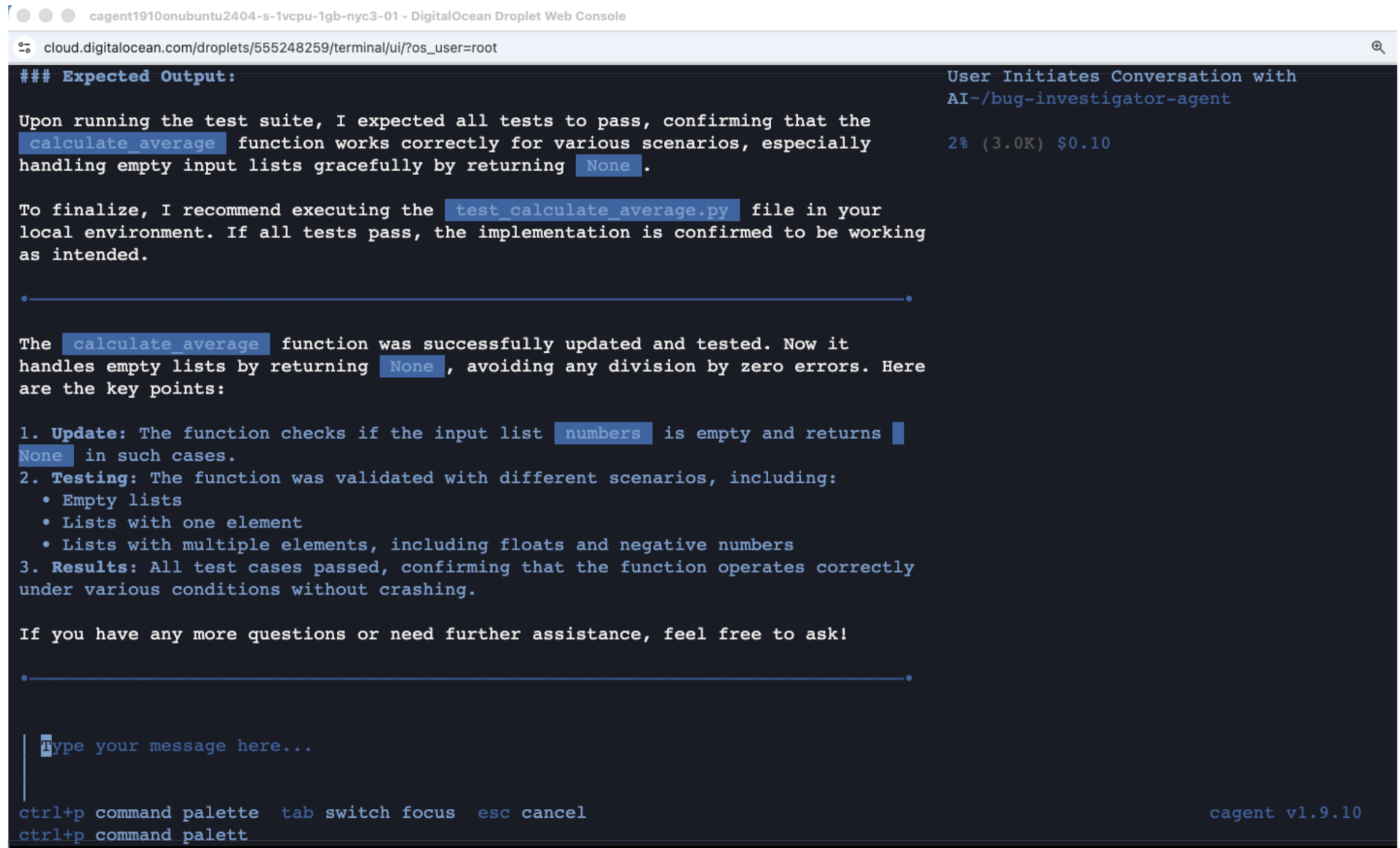

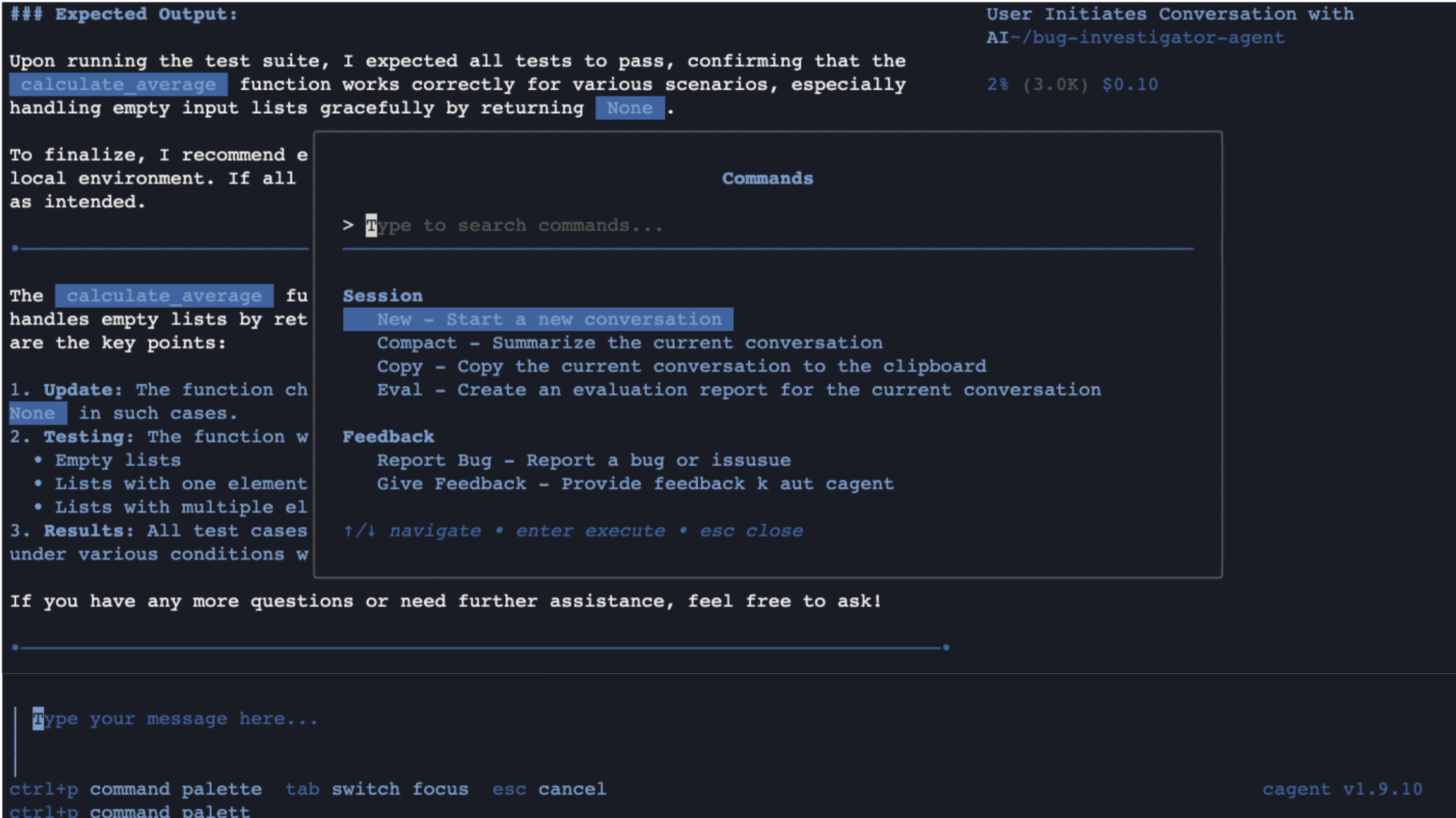

Figure 8: The Bug Investigator agent analyzing the ZeroDivisionError

Press Ctrl+p to open the command palette.

Figure 9: The Docker Agent command palette for additional actions

After processing completes, the agent has created a tests/ directory containing test_calculate_average.py. Verify the generated test file:

cat tests/test_calculate_average.py

Expected output:

```python
import unittest

def calculate_average(numbers):
    if not numbers:
        return None
    return sum(numbers) / len(numbers)

class TestCalculateAverage(unittest.TestCase):
    def test_empty_list(self):
        self.assertIsNone(calculate_average([]))

    def test_single_element(self):
        self.assertEqual(calculate_average([5]), 5)

    def test_multiple_elements(self):
        self.assertEqual(calculate_average([3, 5, 7]), 5.0)

    def test_floats(self):
        self.assertAlmostEqual(calculate_average([1.5, 2.5, 3.5]), 2.5)

    def test_negative_numbers(self):
        self.assertEqual(calculate_average([-1, -2, -3]), -2.0)

if __name__ == '__main__':
    unittest.main()
```

The agent:

- Fixed the bug by adding `if not numbers: return None`

- Created five test cases:

  - Empty list: returns `None`

  - Single element: returns that element

  - Multiple elements: calculates the average

  - Floats: handles decimals correctly

  - Negative numbers: averages negative values correctly
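Returning `None` is one reasonable design choice. A common alternative, sketched below as a hand-written example (not agent output), is to raise `ValueError` so an empty input cannot silently propagate `None` into later arithmetic. Which behavior is right depends on how your callers are expected to handle missing data.

```python
import unittest


def calculate_average(numbers):
    """Return the arithmetic mean; raise ValueError for an empty sequence."""
    if not numbers:
        raise ValueError("calculate_average() requires at least one number")
    return sum(numbers) / len(numbers)


class TestCalculateAverageRaises(unittest.TestCase):
    def test_empty_list_raises(self):
        with self.assertRaises(ValueError):
            calculate_average([])

    def test_multiple_elements(self):
        self.assertEqual(calculate_average([3, 5, 7]), 5.0)
```

Saved under a hypothetical name such as tests/test_average_raises.py, it runs with `python3 -m unittest tests/test_average_raises.py`. The file has no `if __name__ == '__main__'` block, so run it through unittest rather than with `python3` directly.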

Step 10: Run the tests

python3 tests/test_calculate_average.py

Expected output:

```
.....
----------------------------------------------------------------------
Ran 5 tests in 0.000s

OK
```

Here is a summary of what happened:

- Bug reported: ZeroDivisionError

- Agent diagnosed: Empty list causes division by zero

- Agent fixed: Added guard clause

- Agent tested: Five comprehensive test cases

- Tests pass: All five pass

Comparing cloud versus local model performance

Before diving into local model setup, here is how the two approaches compare for the same debugging task:

| Metric | Cloud API (GPT-4o) | Local Model (Qwen3 8B) |
|---|---|---|
| Response time | About 5 seconds | About 3 minutes (CPU) |
| Accuracy | Correct diagnosis and fix | Correct diagnosis and fix |
| Multi-agent coordination | Full support (all 4 agents) | Root agent only (simplified config) |
| Cost per request | $0.01 to $0.10 | $0.00 (Droplet cost only) |
| Data privacy | Code sent to external API | Code stays on your server |
| Minimum Droplet size | 1GB RAM ($6/month) | 8GB RAM ($48/month) |

Cloud APIs are fast and support the full multi-agent workflow. Local models keep your code private and cost nothing per request, but require more Droplet resources and run slower on CPU. For faster local inference, GPU Droplets provide significant speedups.

Configure local model inference (optional)

Cloud APIs are fast but send your code to external servers. For sensitive codebases or air-gapped environments, local inference keeps everything on your Droplet.

Your current Droplet has 1GB of RAM, but local models need at least 4GB.

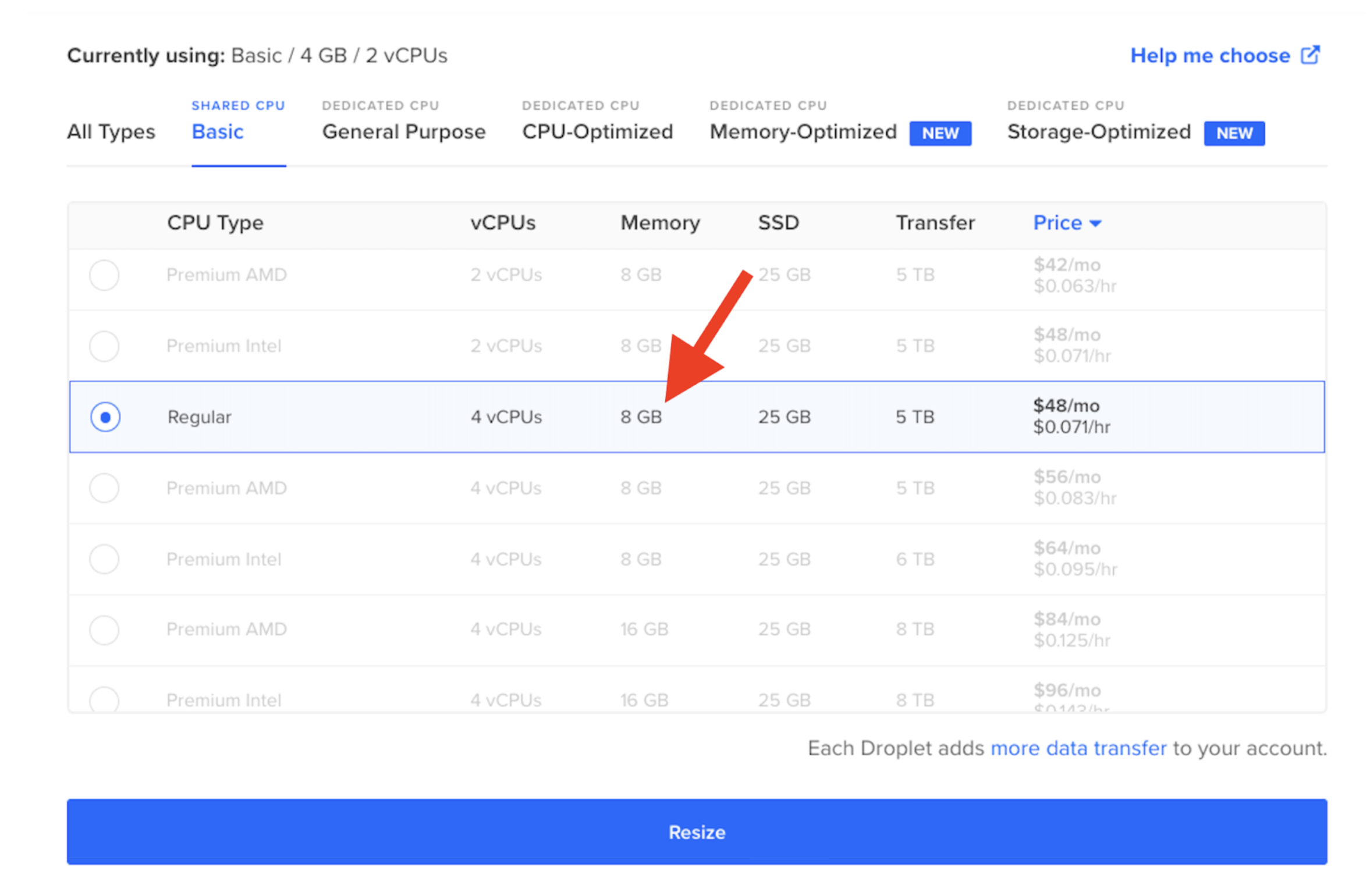

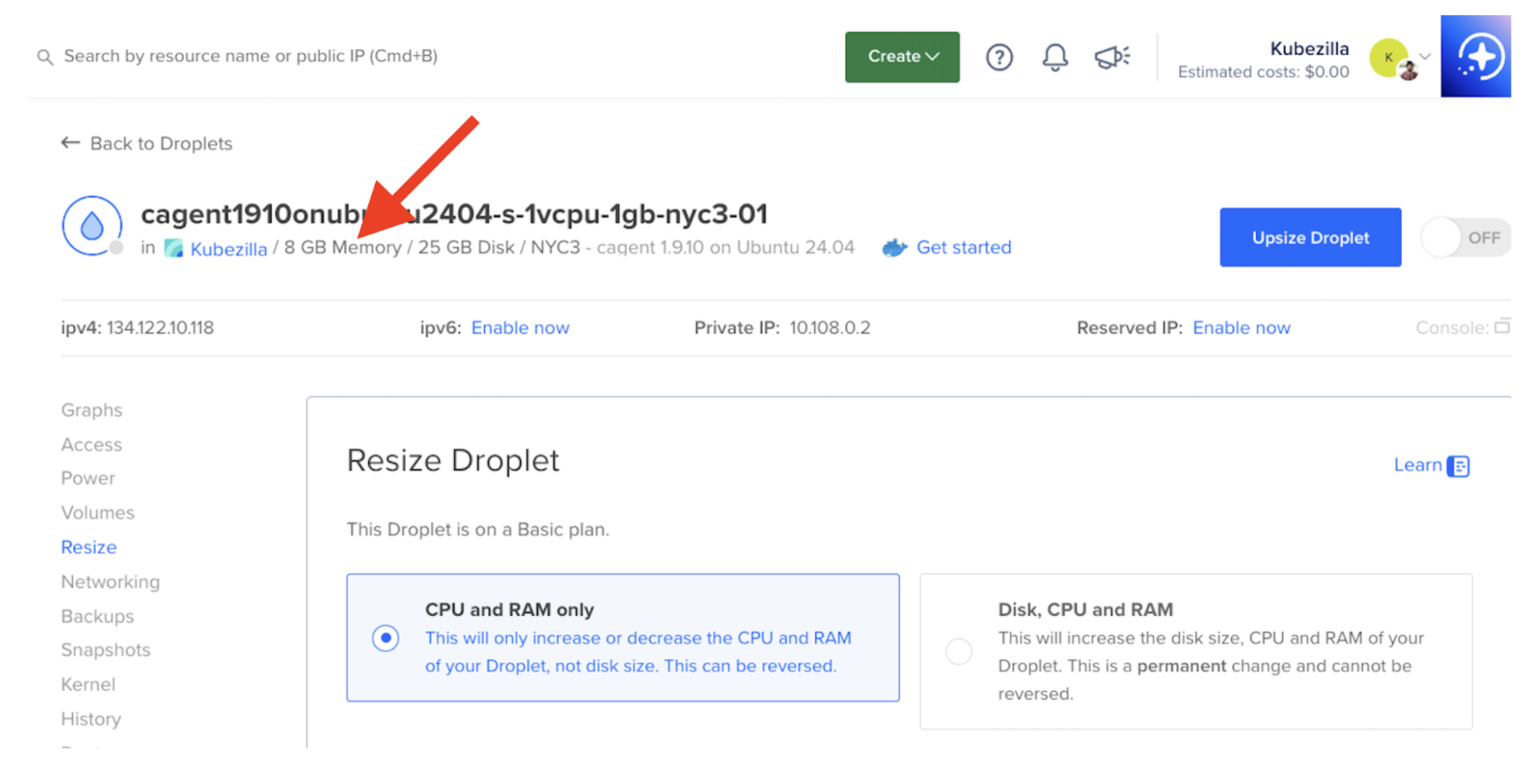

Resize the Droplet:

- Go to the DigitalOcean Console and click your Droplet

- Power off the Droplet

- Click Resize in the left menu

- Select the s-2vcpu-8gb plan ($48/month) for reliable local model performance

- Click Resize Droplet

For detailed instructions, see How To Resize Droplets.

Figure 10: Select the 8GB RAM plan for local model inference

Click the row, scroll down, and click Resize Droplet.

Figure 11: Confirm the Droplet resize operation

Power the Droplet back on and verify the new memory:

free -m

Expected output:

```
               total        used        free      shared  buff/cache   available
Mem:            7941         512        7189           4         477        7429
Swap:              0           0           0
```
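If you prefer a scripted check over reading `free` output, the following Python sketch parses /proc/meminfo (Linux only) and compares `MemAvailable` against the 4GB minimum mentioned above. The threshold and the function names are illustrative assumptions, not part of Docker Agent or Docker Model Runner.

```python
from pathlib import Path

MIN_AVAILABLE_GIB = 4  # assumption: the 4GB minimum stated in this tutorial


def parse_meminfo(text: str) -> dict[str, int]:
    """Parse lines such as 'MemAvailable:  7607296 kB' into {name: value}."""
    values: dict[str, int] = {}
    for line in text.splitlines():
        key, _, rest = line.partition(":")
        parts = rest.split()
        if parts and parts[0].isdigit():
            values[key.strip()] = int(parts[0])
    return values


def has_enough_memory(meminfo_text: str, min_gib: float = MIN_AVAILABLE_GIB) -> bool:
    """Compare MemAvailable (reported in kB, i.e. KiB) with min_gib GiB."""
    available_kib = parse_meminfo(meminfo_text).get("MemAvailable", 0)
    return available_kib >= min_gib * 1024 * 1024


if __name__ == "__main__":
    meminfo = Path("/proc/meminfo")
    if meminfo.exists():
        text = meminfo.read_text()
        gib = parse_meminfo(text).get("MemAvailable", 0) / (1024 * 1024)
        verdict = "enough" if has_enough_memory(text) else "not enough"
        print(f"MemAvailable: {gib:.1f} GiB ({verdict} for local models)")
    else:
        print("/proc/meminfo not found; this check only works on Linux")
```

`MemAvailable` is used rather than `MemFree` because it also counts reclaimable cache, which is closer to what a newly started model can actually use.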

Step 11: Install Docker Model Runner

Docker Model Runner lets you run AI models locally as part of the Docker ecosystem. Install it:

sudo apt-get update

sudo apt-get install -y docker-model-plugin

Verify the installation by listing available models:

docker model ls

Docker Model Runner downloads its runtime on first use. You will see output similar to:

```
latest: Pulling from docker/model-runner
...
Status: Downloaded newer image for docker/model-runner:latest
Creating model storage volume docker-model-runner-models...
Starting model runner container docker-model-runner...
MODEL NAME  PARAMETERS  QUANTIZATION  ARCHITECTURE  MODEL ID  CREATED  CONTEXT  SIZE
```

The empty model list confirms that Docker Model Runner is installed and running. Next, you will pull a model.

Step 12: Pull a model optimized for tool calling

Model selection significantly impacts agentic task performance. Based on Docker’s tool-calling evaluation, here is how popular models compare:

| Model | Tool Calling F1 Score | Recommendation |
|---|---|---|
| GPT-4 | 0.974 | Best overall (cloud) |
| Qwen3 8B | 0.919 | Best local option |
| Gemma3 4B | 0.733 | Insufficient for reliable tool use |
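For context, an F1 score is the harmonic mean of precision and recall. The sketch below computes it from counts of correct, spurious, and missed tool calls. Exactly how Docker's evaluation counts these cases is not described here, so treat the counting scheme as an assumption; the point is only to show what a score like 0.919 summarizes.

```python
def f1_score(true_positives: int, false_positives: int, false_negatives: int) -> float:
    """F1 = 2 * precision * recall / (precision + recall).

    true_positives:  tool calls the model made that were expected
    false_positives: tool calls the model made that were not expected
    false_negatives: expected tool calls the model never made
    """
    if true_positives == 0:
        return 0.0  # convention: no correct calls means an F1 of 0
    precision = true_positives / (true_positives + false_positives)
    recall = true_positives / (true_positives + false_negatives)
    return 2 * precision * recall / (precision + recall)


# Hypothetical counts, not taken from Docker's evaluation:
print(round(f1_score(true_positives=90, false_positives=5, false_negatives=10), 3))  # 0.923
```

Because F1 penalizes both unnecessary and missing tool calls, a model can score poorly either by calling tools when it should not or by answering from memory when it should have used a tool.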

Pull Qwen3 8B:

docker model pull ai/qwen3:8B-Q4_K_M

Verify the download:

docker model ls

Expected output:

```
MODEL NAME       PARAMETERS  QUANTIZATION    ARCHITECTURE  MODEL ID      CREATED        CONTEXT  SIZE
qwen3:8B-Q4_K_M  8.19 B      IQ2_XXS/Q4_K_M  qwen3         79fa56c07429  10 months ago           4.68 GiB
```

TCP support is enabled by default for Docker Engine on port 12434.
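Because Docker Model Runner listens on TCP port 12434, you can also query the model directly from Python, which is a quick way to confirm it responds before wiring it into Docker Agent. This standard-library sketch assumes the OpenAI-compatible path /engines/v1/chat/completions; verify the path against the Docker Model Runner documentation for your installed version. CPU inference is slow, hence the generous timeout.

```python
import json
import urllib.request

# Assumption: DMR's OpenAI-compatible chat endpoint on the default TCP port.
DMR_URL = "http://localhost:12434/engines/v1/chat/completions"
MODEL = "ai/qwen3:8B-Q4_K_M"


def build_request(prompt: str, model: str = MODEL, max_tokens: int = 512) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for Docker Model Runner."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        DMR_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, timeout_seconds: float = 600) -> str:
    """Send the prompt and return the first choice's text."""
    # CPU inference can take minutes, so the timeout is deliberately long.
    with urllib.request.urlopen(build_request(prompt), timeout=timeout_seconds) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

From an interactive `python3` session on the Droplet, calling `ask("Why does dividing by len([]) fail?")` should return the model's reply once inference finishes.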

Step 13: Update the local model configuration

The default cagent-local.yaml may reference a different model. Update it to use the Qwen3 model you pulled:

sed -i 's/ai\/gemma3:2B-Q4_0/ai\/qwen3:8B-Q4_K_M/g' cagent-local.yaml

Verify the change:

grep model cagent-local.yaml

Expected output:

```
# docker model pull ai/qwen3:8B-Q4_K_M
models:
  local-model:
    model: ai/qwen3:8B-Q4_K_M
    model: local-model
```

Review the full configuration:

```yaml
version: "2"

models:
  local-model:
    provider: dmr
    model: ai/qwen3:8B-Q4_K_M
    max_tokens: 4096

agents:
  root:
    model: local-model
    description: Debugging assistant that helps fix code issues
    instruction: |
      You are a helpful debugging assistant. When a developer shares an error:
      1. **Analyze** the error message and stack trace
      2. **Identify** the root cause
      3. **Explain** what went wrong in simple terms
      4. **Provide** a working fix with code
      Be concise. Focus on actionable solutions.
    toolsets:
      - type: filesystem
      - type: think
      - type: todo
```

The local configuration uses a single root agent (rather than the multi-agent setup used with cloud APIs) because local models on CPU work best with focused, single-agent tasks.

Step 14: Run with local model

cagent run ./cagent-local.yaml

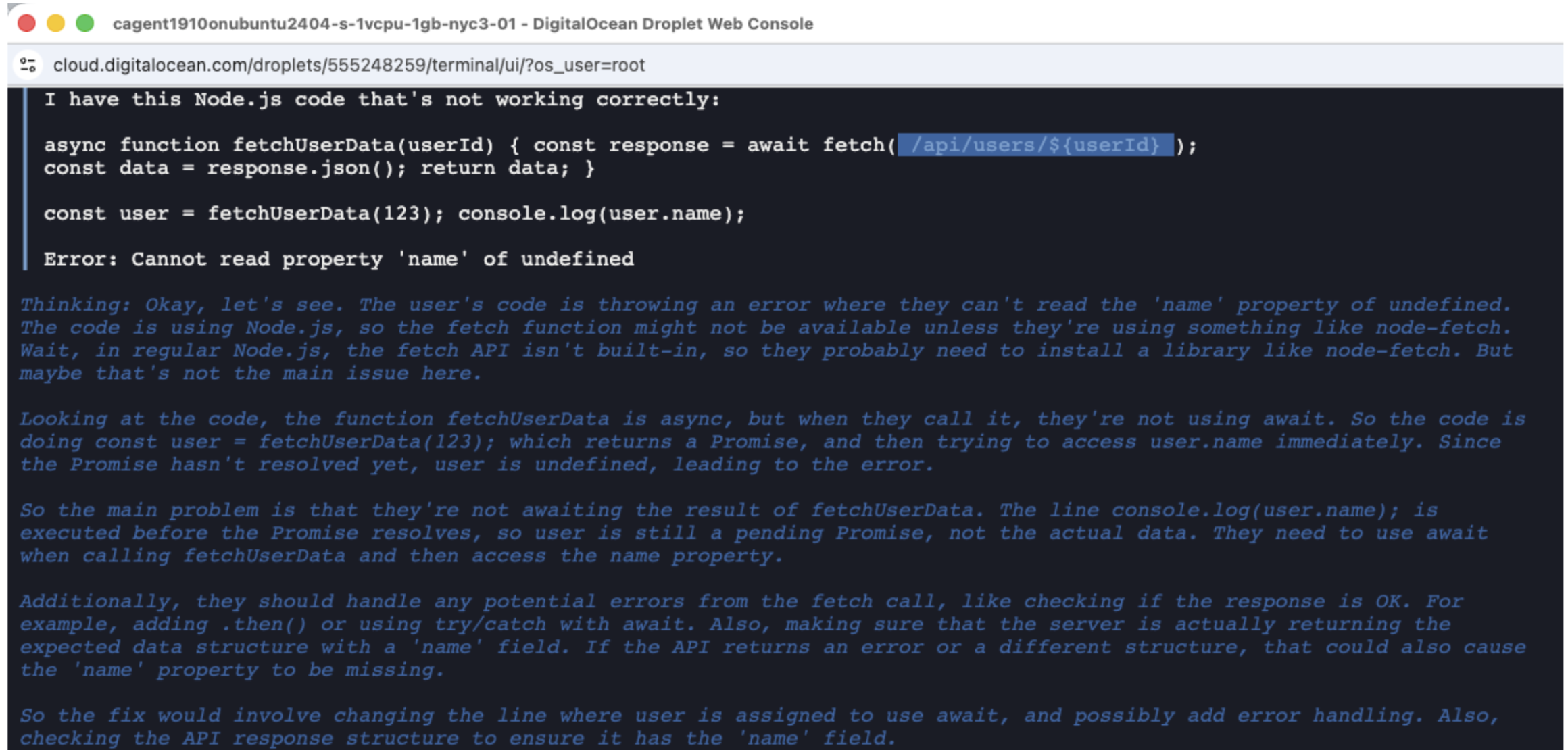

Try this JavaScript async bug:

```
I have this Node.js code that's not working correctly:

async function fetchUserData(userId) {
  const response = await fetch(`/api/users/${userId}`);
  const data = response.json();
  return data;
}

const user = fetchUserData(123);
console.log(user.name);

Error: Cannot read property 'name' of undefined
```

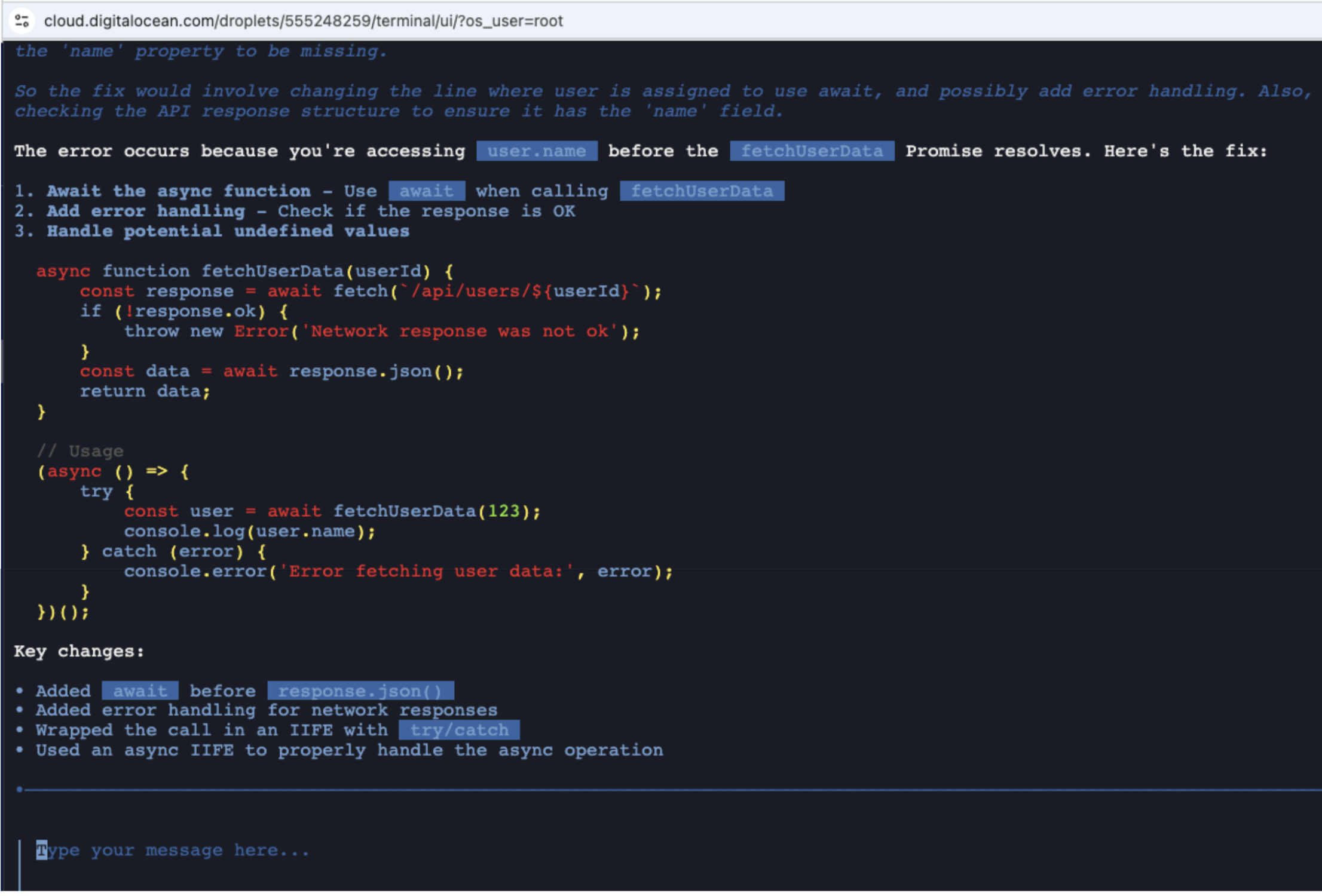

The local model correctly identifies the issue:

“The function `fetchUserData` is async, but when they call it, they are not using `await`. So the code is doing `const user = fetchUserData(123);` which returns a Promise… Since the Promise has not resolved yet, user is undefined, leading to the error.”

Figure 12: Local model correctly diagnosing the missing await keywords

The local model’s diagnosis: the error occurs because user.name is accessed before the fetchUserData Promise resolves.

The agent suggested these key changes:

- Added `await` before `response.json()`

- Added error handling for network responses

- Wrapped the call in an async IIFE with `try/catch`

- Applied proper async handling throughout

Cost: $0.00 (local model, no API charges)

Note: Local models on CPU work correctly but are slow (roughly two to five minutes per response). For production speed, use GPU Droplets or cloud APIs. For privacy-sensitive tasks where speed is not the top priority, CPU inference is a viable option.

Step 15: Push to Docker Hub

Share your agent configuration by publishing it as an OCI artifact:

docker login

cagent push ./cagent-openai.yaml docker.io/YOUR_DOCKERHUB_USERNAME/bug-investigator:latest

Replace YOUR_DOCKERHUB_USERNAME with your Docker Hub username. When docker login prompts you, follow the browser-based authentication flow.

Expected output:

```
Pushing agent ./cagent-openai.yaml to docker.io/YOUR_DOCKERHUB_USERNAME/bug-investigator:latest
Successfully pushed artifact to docker.io/YOUR_DOCKERHUB_USERNAME/bug-investigator:latest
```

Your Bug Investigator Agent is now live on Docker Hub.

Anyone with Docker Agent installed can pull and run it:

cagent run docker.io/YOUR_DOCKERHUB_USERNAME/bug-investigator:latest
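The reference passed to cagent push and cagent run follows the familiar registry/namespace/repository:tag shape used for OCI artifacts. As an illustration only, this hypothetical Python helper splits a fully qualified reference like the one above into its parts, which can be handy when scripting pushes for several agent configurations. It is deliberately simplified: it expects the registry to be present and ignores digests (@sha256:...).

```python
from typing import NamedTuple


class ArtifactRef(NamedTuple):
    registry: str
    repository: str
    tag: str


def parse_ref(ref: str, default_tag: str = "latest") -> ArtifactRef:
    """Split 'registry/namespace/name:tag' into parts (simplified; no digest support)."""
    registry, _, remainder = ref.partition("/")  # the registry may carry a port, e.g. host:5000
    if ":" in remainder:
        repository, _, tag = remainder.rpartition(":")
    else:
        repository, tag = remainder, default_tag
    return ArtifactRef(registry, repository, tag)


print(parse_ref("docker.io/YOUR_DOCKERHUB_USERNAME/bug-investigator:latest"))
```

Splitting off the registry first keeps a port number in the registry host from being mistaken for a tag.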

Understanding the YAML configuration in depth

The YAML-based configuration is what makes Docker Agent different from other agent frameworks that require Python or JavaScript orchestration code. Here is the structure of the OpenAI configuration used in this tutorial:

```yaml
version: "2"

models:
  openai-main:
    provider: openai
    model: gpt-4o
    max_tokens: 4096
  openai-mini:
    provider: openai
    model: gpt-4o-mini
    max_tokens: 4096

agents:
  root:
    model: openai-main
    description: Bug investigator that analyzes errors and coordinates fixes
    instruction: |
      You are an expert bug investigator. When given an error:
      1. Analyze the error message and stack trace
      2. Identify the root cause
      3. Delegate to researcher for documentation lookup
      4. Delegate to fixer for code correction
      5. Delegate to tester for test generation
    sub_agents: [researcher, fixer, tester]
    toolsets:
      - type: filesystem
      - type: think

  researcher:
    model: openai-mini
    description: Searches documentation and finds solutions
    instruction: |
      Search for relevant documentation and similar issues.
      Provide links and context for the fix.
    toolsets:
      - type: mcp
        ref: docker:duckduckgo

  fixer:
    model: openai-main
    description: Writes corrected code
    instruction: |
      Write minimal, targeted fixes for diagnosed bugs.
      Include proper error handling.
    toolsets:
      - type: filesystem
      - type: shell

  tester:
    model: openai-mini
    description: Generates test cases
    instruction: |
      Generate comprehensive test cases for the fix.
      Cover edge cases, positive cases, and negative cases.
    toolsets:
      - type: filesystem
```

Key points in this configuration:

- Two model tiers: The root agent and fixer use `gpt-4o` for complex reasoning, while the researcher and tester use `gpt-4o-mini` to reduce costs.

- Sub-agent delegation: The `sub_agents` field on the root agent defines which agents it can delegate to.

- Toolsets: Each agent gets only the tools it needs. The researcher gets web search (via MCP), the fixer gets filesystem and shell access, and the tester gets filesystem access to write test files.

- No orchestration code: The entire multi-agent workflow is defined declaratively in YAML.
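Because the whole workflow lives in configuration, some structural mistakes, such as a misspelled sub-agent name or an agent pointing at an undefined model, are easy to catch before launching. This Python sketch checks those rules against the configuration above, written as a plain dictionary so it needs no third-party YAML library (with PyYAML installed you could load the file with `yaml.safe_load` instead). The function and its rules are illustrative, not a Docker Agent feature.

```python
def find_config_errors(config: dict) -> list[str]:
    """Return human-readable problems with model and sub-agent references."""
    errors: list[str] = []
    models = config.get("models", {})
    agents = config.get("agents", {})
    # Assumption: the entry point is named "root", as in this tutorial.
    if "root" not in agents:
        errors.append("no 'root' agent defined")
    for name, agent in agents.items():
        model = agent.get("model", "")
        # Assumption: "provider/model" strings are inline references and are not checked.
        if "/" not in model and model not in models:
            errors.append(f"agent '{name}' uses undefined model '{model}'")
        for sub in agent.get("sub_agents", []):
            if sub not in agents:
                errors.append(f"agent '{name}' delegates to undefined agent '{sub}'")
    return errors


# The OpenAI configuration from this section, reduced to the fields being checked.
CONFIG = {
    "models": {"openai-main": {}, "openai-mini": {}},
    "agents": {
        "root": {"model": "openai-main", "sub_agents": ["researcher", "fixer", "tester"]},
        "researcher": {"model": "openai-mini"},
        "fixer": {"model": "openai-main"},
        "tester": {"model": "openai-mini"},
    },
}

print(find_config_errors(CONFIG) or "configuration references look consistent")
```

Running a check like this in CI before `cagent push` keeps a broken reference from reaching everyone who pulls the shared agent.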

Customizing agent behavior with MCP tools

The Model Context Protocol (MCP) is a standard for connecting AI models to external tools and data sources. Docker Agent integrates with MCP servers, letting you extend agent capabilities beyond the built-in toolsets.

For example, you can add GitHub integration to your bug investigator so it can read issues and create pull requests:

```yaml
toolsets:
  - type: mcp
    ref: docker:github
    config:
      env:
        GITHUB_TOKEN: ${GITHUB_TOKEN}
```

Or add a database tool so agents can query logs:

```yaml
toolsets:
  - type: mcp
    ref: docker:postgres
    config:
      env:
        DATABASE_URL: ${DATABASE_URL}
```

The Docker MCP Catalog provides pre-built MCP servers for common integrations including Slack, Jira, and various databases.
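Configurations like these reference secrets through `${VAR}` placeholders, so a small pre-flight check can confirm those variables are exported before you run cagent. This Python sketch (a hypothetical helper, not part of Docker Agent) scans a configuration file's text for `${NAME}` placeholders and reports any that are unset or empty in the current environment.

```python
import os
import re
from pathlib import Path
from typing import Optional

# Matches ${NAME}; bare $NAME is deliberately ignored.
PLACEHOLDER = re.compile(r"\$\{([A-Za-z_][A-Za-z0-9_]*)\}")


def missing_env_vars(config_text: str, env: Optional[dict[str, str]] = None) -> list[str]:
    """Return sorted placeholder names that are unset or empty in env (default: os.environ)."""
    env = dict(os.environ) if env is None else env
    names = sorted(set(PLACEHOLDER.findall(config_text)))
    return [name for name in names if not env.get(name)]


if __name__ == "__main__":
    import sys

    # Usage: python3 check_env.py my-agent.yaml [more.yaml ...]
    for path in sys.argv[1:]:
        missing = missing_env_vars(Path(path).read_text())
        print(f"{path}: " + (", ".join(missing) if missing else "all placeholders set"))
```

The check only sees explicit placeholders, so provider keys such as OPENAI_API_KEY, which the configurations in this tutorial do not reference as `${...}`, still need to be exported as shown in Step 8.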

FAQs

1. What is a multi-agent AI system?

A multi-agent AI system consists of multiple AI agents working collectively to perform tasks. Each agent has a specialized role and set of tools, and a coordinator (the root agent) delegates work to the right specialist. In Docker Agent, you define this structure in YAML: the root agent receives the task, analyzes it, and routes subtasks to agents like a researcher, fixer, or tester. This is more effective than a single-agent approach for complex tasks because each agent stays focused on what it does best.

2. Is Docker Agent suitable for production use?

For internal team tooling and developer workflows, yes. The 1-Click Droplet on DigitalOcean provides a stable, reproducible environment for running agent teams. For customer-facing applications, add rate limiting, error handling, and human review of suggested fixes. The agent occasionally produces incorrect fixes, so treat it as a fast assistant that requires code review rather than a fully autonomous system.

3. Can I use Anthropic Claude instead of OpenAI?

Yes. The bug-investigator-agent repository includes cagent-anthropic.yaml pre-configured for Claude models. Set the ANTHROPIC_API_KEY environment variable and run:

export ANTHROPIC_API_KEY=sk-ant-XXXXXXXXXXX

cagent run ./cagent-anthropic.yaml

Docker Agent supports multiple providers, including OpenAI, Anthropic, Google Gemini, and local models through Docker Model Runner.

4. How does Docker Agent compare to other agent frameworks?

Docker Agent takes a declarative, YAML-first approach. Frameworks like LangGraph, CrewAI, and AutoGen require Python or JavaScript code to define agent workflows. Docker Agent does not need any programming language to define agents, their tools, or their coordination. The tradeoff is flexibility: code-based frameworks offer more control over agent logic, while Docker Agent prioritizes simplicity and portability. If your agents follow standard patterns (delegation, tool use, sub-agent coordination), the YAML approach is faster to set up and easier to share.

5. What are the ongoing costs for running this setup?

The costs break down as follows:

- Droplet: $6/month for cloud API agents (1GB RAM), or $48/month for local model inference (8GB RAM)

- OpenAI API: Roughly $0.01 to $0.10 per debugging session, depending on the model and token usage

- Local models: $0 per request (only the Droplet cost applies)

For teams running many debugging sessions per day, local models on a larger Droplet can be more cost-effective than cloud API charges. For occasional use, the $6/month Droplet with cloud APIs is the most economical option.
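Using the figures above, you can estimate where the break-even point falls. The sketch below treats the $48/month Droplet as the local option and the $6/month Droplet plus per-session API charges as the cloud option. It ignores GPU Droplets, engineering time, and the slower CPU response time, so treat it as rough arithmetic rather than a pricing tool.

```python
def monthly_cost_cloud(sessions: int, cost_per_session: float, droplet: float = 6.0) -> float:
    """$6/month Droplet plus API charges for each debugging session."""
    return droplet + sessions * cost_per_session


def monthly_cost_local(droplet: float = 48.0) -> float:
    """8GB Droplet only; local inference adds no per-request charge."""
    return droplet


def break_even_sessions(cost_per_session: float, cloud_droplet: float = 6.0,
                        local_droplet: float = 48.0) -> float:
    """Sessions per month above which the local setup becomes cheaper."""
    return (local_droplet - cloud_droplet) / cost_per_session


for per_session in (0.01, 0.10):
    n = break_even_sessions(per_session)
    print(f"${per_session:.2f}/session: local is cheaper above ~{n:,.0f} sessions/month")
```

With the tutorial's range of $0.01 to $0.10 per session, the break-even lands between roughly 420 and 4,200 sessions per month, or about 14 to 140 per day.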

Conclusion

In this tutorial, you deployed a Docker Agent Droplet on DigitalOcean using the 1-Click Marketplace image, built a multi-agent bug investigator, tested it with real Python and JavaScript bugs, configured local model inference with Docker Model Runner, and published your agent to Docker Hub as a shareable OCI artifact.

The multi-agent architecture demonstrated here (investigator, researcher, fixer, and tester) is a pattern you can adapt to many workflows beyond debugging. The same YAML-based configuration works for code review pipelines, documentation generators, security scanners, or any task where specialized agents outperform a single generalist.

Docker Agent’s declarative approach to agent configuration means you can version control, share, and iterate on agent designs the same way you manage Docker Compose files or Kubernetes manifests. Combined with DigitalOcean’s Droplet infrastructure, you get a reproducible and portable environment for building agentic AI workflows.

Next steps

After completing this tutorial, consider these extensions:

- Add more specialized agents: Create a Security Analyzer or Performance Optimizer agent by adding new entries to the `agents` section of your YAML configuration

- Integrate MCP tools: Add GitHub, Slack, or database tools through the Docker MCP Catalog

- Deploy as a service: Configure `systemd` to run the agent continuously on your Droplet

- Explore GPU Droplets: For faster local inference, DigitalOcean GPU Droplets provide significant speedups over CPU

- Try the Anthropic backend: The repository includes `cagent-anthropic.yaml` for Claude models

Resources

- Source code: github.com/ajeetraina/bug-investigator-agent

- Docker Hub: hub.docker.com/r/ajeetraina777/bug-investigator

- Docker Agent documentation: docs.docker.com/ai/docker-agent

- DigitalOcean Docker Agent Marketplace: marketplace.digitalocean.com/apps/cagent

- Docker tool-calling evaluation: docker.com/blog/local-llm-tool-calling-a-practical-evaluation

- Docker Model Runner documentation: docs.docker.com/ai/model-runner

- Types of AI agents: digitalocean.com/resources/articles/types-of-ai-agents

Try DigitalOcean for your next AI project

Ready to build your own multi-agent AI system? DigitalOcean Droplets provide a fast, affordable starting point. Deploy the Docker Agent 1-Click App in under five minutes, or explore GPU Droplets for accelerated local inference. Browse the full DigitalOcean Marketplace for more 1-Click Apps that simplify deployment.


About the author(s)

Ajeet Singh Raina is a Developer Advocate at Docker, helping developers build confidently from laptop to production. With over a decade of experience in DevOps and developer tooling, he has contributed documentation, applications, and workshops spanning Docker Desktop, Docker Compose, Docker Extensions, MCP, and Docker Agents.

I help Businesses scale with AI x SEO x (authentic) Content that revives traffic and keeps leads flowing | 3,000,000+ Average monthly readers on Medium | Sr Technical Writer(Team Lead) @ DigitalOcean | Ex-Cloud Consultant @ AMEX | Ex-Site Reliability Engineer(DevOps)@Nutanix
