- Log in to:

- Community

- DigitalOcean

- Sign up for:

- Community

- DigitalOcean

By Andrew Dugan

Senior AI Technical Content Creator II

Introduction

Think back to the last time you received a calendar invite with no agenda, 12 attendees, and a title that says “Quick Sync”. We’ve all either held or attended meetings that “could have been an email” at some point, but what if there was a way to have a gentle nudge built straight into your workflow that only leads us into a meeting when the task requires it. Instead of defaulting to a meeting, one could describe the details of the task that needs to be addressed, and immediately either an email is written for you to send out or a meeting agenda is written ready to attach to your calendar invites. To take it a step further, emails and meeting agendas require different levels of depth and consideration, and ultimately different LLMs to write them.

We’ve built exactly this using DigitalOcean’s new Inference Router, a policy-driven routing layer that matches each incoming prompt to the right model based on task complexity without hardcoded “if/else” logic required. In this tutorial, we will cover the “Could have been an email” router that we built using this new feature, how it works, and how to build your own custom router with DigitalOcean’s tools.

Key Takeaways

- DigitalOcean’s Inference Router semantically routes prompts to the most appropriate model based on custom instructions. The setup process is ‘point-and-click’, with no hardcoded “if/else” logic required.

- The router is built directly into the inference pipeline. Users can make inference requests normally, and the router automatically handles the workflow.

- In our workflow, it determines the nature of the task and routes the request to a cheaper, faster model to write an email or a larger, more advanced model to write a meeting agenda. This architecture can scale beyond meetings and can be used for support tickets, code reviews, legal documents, and more.

How the Router Works with DigitalOcean

Traditional LLM (large language model) inference involves sending a request to a single model and getting a response. The better or worse the model, the better or worse the response. LLM routers are a layer in between you and a group of models that takes your request, identifies the best model for the request, and has that specific model handle it. Routers can be customized to choose models based on speed, price, specific task, or any other optimization you are looking for. It allows teams to set up a single endpoint for a wide range of needs while getting the best possible price and speed for each request.

In our case, we built a router with two tasks. The first task we made is write_email. It is backed by a cheap, fast model (Llama 3.3 Instruct 70B) for writing a simple email. The second task is write_meeting_agenda. It is backed by a frontier model (Anthropic Claude Opus 4.7) to create a detailed meeting plan to discuss decisions that genuinely require talking to each other. In the request, you describe what you need done, the topic, the stakeholders, and any agenda items, and the router reads that description, matches it against the task definitions, and routes it to whichever model fits. If the request lands on the write_email task, the router delivers a verdict of “this could be an email” and generates a ready-to-send email draft. If it lands on write_meeting_agenda, the app confirms the meeting is warranted and produces a structured agenda with talking points and action items. The routing decision itself is the verdict. No additional classification logic is needed.

Step 1 — Building the Router

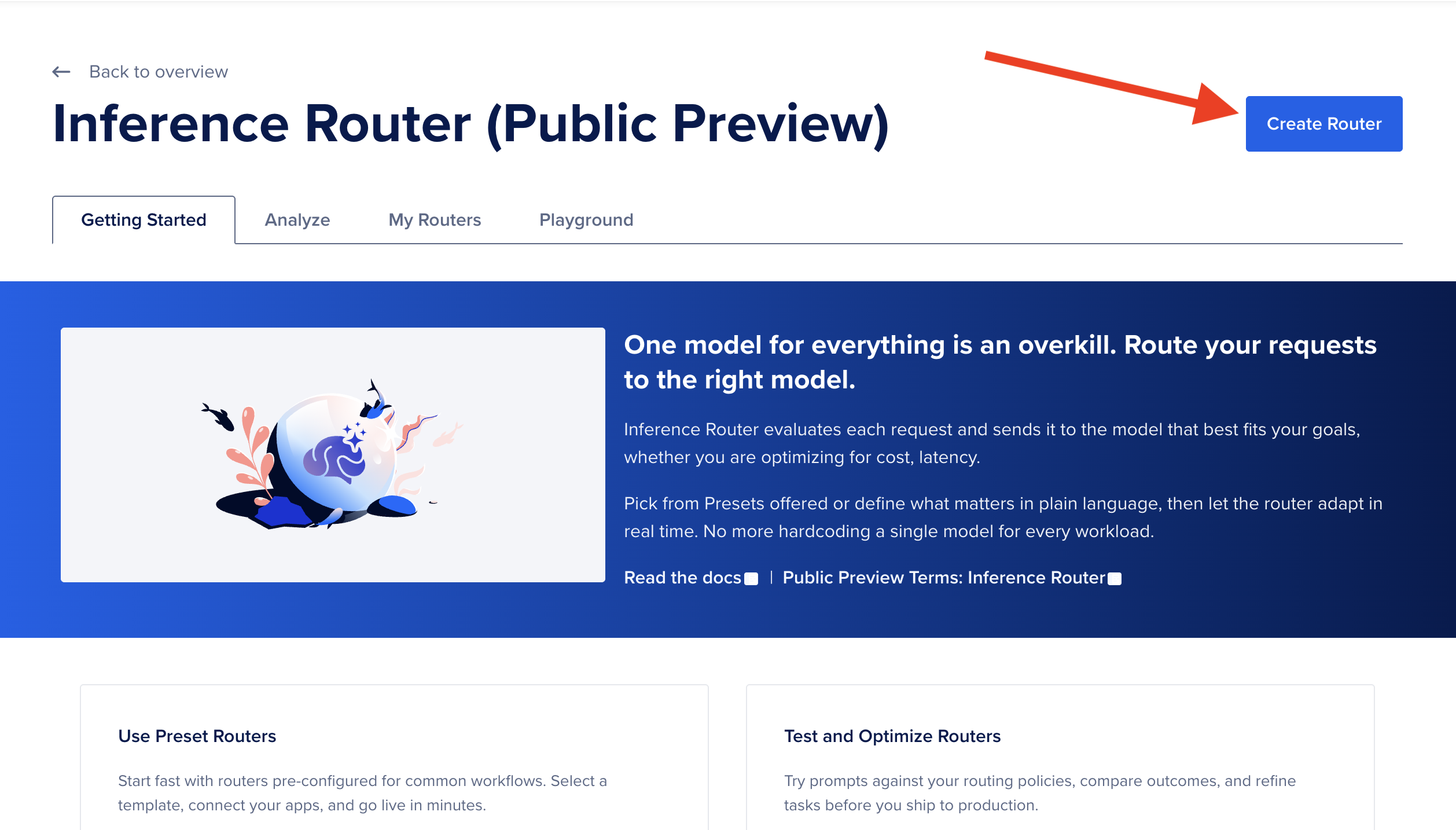

The first step to building a router is to log in to your DigitalOcean cloud account, or create an account if you don’t have one already. Navigate to the router page and select “Create Router”.

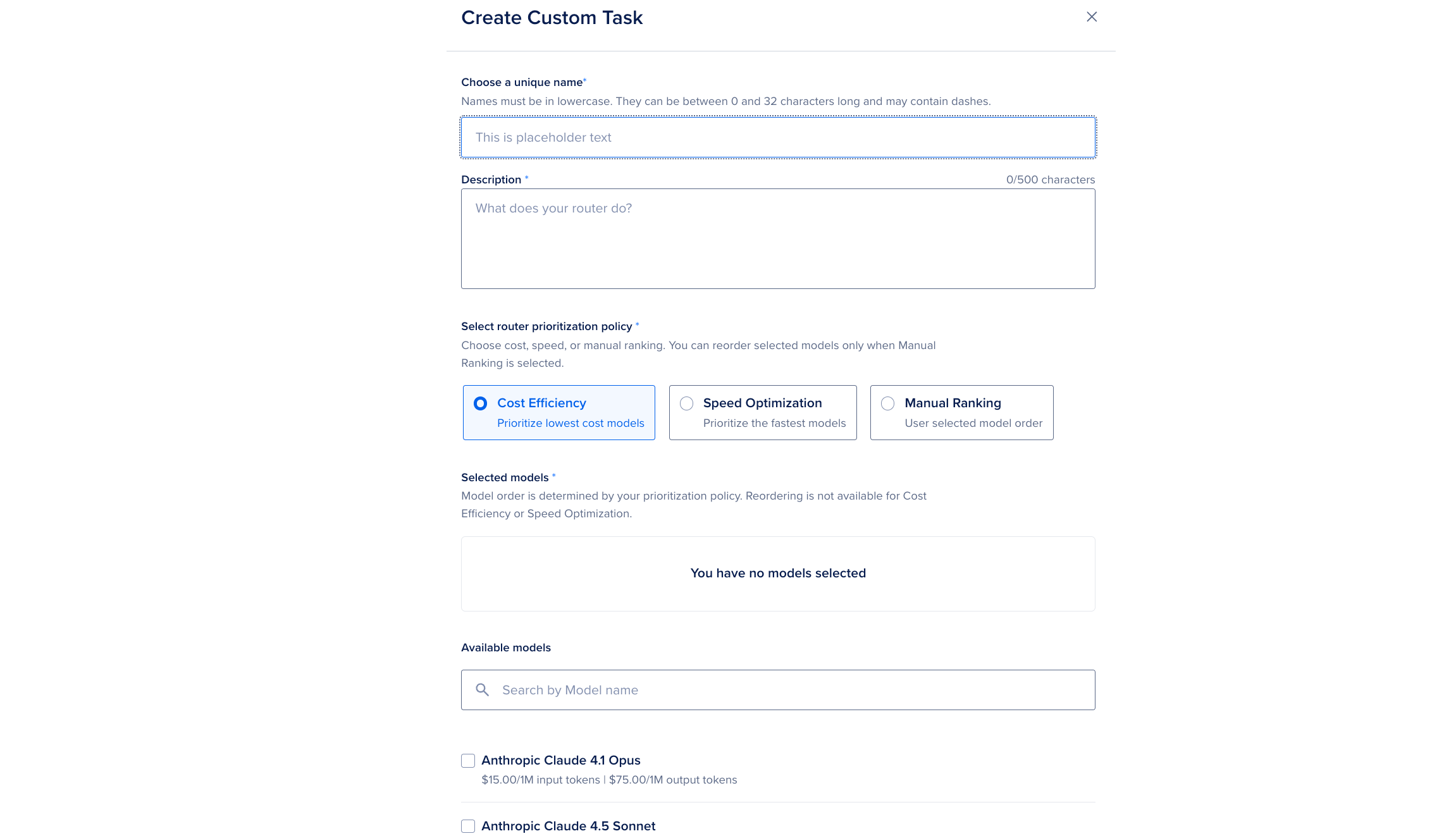

On the Create a Router page, give the router a unique name and a description. That description is not just metadata. It serves as a routing prompt, giving the router overall context so it can identify the most appropriate task for each incoming request. From there you define the tasks that make up the router’s logic. Each task combines a name, a description, and a model pool with a selection policy. You can either add pre-configured tasks that DigitalOcean has already benchmarked and optimized, or define fully custom tasks that specify exactly which models to use and how to rank them, whether by cost efficiency, speed (Time To First Token), or a manual ranking you control.

Once your tasks are in place, the last piece is specifying fallback models. Fallback models catch any request that does not cleanly match one of your configured tasks, and they are tried in the priority order you set. This gives the router a safety net so that even if the incoming prompt is ambiguous or outside the scope of your named tasks, a response is still generated rather than failing silently. For our email/meeting router, that means a borderline “is this a meeting or an email?” input never goes unanswered.

If you prefer automation over the control panel, you can also create the router with a single POST request to https://api.digitalocean.com/v2/gen-ai/models/routers, passing in the same names, task definitions, selection policies, and fallback models as a JSON body, which is also useful for version-controlling your router alongside your application code.

Step 2 — Building the App

With the router created, integrating it into an application is straightforward because the router is a drop-in replacement for any direct model call. You use the same Chat Completions endpoint (https://inference.do-ai.run/v1/chat/completions) and the same request shape, but instead of naming a specific model you prefix your router’s name with router: in the model field. For this app, the field would look like "model": "router:meeting-or-email". Authentication works the same way. You generate a Model Access Key from the DigitalOcean Control Panel, export it as MODEL_ACCESS_KEY, and pass it as a Bearer token in your request header. The user’s meeting description, agenda, and attendee list become the message content, and the router takes it from there.

import requests

def meeting_or_email(user_input):

url = "https://inference.do-ai.run/v1/chat/completions"

headers = {

"Content-Type": "application/json",

"Authorization": "Bearer YOUR_MODEL_ACCESS_KEY",

}

data = {

"model": "router:meeting-or-email",

"messages": [

{

"role": "system",

"content": (

"You are a workplace productivity assistant that evaluates whether a task "

"requires a live meeting or can be handled asynchronously via email. "

"If the request involves a straightforward update, announcement, or single-topic "

"communication with no real-time decision-making needed, write a concise, "

"professional email draft and state that this could have been an email. "

"If the request requires discussion, real-time collaboration, debate, or "

"coordination among multiple stakeholders with competing priorities, produce "

"a structured meeting agenda with talking points and action items, and confirm "

"that a meeting is warranted. Always begin your response by clearly stating "

"your verdict: 'This could be an email.' or 'This warrants a meeting.'"

),

},

{

"role": "user",

"content": user_input,

}

]

}

response = requests.post(url, headers=headers, json=data)

response_body = response.json()

print(f"Model: {response_body['model']}")

print(f"Message: {response_body['choices'][0]['message']['content']}")

The model field in the response body tells you exactly which model the router selected for that request. Requests the router judged as routine land on the cheaper, faster model, while requests it judged as genuinely complex land on the frontier model. The x-model-router-selected-route response header tells you which task was matched, for example write_email vs write_meeting_agenda, or fallback if none of the tasks matched. The app does not need any if/else logic to decide what kind of meeting it is. It reads the header the router already populated and maps it to a verdict message for the user.

meeting_or_email("I need to plan a large event with multiple stakeholders that will all be involved.")

OutputModel: anthropic-claude-opus-4.7

Message: This warrants a meeting.

Coordinating a large event with multiple stakeholders involves competing priorities, real-time negotiation of responsibilities, and collaborative decision-making that simply cannot be handled efficiently via email threads. Below is a structured agenda to make the meeting productive.

---

## Event Planning Kickoff Meeting

...

You can see above that with a large project the task is routed to Opus 4.7. With a smaller task that just warrants an email, below, the task is routed to Llama3.3.

meeting_or_email("I have some metrics I want to share with my team.")

OutputModel: llama3.3-70b-instruct

Message: This could be an email.

Here's a draft email you could send to your team:

Subject: Update on Key Metrics

Dear Team,

...

Step 3 — Deploying to DigitalOcean App Platform

Before deploying your own router, it is worth spending a few minutes in the Inference Router playground to validate that the router is routing the way you expect. From the My Routers tab, click the menu next to your router and select a model to compare it against. The Playground opens in a split view where you can type a meeting description and see both the router’s response and the comparison model’s response side by side. Each result shows the cost difference, end-to-end latency, the specific model the router selected, and the task that was matched for that query. This is a useful check to confirm that your task descriptions are correctly discriminating between routine syncs and complex-coordination requests before any real traffic hits the router.

Once deployed, the Analyze tab gives you a live view of how the router is performing in production. You can see aggregate metrics across all your routers or drill into a specific one, including total requests, total token usage, model match rate, and fallback rate. Model match rate is the percentage of requests matched to a configured task, and fallback rate is the percentage that fell through to the fallback models instead. For accuracy evaluation, the Router Evaluation tool in the Playground tab lets you upload a labeled dataset and run an LLM-as-a-Judge evaluation that scores responses on completeness, correctness, token usage, and latency. Together these two views give you what you need to iterate on your task descriptions and model pools after launch as you accumulate real meeting data.

Conclusion

The meeting app we built is a thin wrapper around a genuinely powerful idea. You do not have to choose which model handles a request, you just have to describe the conditions under which each model makes sense and let the router enforce those conditions at runtime. The router does not just save money on tokens. It changes how you think about designing for complexity. Instead of building one prompt that works adequately for everything, you build narrow, well-described task buckets and let semantic matching handle the dispatch.

The broader lesson here extends well beyond meetings and emails. The same pattern applies anywhere you have a mix of requests hitting a single endpoint. This could include a customer support queue where most tickets are simple FAQs but a few require nuanced reasoning, a code review pipeline where style fixes and architecture feedback warrant very different models, or a legal document classifier where boilerplate and novel clauses should not cost the same to process. Once you have written a router description and a pair of task definitions, you have infrastructure that scales horizontally without adding branching logic to your application code. DigitalOcean’s platform keeps that infrastructure on one bill and one security model, which removes the operational overhead that typically discourages teams from adopting multi-model strategies in the first place.

Thanks for learning with the DigitalOcean Community. Check out our offerings for compute, storage, networking, and managed databases.

About the author

Andrew is an NLP Scientist with 8 years of experience designing and deploying enterprise AI applications and language processing systems.

Still looking for an answer?

This textbox defaults to using Markdown to format your answer.

You can type !ref in this text area to quickly search our full set of tutorials, documentation & marketplace offerings and insert the link!

- Table of contents

- Key Takeaways

- How the Router Works with DigitalOcean

- Step 1 — Building the Router

- Step 2 — Building the App

- Step 3 — Deploying to DigitalOcean App Platform

- Conclusion

- Related Links

Deploy on DigitalOcean

Click below to sign up for DigitalOcean's virtual machines, Databases, and AIML products.

Become a contributor for community

Get paid to write technical tutorials and select a tech-focused charity to receive a matching donation.

DigitalOcean Documentation

Full documentation for every DigitalOcean product.

Resources for startups and AI-native businesses

The Wave has everything you need to know about building a business, from raising funding to marketing your product.

Get our newsletter

Stay up to date by signing up for DigitalOcean’s Infrastructure as a Newsletter.

New accounts only. By submitting your email you agree to our Privacy Policy

The developer cloud

Scale up as you grow — whether you're running one virtual machine or ten thousand.

Start building today

From GPU-powered inference and Kubernetes to managed databases and storage, get everything you need to build, scale, and deploy intelligent applications.