
By Adrien Payong and Shaoni Mukherjee

As AI applications scale, more teams are relying on agents: autonomous software components that can think, plan, act, and interact with other agents. When designing large-scale multi-agent systems, architects rely on two families of protocols that address distinct interoperability problems. The Agent-to-Agent (A2A) protocol defines how one agent communicates with another. The Model Context Protocol (MCP) standardizes how an AI application or agent connects to tools, resources, prompts, APIs, and other external systems. Understanding the distinction between the two will help you build secure, reliable, and scalable agent-based systems.

In this article, we’ll examine both protocols. We’ll define them, compare them, highlight their security considerations, list their pros and cons, and discuss a real-world workflow that uses them. By the end, you’ll know when to use each protocol and how to leverage them together.

Key Takeaways

- Different layers, complementary roles: Think of A2A as solving inter-agent communication (delegation, discovery, streaming), while MCP solves agent/app-to-tool grounding and context integration. The two are complementary, and many systems will want both.

- Choose A2A for multi-agent orchestration: Consider A2A if you want to orchestrate multiple autonomous agents that coordinate as peers, preserve agent boundaries, and execute tasks asynchronously. It suits complex workflows such as a research assistant, a customer support swarm, or a planning pipeline.

- Choose MCP for tool integration: Use MCP when your AI model or agent needs to access external data, tools, or prompts in a consistent way. MCP is a drop‑in layer for IDE assistants, chatbots, and other host applications.

- Layer protocols thoughtfully: Many architectures layer the protocols. For example, a planner agent might reach out to other agents via A2A, and those agents in turn use MCP to call tools. Separating tool integration from agent orchestration logic can make your system more secure and easier to maintain.

- Mind the security context: Keep in mind that because A2A and MCP operate at different layers, each will have distinct security implications. Limit privileges, authenticate properly, validate inputs, and audit operations.

What Is A2A (Agent‑to‑Agent)?

A2A is an open standard that defines how agents should communicate with one another. Its goals include capability discovery, task delegation, modality negotiation, and secure information sharing. It enables autonomous agents to communicate while preserving privacy and proprietary internal processes.

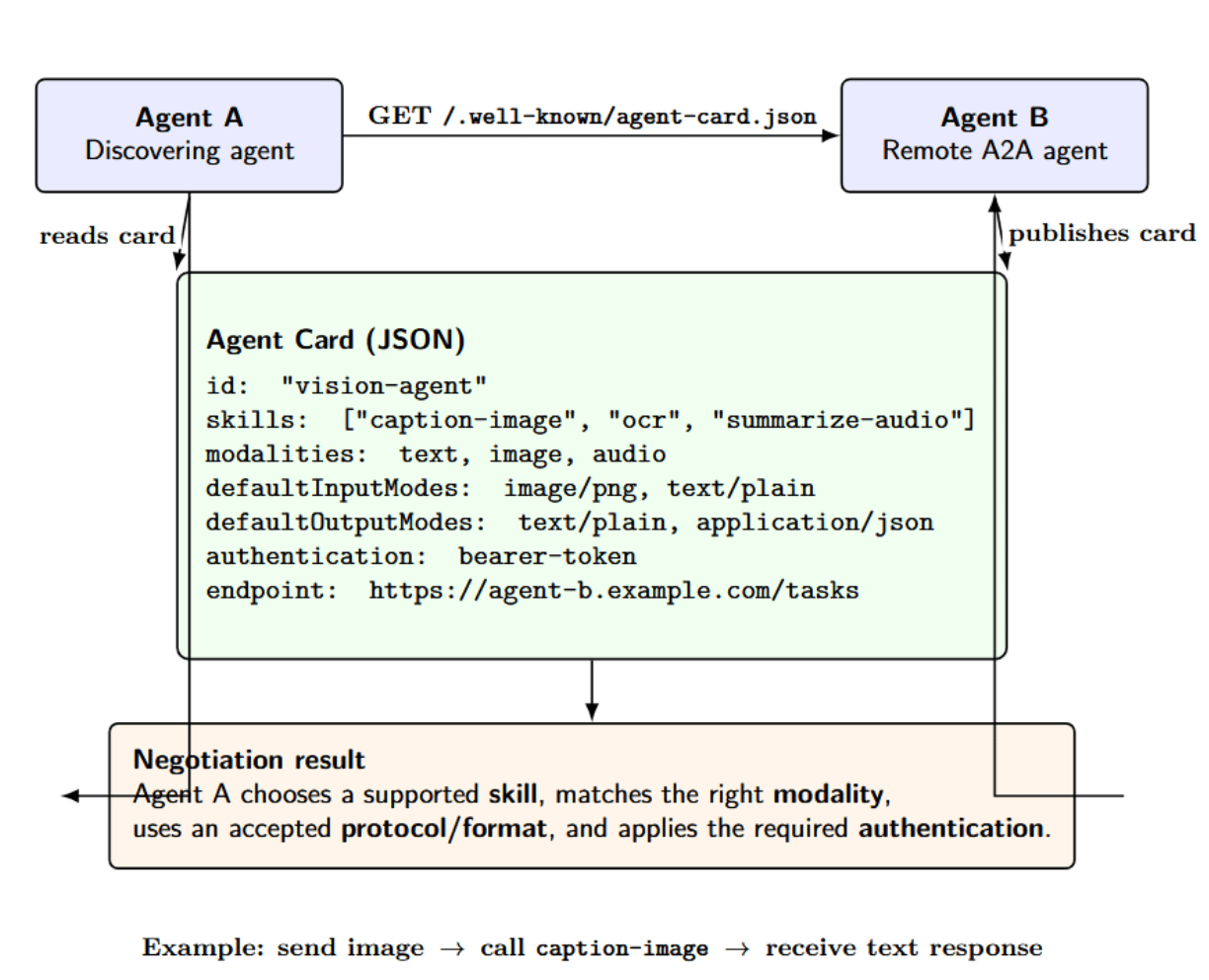

Agents that support A2A publish an agent card (a structured descriptor) advertising what capabilities, modalities, and endpoints they support. Other agents can discover cards and delegate tasks without understanding how the target agent is implemented internally. Primary goals of A2A include:

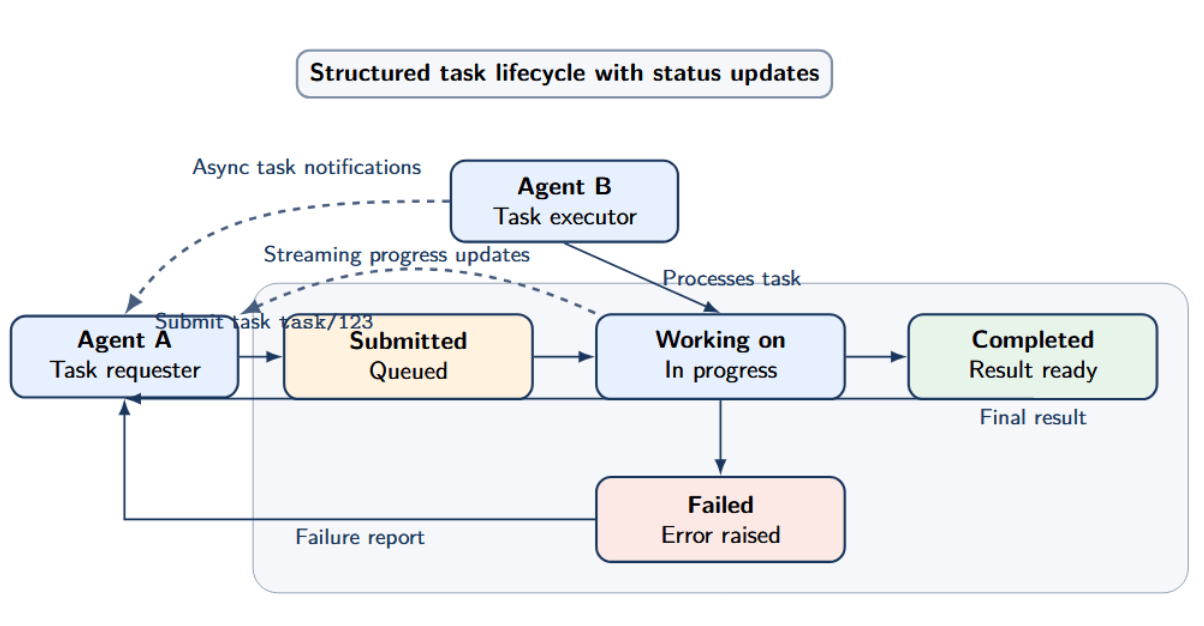

1. Task-centric communication: Agents communicate using structured tasks with well-defined lifecycle states (for example, submitted, working, completed, failed). For long-running tasks, agents can stream updates or send asynchronous notifications.

2. Discovery and negotiation: Agents publish agent cards at well-known locations such as /.well-known/agent-card.json. These cards describe the actions an agent supports, the modalities it accepts and produces (text, images, audio), and the protocols it accepts.

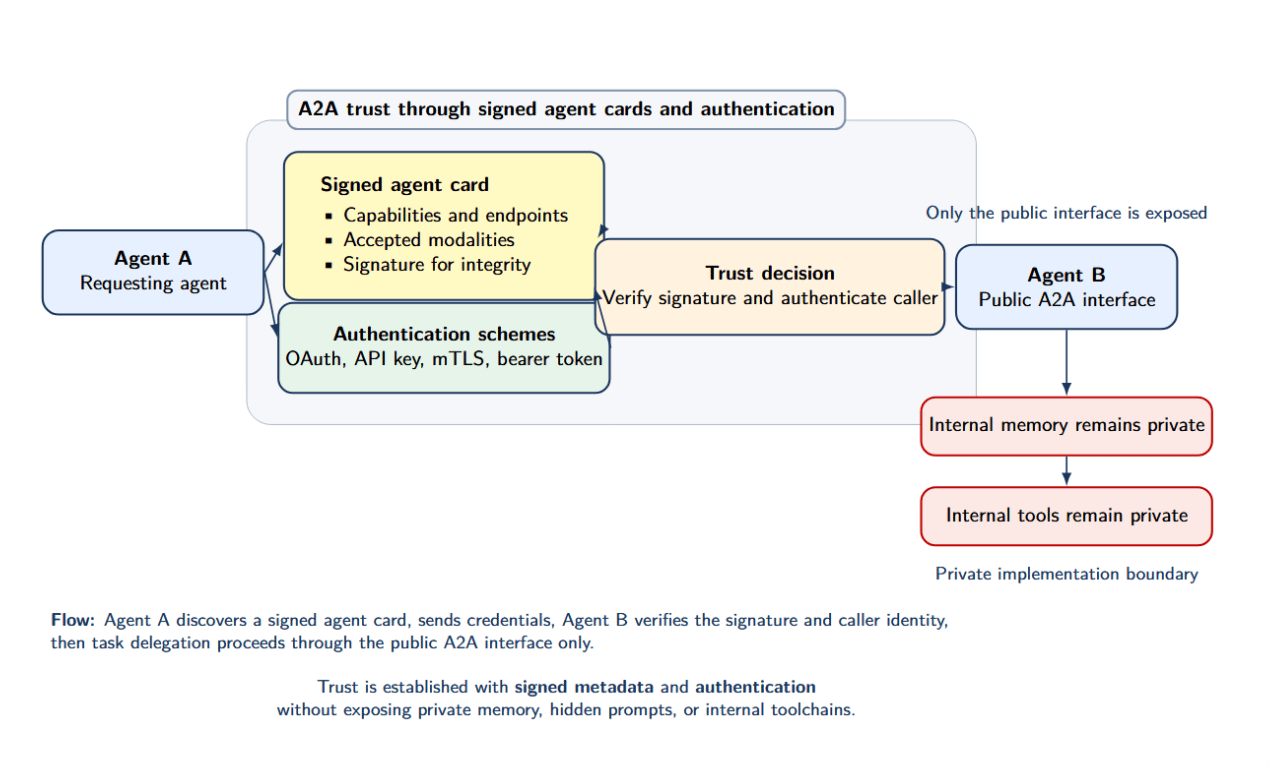

3. Security and authentication: A2A can support signed agent cards and use multiple authentication schemes. This enables agents to trust each other without having access to internal memory or tools.

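4. Layered design: A2A runs over standard web transports: HTTP(S) carrying JSON-RPC 2.0 messages, with Server-Sent Events (SSE) for streaming. Because it builds on these common layers, it can work alongside other protocols such as MCP.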
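To make discovery concrete, here is a minimal standard-library sketch. It builds the well-known card URL from an agent's base URL, fetches and parses the card, and looks up skills by tag. The example.com URLs, the card contents, and the helper names are hypothetical, and the card is abbreviated to a few of the fields A2A cards carry. This helper places the card at the domain root, following the well-known URI convention; some servers, including the ADK-based one used later in this article, publish a card under each agent's own path instead.

```python
import json
from urllib.parse import urljoin
from urllib.request import urlopen

# Well-known path for agent cards (older A2A releases used /.well-known/agent.json).
AGENT_CARD_PATH = "/.well-known/agent-card.json"


def agent_card_url(base_url: str) -> str:
    """Return the agent card URL for the host that serves base_url."""
    # The leading slash in AGENT_CARD_PATH makes urljoin replace the whole path.
    return urljoin(base_url, AGENT_CARD_PATH)


def fetch_agent_card(base_url: str, timeout: float = 5.0) -> dict:
    """Download and parse a remote agent's card with a plain HTTP GET (no auth)."""
    with urlopen(agent_card_url(base_url), timeout=timeout) as resp:
        return json.load(resp)


def skills_with_tag(card: dict, tag: str) -> list:
    """Return the IDs of the card's skills that carry the given tag."""
    return [s["id"] for s in card.get("skills", []) if tag in s.get("tags", [])]


# Abbreviated, hypothetical card; real cards also declare security schemes and more.
example_card = {
    "name": "Prime Checker",
    "description": "Checks whether numbers are prime",
    "url": "https://agents.example.com/a2a/prime",
    "version": "1.0.0",
    "capabilities": {"streaming": True, "pushNotifications": False},
    "defaultInputModes": ["text/plain"],
    "defaultOutputModes": ["text/plain"],
    "skills": [
        {
            "id": "check_prime",
            "name": "Check prime",
            "description": "Reports whether each given integer is prime",
            "tags": ["math", "primes"],
        }
    ],
}

print(agent_card_url(example_card["url"]))  # https://agents.example.com/.well-known/agent-card.json
print(skills_with_tag(example_card, "primes"))  # ['check_prime']
```

A client would normally cache the fetched card and re-check it periodically rather than downloading it before every task.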

Key A2A Concepts

- Agent Card: An agent card is a JSON document that describes an agent's identity, supported modalities (text, image, audio), skills, authentication schemes, endpoints, and so on.

- Task: The fundamental unit of work. A client agent submits a task with descriptive metadata (e.g., required inputs, expected outputs), and the server agent executes it, responding with status updates and final results. Tasks may be synchronous (a single response) or asynchronous (streaming, polling, or push notifications).

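- Capabilities: Capabilities describe functions an agent can perform, like “translate text”, “generate image”, or “summarize document”. The A2A specification lists these on the agent card as skills, each with an ID, name, description, and tags, while a separate capabilities field flags protocol features such as streaming and push notifications. The card also states which authentication schemes callers must use.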

- Task states: Agents report each task's state using standardized values, which enables robust orchestration and error handling. The A2A specification defines states such as submitted, working, input-required, completed, canceled, and failed.
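On the client side, the task lifecycle can be tracked with a small state record. In this sketch the state strings are ones the A2A specification uses (the enum is not exhaustive), but the TaskTracker class is hypothetical and simplified: it records each update and refuses new ones after a terminal state, rather than enforcing every legal transition.

```python
from enum import Enum


class TaskState(str, Enum):
    """Task states used by the A2A specification (not an exhaustive list)."""

    SUBMITTED = "submitted"
    WORKING = "working"
    INPUT_REQUIRED = "input-required"
    COMPLETED = "completed"
    CANCELED = "canceled"
    FAILED = "failed"
    REJECTED = "rejected"


# After one of these states, the task receives no further updates.
TERMINAL_STATES = {TaskState.COMPLETED, TaskState.CANCELED, TaskState.FAILED, TaskState.REJECTED}


class TaskTracker:
    """Hypothetical client-side record of a delegated task's status updates."""

    def __init__(self, task_id: str):
        self.task_id = task_id
        self.history = [TaskState.SUBMITTED]

    @property
    def state(self) -> TaskState:
        return self.history[-1]

    @property
    def done(self) -> bool:
        return self.state in TERMINAL_STATES

    def update(self, wire_state: str) -> None:
        """Record a state string received from the remote agent."""
        if self.done:
            raise ValueError(f"task {self.task_id} already ended as {self.state.value}")
        # TaskState(...) raises ValueError for strings this sketch does not know.
        self.history.append(TaskState(wire_state))


tracker = TaskTracker("task-123")
for wire_state in ["working", "input-required", "working", "completed"]:
    tracker.update(wire_state)
print(tracker.state.value, tracker.done)  # completed True
```

Keeping the full history, not just the latest state, is what makes the audit and retry logic discussed later straightforward.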

Why A2A Exists

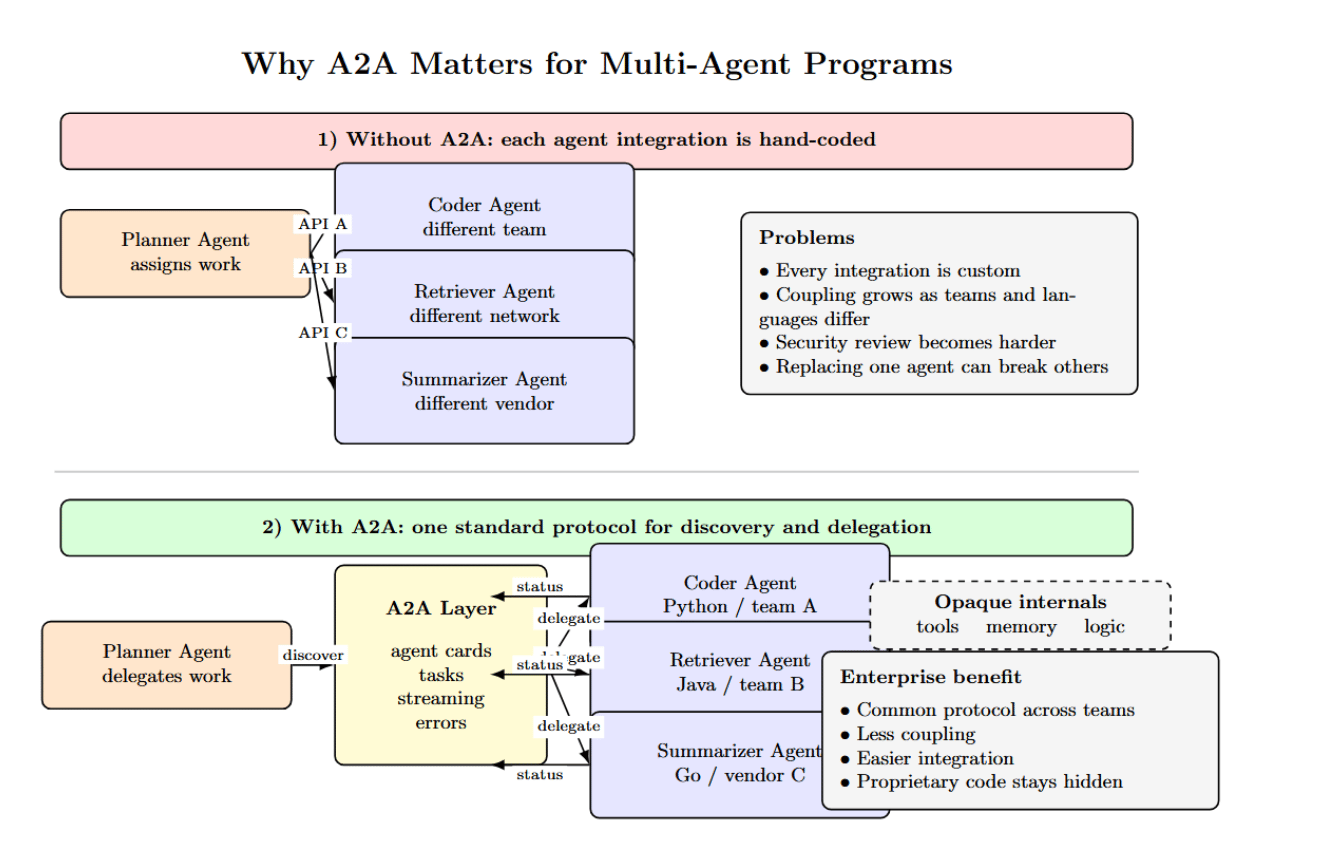

Single-agent programs naturally call functions internally, but monolithic programs become brittle as organizations scale. Software teams eventually want to split duties: a planner agent assigns tasks to a coder agent, a retriever agent, and a summarization agent. These agents may reside on separate networks, use different programming languages, or be managed by distinct teams or vendors. Without a shared standard such as A2A, each agent must hard-code the APIs of the agents it calls, which leads to tight coupling and security concerns.

A2A aims to fill this gap by standardizing how agents announce themselves, negotiate tasks, stream outputs, and handle errors in a framework-agnostic and language-neutral manner. A2A is particularly well-suited to enterprise deployment where teams or different vendors want to design their own custom agents with proprietary internal code.

What Is MCP (Model Context Protocol)?

The Model Context Protocol is an open standard that allows AI applications or agents to access external tools and data. While autonomous agents communicate with each other via A2A, MCP gives AI models standard access to tools, resources, and prompts. The MCP specification defines a host‑client‑server model. At a high level:

- Host: The application that owns the end-user experience and connects to the AI model. Example hosts include IDE plugins, chat interfaces, and agent orchestrators.

- MCP Client: A component inside the host that communicates with an MCP server. It manages details like authentication, transport specifics, session state, and message framing. A host that talks to several servers runs one client per server.

- MCP Server: A service that exposes capabilities (tools, resources, prompts). It might be a local process (e.g., a Python server started by the host) or a remote HTTP service.

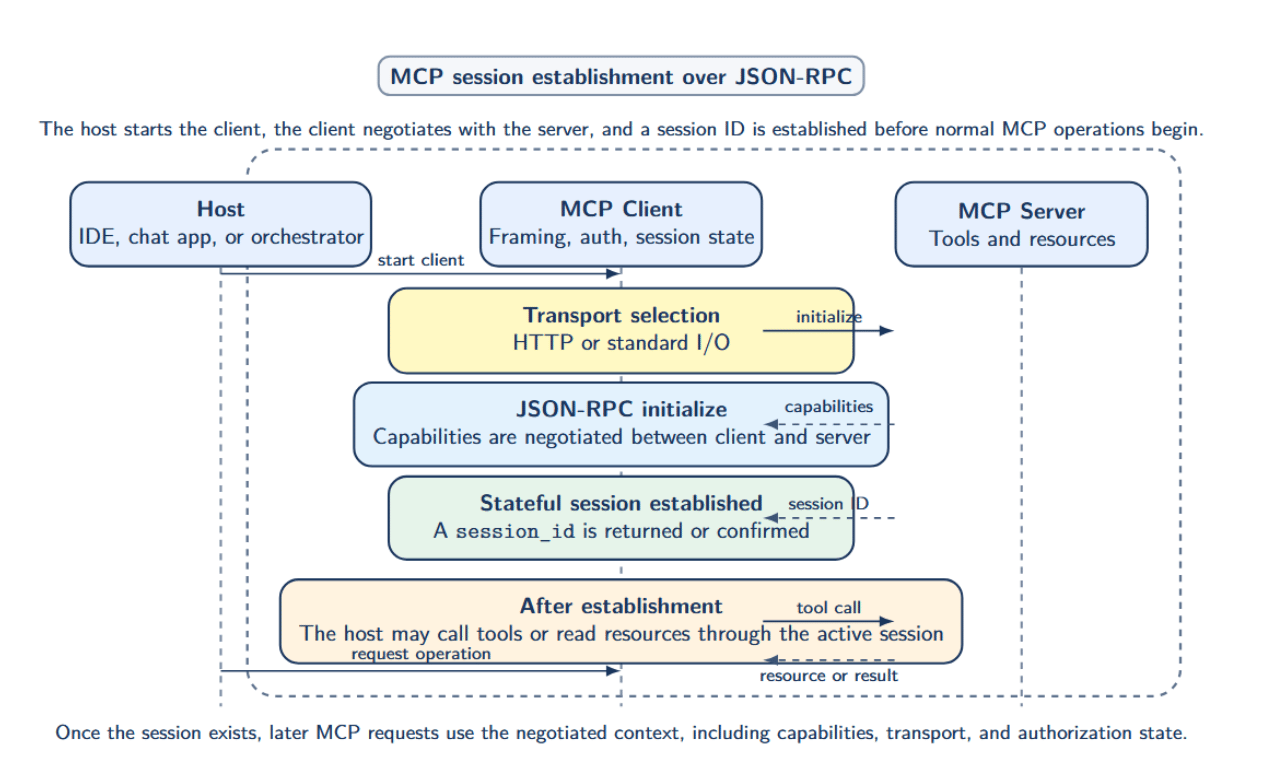

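MCP uses JSON-RPC 2.0 as its message format, carried over transports such as standard I/O (stdio) for local servers and Streamable HTTP for remote ones, and it defines a stateful session lifecycle.

When a client connects, it sends an initialize request declaring the protocol version it supports, its capabilities, and its identity. The server replies with its own capabilities, and the client confirms with an initialized notification; over Streamable HTTP, the server may also assign a session ID at this point. The transport is chosen before this handshake rather than negotiated in it. After initialization, the host may call tools or read resources.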
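The handshake can be illustrated by building the JSON-RPC messages directly, without an SDK. This is a sketch: the client name and version are placeholders, 2025-06-18 is one published protocol revision (use whichever your SDK supports), and the server's reply is omitted.

```python
import itertools
import json
from typing import Optional

_next_id = itertools.count(1)  # request IDs must be unique within a session


def request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request; the peer answers with a response carrying the same id."""
    msg = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def notification(method: str) -> dict:
    """Build a JSON-RPC 2.0 notification: no id, so no response is expected."""
    return {"jsonrpc": "2.0", "method": method}


# 1. Client -> server: protocol version, client capabilities, and client identity.
init = request("initialize", {
    "protocolVersion": "2025-06-18",
    "capabilities": {},  # declare optional client features here, if any
    "clientInfo": {"name": "example-host", "version": "0.1.0"},
})

# 2. Server -> client (not shown): a result listing the server's capabilities,
#    for example {"tools": {}, "resources": {}, "prompts": {}}.

# 3. Client -> server: initialization is complete; normal requests may follow.
initialized = notification("notifications/initialized")

# Over stdio, each message is written as a single line of JSON.
for msg in (init, initialized):
    print(json.dumps(msg))
```

In practice an MCP SDK generates these messages for you; seeing them raw mainly helps when debugging a transport or reading server logs.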

Core MCP Primitives

- Tool: A function or operation that the client can call. Each tool has a name, a description, and a JSON Schema describing its input (and, optionally, its output). Tools might implement everything from database queries to filesystem operations, search APIs, and workflows. When a tool is invoked over MCP, the client sends arguments conforming to the input schema; the server executes the operation and returns results or an error.

- Resource: A piece of data that the server makes available, identified by a URI. Resources could be a file, a schema, a document, or an application's own custom knowledge base. The client can list resources and read them as text or binary (base64-encoded) content, and it can subscribe to a resource to be notified when it changes.

- Prompt: A reusable text template that the host can fetch from the server, fill in with arguments, and use to direct the AI model. Serving prompts dynamically lets a server supply domain-specific instructions or query templates on demand.

- Authorization: For HTTP-based transports, MCP authorization is based on OAuth 2.1, with standardized discovery of authorization server metadata. Servers using the standard I/O transport (such as local servers launched as a subprocess) are expected to obtain credentials from their environment instead. These rules help prevent servers from acting on behalf of the user without permission or making arbitrary requests.

- Session: The interaction between an MCP client and server occurs within a session, which begins with initialization and carries the negotiated protocol version and capabilities. Sessions keep state consistent across multiple calls, for example active resource subscriptions.
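The following sketch shows a tool definition as a server might advertise it, plus the request shapes for calling a tool, reading a resource, and fetching a prompt. The tool, resource URI, and prompt name are hypothetical, and check_arguments validates only required keys and top-level types; a real client or server would use a full JSON Schema validator.

```python
# Tool definition as it could appear in a tools/list result (hypothetical tool).
get_balance_tool = {
    "name": "get_customer_balance",
    "description": "Return the customer's current balance.",
    "inputSchema": {
        "type": "object",
        "properties": {"customer_id": {"type": "string"}},
        "required": ["customer_id"],
    },
}

_JSON_TYPES = {"string": str, "integer": int, "number": (int, float),
               "boolean": bool, "object": dict, "array": list}


def check_arguments(tool: dict, args: dict) -> list:
    """Tiny JSON Schema subset: required keys and top-level property types only."""
    schema = tool["inputSchema"]
    errors = [f"missing '{key}'" for key in schema.get("required", []) if key not in args]
    for key, value in args.items():
        expected = schema.get("properties", {}).get(key, {}).get("type")
        if expected not in _JSON_TYPES:
            continue  # untyped or unsupported type: skip in this sketch
        # bool is a subclass of int in Python, but JSON booleans are not numbers.
        bool_as_number = isinstance(value, bool) and expected in ("integer", "number")
        if bool_as_number or not isinstance(value, _JSON_TYPES[expected]):
            errors.append(f"'{key}' should be {expected}")
    return errors


def rpc(req_id: int, method: str, params: dict) -> dict:
    """Build a JSON-RPC 2.0 request envelope."""
    return {"jsonrpc": "2.0", "id": req_id, "method": method, "params": params}


args = {"customer_id": "c-42"}
call_tool = rpc(2, "tools/call", {"name": "get_customer_balance", "arguments": args})
read_resource = rpc(3, "resources/read", {"uri": "file:///docs/refund-policy.md"})
get_prompt = rpc(4, "prompts/get", {"name": "summarize_ticket", "arguments": {"ticket_id": "T-7"}})

print(check_arguments(get_balance_tool, args))  # []
print(check_arguments(get_balance_tool, {"customer_id": 42}))  # ["'customer_id' should be string"]
```

Validating arguments before sending tools/call lets the host catch a malformed model output locally instead of round-tripping an error from the server.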

Why MCP Exists

AI models need context to return helpful answers: local files, remote APIs, database records, or customized prompts. Without a standard, developers must build a custom connector for every pairing of model and data source, or hard-code environment-specific logic into the model interface. MCP decouples the model from external data and tool access: tools and resources exposed by an MCP server can be consumed in the same way by any compliant host. Because they share the same capability definitions, agents built on different models or frameworks can reuse the same integrations.

Key Differences Between A2A and MCP

While both protocols support AI agent workflows, they operate at different layers:

| Dimension | A2A | MCP |
|---|---|---|
| Primary purpose | Agent-to-agent communication, discovery, delegation, streaming | Agent/app-to-tool and context communication |
| Main actors | Two (or more) autonomous agents | Host, MCP client, MCP server |
| Core unit | Task (work assignment with lifecycle) | Tool, resource, prompt |
| Discovery model | Agent cards and capability discovery | Capability negotiation plus tool/resource listing |
| Typical use case | Delegating work to specialist agents | Exposing APIs, files, DBs, workflows to models |
| Communication style | Messaging, streaming, asynchronous task updates | JSON-RPC sessions, tool calls, resource reads |
| Internal opacity | Strongly preserves agent boundaries; no access to internal memory/tools unless explicitly exposed | Focuses on structured capability exposure; internal state not specified |
| Best fit | Multi-agent orchestration, inter-agent coordination | Tool interoperability, context plumbing, agent integration |

Use A2A if you have multiple agents that need to work together as peers. Utilize MCP when you have an AI application or agent that needs standardized access to tools and external data. Use them together when architecting a complex multi‑agent solution that requires both delegation and tool access patterns. (An agent using A2A may delegate work to other specialized agents that use MCP to call tools and fetch resources.)

Real‑World Agent Workflow

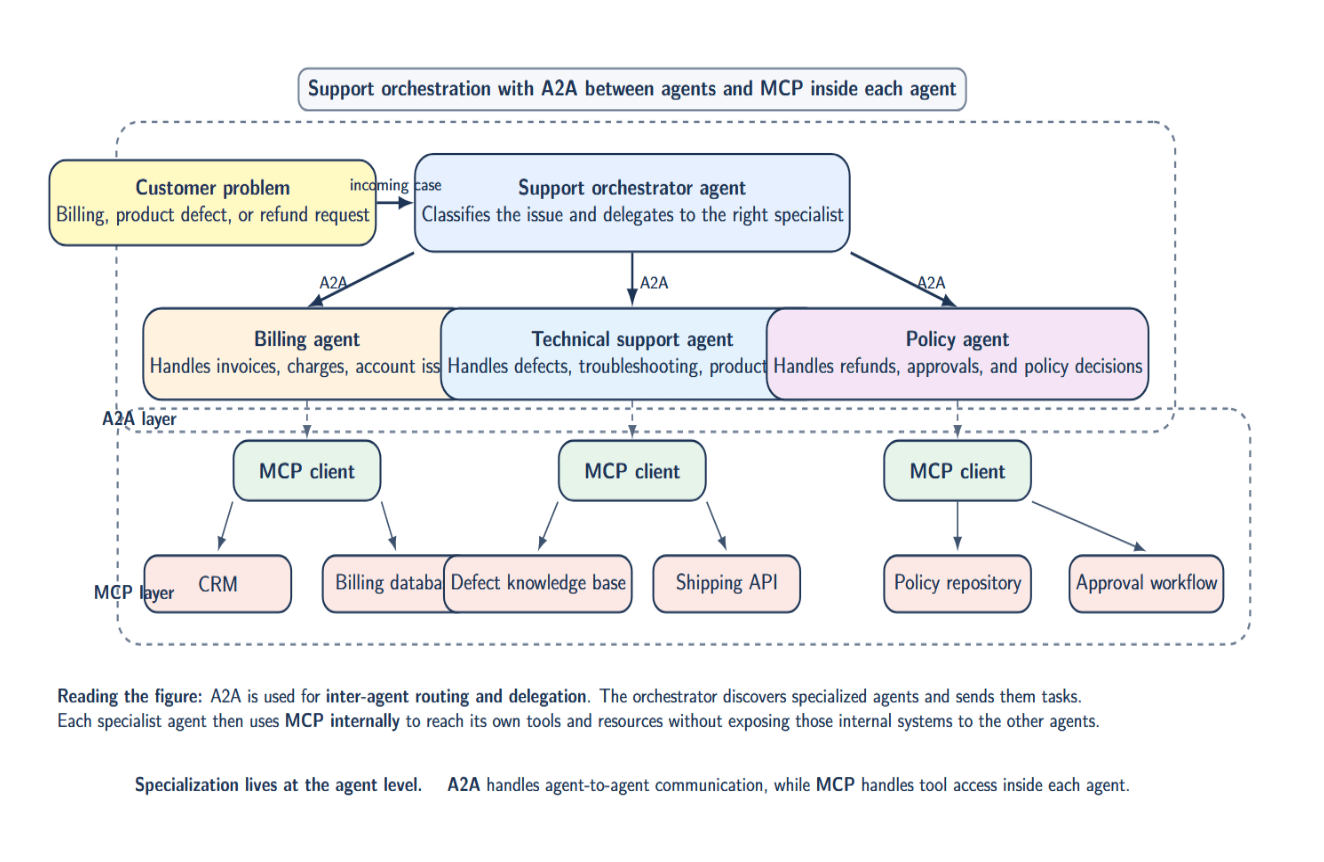

Suppose a support orchestrator agent receives a customer issue.

It assigns billing queries to a billing agent, issues with product defects to a technical support agent, and refund approvals to a policy agent. The orchestrator discovers and communicates with these agents using A2A, and they may use MCP internally to call their CRM, policy repository, or shipping API. The specialization is at the agent level. A2A routes inter‑agent communication; MCP handles tool access.
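The routing decision in this workflow is where A2A ends and each specialist's MCP usage begins. This hypothetical sketch keeps both layers visible: the orchestrator maps an issue category to a specialist agent, and each specialist lists the MCP tools it would call. The agent names, categories, and tool names are illustrative, and the actual A2A and MCP calls are omitted.

```python
from dataclasses import dataclass, field


@dataclass
class Specialist:
    """Stand-in for a remote agent reached over A2A (hypothetical)."""

    name: str
    mcp_tools: list = field(default_factory=list)  # tools it would call via MCP


# Hypothetical routing table: issue category -> specialist agent.
ROUTES = {
    "billing": Specialist("billing_agent", ["get_invoice", "get_customer_balance"]),
    "defect": Specialist("tech_support_agent", ["track_shipment"]),
    "refund": Specialist("policy_agent", ["lookup_refund_policy"]),
}


def route(issue: dict) -> Specialist:
    """Orchestrator side (A2A layer): choose which agent receives the task."""
    category = issue.get("category")
    if category not in ROUTES:
        raise ValueError(f"no specialist for category {category!r}")
    return ROUTES[category]


issue = {"id": "ISSUE-1", "category": "refund", "text": "Item arrived broken"}
agent = route(issue)
# In a real system, the orchestrator would send an A2A task to agent.name,
# and that agent would call its own MCP tools to resolve it.
print(agent.name, agent.mcp_tools)  # policy_agent ['lookup_refund_policy']
```

The orchestrator never sees the specialists' tools directly; it only knows which agent to ask, which is the boundary A2A is designed to preserve.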

A2A vs MCP in Python

People commonly search for “A2A vs MCP in Python,” trying to find real-world code examples. Here are simplified patterns.

Minimal MCP server in Python

```python
from mcp.server.fastmcp import FastMCP

# 1. Instantiate a FastMCP server with a name
mcp = FastMCP("my_app")


# 2. Expose a tool via MCP; the type hints become the tool's input schema
@mcp.tool()
def get_customer_balance(customer_id: str) -> str:
    """Return the customer's current balance."""
    # In practice, fetch from a database or API
    return "Balance: $120"


if __name__ == "__main__":
    # 3. Start the MCP server on the stdio transport (FastMCP's default)
    mcp.run(transport="stdio")
```

With the FastMCP class, you can declare tools using decorators. The docstring for each tool becomes its description, and its type hints define the input schema. When the host calls get_customer_balance through an MCP client, the server runs the function and returns its result in a structured JSON-RPC response.

Conceptual A2A pattern in Python

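```python
# 1. Expose the specialist agent via A2A from a shell. The directory is a
#    placeholder assumed to contain a check_prime_agent package:
#    adk api_server --a2a --port 8001 path/to/agents_dir

# 2. Point a RemoteA2aAgent at the specialist's agent card
from google.adk.agents import Agent
from google.adk.agents.remote_a2a_agent import (
    AGENT_CARD_WELL_KNOWN_PATH,
    RemoteA2aAgent,
)

prime_checker = RemoteA2aAgent(
    name="prime_checker",
    description="Checks whether a number is prime",
    # Card URL = the agent's A2A URL (assumed reachable here) + the well-known path
    agent_card=f"https://specialist.company.com/a2a/check_prime_agent{AGENT_CARD_WELL_KNOWN_PATH}",
)

# 3. Delegate: a local root agent hands prime checks to the remote agent.
#    ADK performs the A2A task exchange when the model transfers control.
root_agent = Agent(
    name="root_agent",
    model="gemini-2.0-flash",  # example model name
    instruction="Delegate any prime-number question to prime_checker.",
    sub_agents=[prime_checker],
)

# 4. Run root_agent with ADK tooling (for example, `adk web`) and ask
#    "Is 47 prime?"; the remote agent's answer comes back as agent events.
```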

The above code:

- Uses RemoteA2aAgent as in the ADK‑Python A2A quickstart, with an explicit agent_card URL built from the well-known card path.

- Registers the remote agent as a sub-agent of a local root agent instead of calling it directly; ADK sends the delegated work to it as an A2A task.

- Exposes a specialist agent via A2A, discovers it via its agent card, and delegates a “check prime”‑style task.

Security Considerations

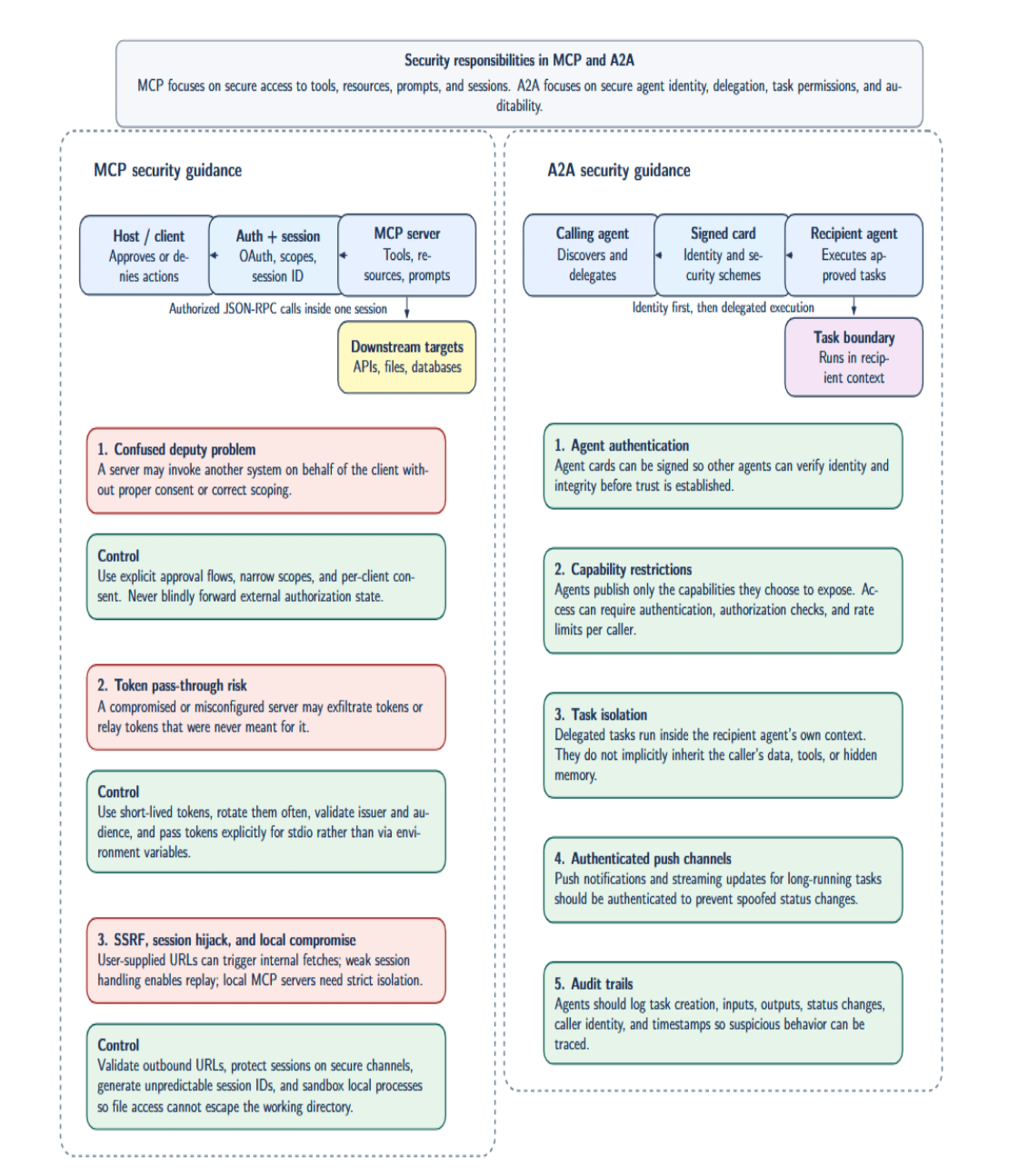

Security is where conflating A2A and MCP is most dangerous. Because they operate at different layers, their attack surfaces differ.

MCP Security

MCP’s security guidance points out risks like:

- Confused deputy problem: An MCP server might invoke another system on behalf of the client without proper consent or scoping. Servers should implement scopes and approval flows. Clients should limit privileges and should not blindly forward tokens.

- Token pass-through risks: An MCP server should accept only tokens that were issued for it and should not forward a client's token unchanged to downstream APIs. Passing tokens through bypasses audience checks, and a compromised server could reuse them elsewhere. Prefer short-lived, narrowly scoped tokens that are rotated frequently.

- Server‑side request forgery (SSRF): If your client application passes user‑supplied URLs to an MCP server, the server may inadvertently fetch resources from internal-only systems. To mitigate this risk, servers should validate inputs and enforce strict controls on outbound network requests.

- Session hijacking and replay attacks: MCP is session-based, so session IDs should come from a cryptographically secure random source, travel only over HTTPS, and never serve as the sole proof of a caller's identity.

- Local server compromise: If hosts spawn local MCP servers (for example, a script that calls mcp.run(transport="stdio")), they should sandbox that process, for instance by confining its file I/O to an allowed working directory.

- Human-in-the-loop: The MCP specification recommends keeping a human in the loop who can approve or deny tool invocations, which matters most for sensitive operations such as sending an email or making a purchase.
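For the SSRF risk above, one common mitigation is to validate every user-supplied URL before the server fetches it. This standard-library sketch accepts only http and https URLs whose host resolves exclusively to publicly routable addresses. The function name is hypothetical, and the policy is an example, not something the MCP specification prescribes.

```python
import ipaddress
import socket
from urllib.parse import urlsplit


def is_safe_outbound_url(url: str) -> bool:
    """Allow only http(s) URLs whose host resolves solely to public IP addresses."""
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https") or not parts.hostname:
        return False
    try:
        # .port raises ValueError for malformed ports, so read it inside the try.
        infos = socket.getaddrinfo(parts.hostname, parts.port, type=socket.SOCK_STREAM)
    except (socket.gaierror, ValueError):
        return False
    for *_, sockaddr in infos:
        # Strip any IPv6 zone suffix such as "%eth0" before parsing.
        ip = ipaddress.ip_address(sockaddr[0].split("%", 1)[0])
        # Rejects non-public ranges such as loopback, private, and link-local
        # (the latter includes cloud metadata addresses like 169.254.169.254).
        if not ip.is_global or ip.is_multicast:
            return False
    return True


for candidate in [
    "https://8.8.8.8/",
    "http://127.0.0.1/admin",
    "http://169.254.169.254/latest/meta-data/",
    "file:///etc/passwd",
]:
    print(candidate, is_safe_outbound_url(candidate))
```

Checking and fetching separately leaves a window for DNS rebinding, where a hostname resolves to a public address during validation and a private one at fetch time. Connecting to the validated IP address directly, or routing all outbound traffic through an egress proxy that enforces the same policy, closes that gap.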

A2A Security

A2A secures communication by focusing on agent identity and task permissions:

- Agent authentication: Agents can sign their agent cards (e.g., with JSON Web Signatures) so that others can verify their identity and the card's integrity.

- Capability restrictions: Agents only publish the capabilities they want to expose. Capabilities can require authentication and rate-limiting per client.

- Task isolation: Delegated tasks run within the recipient agent's context and give no implicit access to the caller's data or tools. Agents decide which tasks they accept and can reject or cancel them.

- Push vs pull: Instead of polling, A2A clients can receive push notifications about the status of long-running tasks. Push notification channels should be authenticated to prevent spoofed updates.

- Audit trails: Agents should log tasks, inputs, outputs, and status transitions, including metadata (e.g., caller ID, timestamps) for traceability. Without proper logging, malicious tasks may go undetected.
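An audit trail can be as simple as one JSON line per task event. In this sketch, the AuditLog class and its field names (task_id, caller_id, event, and so on) are hypothetical; A2A does not prescribe a log format. Each record carries a UTC timestamp and a unique event ID so entries can be correlated later.

```python
import json
import uuid
from datetime import datetime, timezone
from typing import Optional


class AuditLog:
    """Append-only JSON-lines audit trail for delegated tasks (hypothetical format)."""

    def __init__(self, path: Optional[str] = None):
        self.path = path  # optional file to append to
        self.lines = []   # in-memory copy of every record

    def record(self, task_id: str, caller_id: str, event: str, **details) -> dict:
        entry = {
            "event_id": str(uuid.uuid4()),
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "task_id": task_id,
            "caller_id": caller_id,
            "event": event,
            **details,
        }
        line = json.dumps(entry, sort_keys=True)
        self.lines.append(line)
        if self.path:
            with open(self.path, "a", encoding="utf-8") as f:
                f.write(line + "\n")
        return entry


log = AuditLog()
log.record("task-123", "planner-agent", "received", inputs={"nums": [47]})
log.record("task-123", "planner-agent", "status", from_state="submitted", to_state="working")
log.record("task-123", "planner-agent", "status", from_state="working", to_state="completed",
           output={"is_prime": True})
print(len(log.lines))  # 3
```

If task inputs or outputs may contain sensitive data, consider logging a hash or a redacted summary instead of the raw values.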

Pros and Cons of A2A and MCP

The following table summarizes the key pros and cons of A2A and MCP, including where teams will face trade-offs in architecture, scalability, and security.

| Protocol | Advantages | Disadvantages |
|---|---|---|
| A2A | Enables rich collaboration between autonomous agents without custom glue code. Scales across platforms because new agents can connect through standard JSON task exchanges. Uses established web standards such as HTTPS, JSON, and SSE, which makes enterprise integration easier. Supports real-time progress updates and streaming for long-running workflows. | Adds architectural complexity because each agent may need to run as a server. Cross-network calls can introduce more latency than local tool execution. Data freshness may depend on the remote agent rather than direct source access. Security management is more involved because agents must authenticate and exchange sensitive credentials. Adoption is still relatively early compared with more established integration patterns. |
| MCP | Simplifies tool integration by standardizing LLM-to-tool communication. Benefits from a growing ecosystem of MCP-compatible tools and servers. Improves robustness through structured schemas that reduce malformed or ambiguous tool calls. Remains vendor-neutral, helping teams avoid lock-in to a single LLM provider or backend. | Does not natively handle multi-agent coordination or task routing between agents. Legacy systems may require wrappers before they can participate in MCP workflows. For very simple lookups, MCP can feel heavier than calling an API directly. The protocol continues to evolve, so teams must watch for changes in implementations and versions. |

FAQ SECTION

What is the difference between A2A and MCP?

A2A standardizes agent-to-agent communications, including discovery, task delegation, asynchronous updates, and streaming. MCP standardizes how agents or applications call tools and access resources and prompts through a host‑client‑server model. A2A handles delegation; MCP handles capability integration.

Is MCP a replacement for agent‑to‑agent communication?

No. MCP is not intended to replace agent-to-agent communication; the two can be used together because they address different needs. MCP provides standardized tool and context integration, while A2A enables communication between peer agents. They sit at different layers of the overall agent stack.

Can A2A and MCP be used together?

Yes. In sufficiently complex systems, a “planner” agent typically delegates work to “specialist” agents using A2A, and those agents may then use MCP to call tools, read files, or retrieve prompts. Used together, they provide a clean separation between orchestration and tool integration.

Which is better for multi‑agent systems?

A2A is a much better fit for pure multi‑agent systems. It provides built-in discovery, task lifecycle management, asynchronous updates, and negotiated capabilities. MCP by itself provides none of those features. It is focused on calling tools and providing resources.

Does MCP handle agent memory?

Not directly. MCP defines how hosts call tools and read resources. You could expose a memory store (for example, a vector store) through an MCP server as resources or tools, but MCP does not standardize how memory or context should be stored. Long-term memory remains an application-level concern.

How do A2A systems scale?

A2A scales by decomposing work into coarse‑grained tasks and delegating them to agents that specialize in those tasks. Agent capabilities are published with agent cards, and specialized routing logic or “orchestrators” are responsible for routing tasks to available agents. You can horizontally scale by adding more agents, provided you scale your discovery services and manage trust relationships.
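A minimal form of that routing layer is a registry that indexes agent cards by skill and spreads tasks across the agents offering the same skill. This is a hypothetical in-memory sketch using round-robin selection; a production registry would also need health checks, card refresh, and verification of card signatures.

```python
import itertools
from collections import defaultdict


class SkillRegistry:
    """Hypothetical in-memory index from skill ID to agents whose cards offer it."""

    def __init__(self):
        self._agents = defaultdict(list)  # skill ID -> list of agent URLs
        self._rotation = {}               # skill ID -> round-robin iterator

    def register(self, card: dict) -> None:
        for skill in card.get("skills", []):
            self._agents[skill["id"]].append(card["url"])
            # Restart this skill's rotation so it includes the new agent.
            self._rotation.pop(skill["id"], None)

    def pick(self, skill_id: str) -> str:
        """Return the next agent URL for skill_id, cycling through providers."""
        if not self._agents.get(skill_id):
            raise LookupError(f"no agent offers {skill_id!r}")
        if skill_id not in self._rotation:
            self._rotation[skill_id] = itertools.cycle(list(self._agents[skill_id]))
        return next(self._rotation[skill_id])


registry = SkillRegistry()
for url in ["https://a.example.com/agent", "https://b.example.com/agent"]:
    registry.register({"url": url, "skills": [{"id": "summarize"}]})

picks = [registry.pick("summarize") for _ in range(4)]
print(picks)  # alternates between the two agent URLs
```

Adding capacity then amounts to registering another card that advertises the same skill; the trade-off is that the registry itself becomes a component you must scale and secure.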

What are the security risks of agent communication?

Risks include weak identity controls, over‑broad permissions, prompt injection, data exfiltration, SSRF, session hijacking, and malicious tasks. Both A2A and MCP require careful authentication, authorization, input validation, output filtering, and auditing to mitigate these risks. A2A adds its own concerns around task acceptance, identity of remote agents, and stream integrity.

Conclusion

A2A and MCP should not be viewed as competing standards; they address distinct problems within contemporary AI systems. A2A is the better fit for agent-to-agent delegation, coordination, and orchestration in multi-agent environments. MCP is the better fit for standardized access to tools, data, and external systems. Many robust AI architectures will use both: A2A for agents collaborating with other agents, and MCP for agents interacting securely with tools. Teams that clearly distinguish between these two concepts will be better equipped to build scalable, interoperable, and secure AI systems.

Thanks for learning with the DigitalOcean Community. Check out our offerings for compute, storage, networking, and managed databases.

About the author(s)

I am a skilled AI consultant and technical writer with over four years of experience. I have a master’s degree in AI and have written innovative articles that provide developers and researchers with actionable insights. As a thought leader, I specialize in simplifying complex AI concepts through practical content, positioning myself as a trusted voice in the tech community.

With a strong background in data science and over six years of experience, I am passionate about creating in-depth content on technologies. Currently focused on AI, machine learning, and GPU computing, working on topics ranging from deep learning frameworks to optimizing GPU-based workloads.
