By Justin L and Anish Singh Walia

Introduction

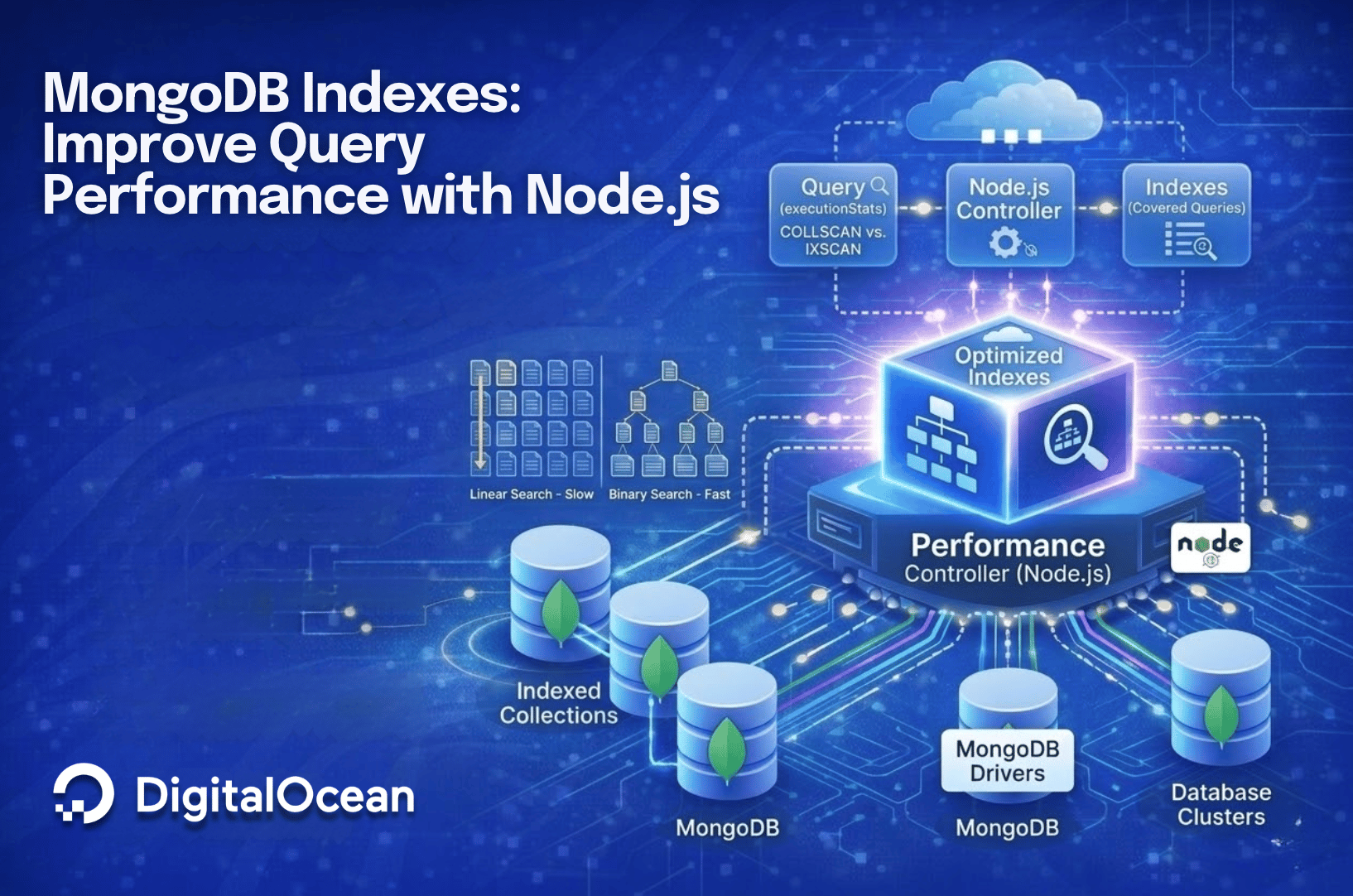

MongoDB indexes are data structures that store a subset of a collection’s data in an easy-to-traverse form. Without an index, MongoDB performs a collection scan (COLLSCAN) to find matching documents. This means the database reads every document in the collection, consuming significant CPU and I/O resources as the dataset grows.

Indexes let MongoDB locate documents without scanning the full collection. They work like the index at the back of a textbook: instead of reading every page, you look up a term and go directly to the right page.

In this tutorial, you will create and test MongoDB indexes using Node.js against a MongoDB Atlas cluster. You will provision a cluster with the Atlas CLI, load sample data, and compare query performance with and without indexes. By the end, you will understand how single field indexes, compound indexes, covered queries, and TTL indexes work in practice.

Key Takeaways

- MongoDB indexes prevent full collection scans by maintaining sorted references to documents, letting the database use binary search instead of linear search.

- Single field indexes speed up queries on one field but still require a FETCH stage for unindexed predicates.

- Compound indexes cover multiple query conditions and reduce the number of documents MongoDB needs to examine.

- Covered queries return results directly from the index without reading documents from disk, achieving zero document examinations.

- TTL (Time-To-Live) indexes automatically delete expired documents, making them ideal for session data, logs, and temporary records.

- The explain('executionStats') method shows you exactly how MongoDB executes a query, including the scan type (COLLSCAN vs. IXSCAN) and document counts.

Prerequisites

To follow this tutorial, you will need:

- A MongoDB Atlas Account: You can sign up for free at MongoDB Atlas.

- Node.js Installed: Ensure Node.js is installed on your development machine. You can follow the guide on How To Install Node.js.

- MongoDB Atlas CLI: Install the official CLI tool to manage your cluster from the terminal.

- macOS (Homebrew): brew install mongodb-atlas-cli

- Linux: Refer to the official installation instructions.

Step 1: Provisioning the Database

Instead of clicking through the web interface, we will use the Atlas CLI to create a cluster, secure it, and populate it with data. Open your terminal and follow these steps.

1. Authenticate

First, authenticate with your Atlas account. This will open a browser window for you to confirm the code:

atlas auth login

Select the Atlas Project created when signing up for a MongoDB Atlas account. You may be prompted for your preferred output format during the login process. Selecting “json” is a good default choice.

Output? Select authentication type: UserAccount

To verify your account, copy your one-time verification code:

XXXX-XXXX

Paste the code in the browser when prompted to activate your Atlas CLI. Your code will expire after 3 minutes.

To continue, go to [https://account.mongodb.com/account/connect](https://account.mongodb.com/account/connect)

Press Enter to open the browser and complete authentication...

Successfully logged in as <email>.

Select one default organization and one default project.

? Choose a default organization: <organizationId>

? Choose a default project: 11223344556677889900aabb

? Default Output Format: json

You have successfully configured your profile.

You can use [atlas config set] to change your profile settings later.

Copy and paste the project ID you select for later use. We’ll use 11223344556677889900aabb in our examples.

2. Create a Cluster

Create a free-tier (M0) cluster named IndexTestCluster. We will use AWS as the provider in the US East region (you can change the region if preferred).

atlas clusters create IndexTestCluster \

--provider AWS \

--region US_EAST_1 \

--tier M0

<$>[warning] Atlas limits the number of M0 clusters that may exist within a single project. If you’ve reached the limit, consider using the existing cluster or changing to M10 at an additional cost. <$>

<$>[info] Cluster creation may take 3-5 minutes. You can watch the status with atlas clusters watch IndexTestCluster. <$>

Output{

"clusterType": "REPLICASET",

"connectionStrings": {},

"diskSizeGB": 10,

"groupId": "11223344556677889900aabb",

"id": "696005ebf9671da41311a91d",

"mongoDBMajorVersion": "8.0",

"mongoDBVersion": "8.0.17",

"name": "IndexTestCluster",

...

}

The groupId field of the output will match the projectId you selected during authentication.

3. Load Sample Data

Once the cluster is running, load the standard sample datasets. This includes the sample_mflix database we will use for our movie queries:

atlas clusters sampleData load IndexTestCluster

Output{

"_id": "6960098a67cc384a6059c0a4",

"clusterName": "IndexTestCluster",

"createDate": "2026-01-08T19:46:18Z",

"state": "WORKING"

}

4. Create a Database User

Next, create a database user that your Node.js script will use to connect.

<$>[info] The --deleteAfter option can optionally be added to the command with a date in ISO 8601 format (e.g., 2026-01-10T00:00:00Z) to automatically remove the user based on your requested date/time. It’s included in the example below and should be changed to a reasonable date and time for your use. <$>

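Replace <username>, <password>, <databaseName>, and <date> with your own values, choosing secure credentials. The scripts in this tutorial use the sample_mflix database, so that is the database to grant the roles on: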

atlas dbusers create \

--username <username> \

--password <password> \

--projectId 11223344556677889900aabb \

--role readWrite@<databaseName> \

--role dbAdmin@<databaseName> \

--deleteAfter <date>

Here is the output when creating the “index_test” user account with an expiration of 2026-01-10T00:00:00Z.

Output{

"databaseName": "<databaseName>",

"deleteAfterDate": "2026-01-10T00:00:00Z",

"groupId": "11223344556677889900aabb",

"roles": [

{

"databaseName": "<databaseName>",

"roleName": "readWrite"

},

{

"databaseName": "<databaseName>",

"roleName": "dbAdmin"

}

],

"scopes": [],

"username": "index_test",

...

}

Note: Remember that this cluster is accessible from the internet, depending on its configuration. Picking a secure username and password is essential for preventing unauthorized access.
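If you'd rather not type the --deleteAfter timestamp by hand, a few lines of Node.js can generate one. This is a minimal sketch: deleteAfterDate is a hypothetical helper name, and dropping the milliseconds is only done to match the 2026-01-10T00:00:00Z style shown in this tutorial.

```javascript
// Hypothetical helper: returns an ISO 8601 UTC timestamp `days` days after `from`,
// without milliseconds, to match the 2026-01-10T00:00:00Z style used in this tutorial.
function deleteAfterDate(days, from = new Date()) {
  const msPerDay = 24 * 60 * 60 * 1000;
  const target = new Date(from.getTime() + days * msPerDay);
  // toISOString() always returns UTC in the form YYYY-MM-DDTHH:mm:ss.sssZ
  return target.toISOString().replace(/\.\d{3}Z$/, "Z");
}

// Print a timestamp two days from now
console.log(deleteAfterDate(2));
```

You can paste the printed value in place of <date> in the commands in this step.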

5. Allow Network Access

Allow your current IP address to connect to the cluster. Update the <projectId> and <date> values with the project ID chosen during authentication and an expiration date in ISO 8601 format:

atlas accessLists create \

--currentIp \

--projectId <projectId> \

--deleteAfter <date>

Output{

"results": [

{

"cidrBlock": "104.30.164.0/32",

"deleteAfterDate": "2026-01-10T00:00:00Z",

"groupId": "11223344556677889900aabb",

"ipAddress": "104.30.164.0"

}

],

"totalCount": 1

}

6. Get the Connection String

Finally, retrieve the connection string needed for your code. Copy the standardSrv connection string (e.g., mongodb+srv://...):

atlas clusters connectionStrings describe IndexTestCluster

Output{

"standard": "...",

"standardSrv": "mongodb+srv://indextestcluster.a3sx9.mongodb.net"

}

We’ll use the example connection string mongodb+srv://indextestcluster.a3sx9.mongodb.net going forward. Make sure to use the one supplied to you by the last command.

Step 2: Setting Up the Node.js Project

Initialize a new Node.js project and install the MongoDB driver:

mkdir mongo-indexes

cd mongo-indexes

npm init -y

npm install mongodb

For a deeper overview of MongoDB operations in Node.js, see How To Perform CRUD Operations in MongoDB.

OutputWrote to ~/mongo-indexes/package.json:

{

"name": "mongo-indexes",

"version": "1.0.0",

"description": "",

"main": "index.js",

"author": "",

...

}

added 12 packages, and audited 13 packages in 4s

found 0 vulnerabilities

Create a file named index_test.js. You will use this file to run the index examples throughout this tutorial. Add the following boilerplate code to connect to your Atlas cluster.

<$>[warning] Remember to replace the placeholder connection string with the one you copied in Step 1, including your actual username and password. <$>

const { MongoClient } = require('mongodb');

// Replace with your connection string from Step 1

const uri = "mongodb+srv://<username>:<password>@indextestcluster.a3sx9.mongodb.net";

const client = new MongoClient(uri);

async function main() {

try {

await client.connect();

console.log("Connected to MongoDB Atlas");

// We will use the 'sample_mflix' database provided by the sample data

const db = client.db("sample_mflix");

const movies = db.collection("movies");

// Additional code will go here

} finally {

await client.close();

}

}

main().catch(console.error);

Run the script in your terminal window to verify connectivity:

node index_test.js
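If the connection fails with an authentication or parsing error, check whether your password contains characters such as @, :, or /. These must be percent-encoded inside a MongoDB connection string. The sketch below shows one way to do that; buildUri is a hypothetical helper, and the credentials are placeholders.

```javascript
// Hypothetical helper: inserts percent-encoded credentials into an SRV connection string.
function buildUri(srvUri, username, password) {
  const prefix = "mongodb+srv://";
  if (!srvUri.startsWith(prefix)) {
    throw new Error("Expected a mongodb+srv:// connection string");
  }
  const host = srvUri.slice(prefix.length);
  // encodeURIComponent escapes reserved characters such as @ : and /
  const user = encodeURIComponent(username);
  const pass = encodeURIComponent(password);
  return `${prefix}${user}:${pass}@${host}`;
}

// Placeholder credentials for illustration only
console.log(
  buildUri("mongodb+srv://indextestcluster.a3sx9.mongodb.net", "index_test", "p@ss:word/1")
);
```

The result can be assigned to the uri constant in index_test.js in place of the hand-written string.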

Step 3: Simulating Linear vs. Binary Search

Before running queries against the database, let’s create a standalone script to simulate why indexes are faster. An index essentially allows the database to switch from a “Linear Search” (checking items one by one) to a “Binary Search” (dividing the search space in half repeatedly).

Create a new file named search_demo.js:

nano search_demo.js

Add the following code. This script generates a sorted dataset of 1 million numbers and runs two search functions against it to compare the number of iterations required.

// Generate a large sorted dataset (1 to 1,000,000)

const dataSize = 1000000;

const dataset = Array.from({ length: dataSize }, (_, i) => i + 1);

// Uncomment the line below after running the code at least once. Binary search needs sorted input, so its results will likely be wrong after shuffling.

// shuffle(dataset)

/**

* Linear Search

* Iterates through the array until the target is found.

*/

function linearSearch(arr, target) {

let iterations = 0;

for (let i = 0; i < arr.length; i++) {

iterations++;

if (arr[i] === target) {

return { found: true, iterations };

}

}

return { found: false, iterations };

}

/**

* Binary Search

* Uses a half-interval search loop to eliminate half the dataset in each step.

* Requires a sorted array.

*/

function binarySearch(arr, target) {

let iterations = 0;

let left = 0;

let right = arr.length - 1;

while (left <= right) {

iterations++;

const mid = Math.floor((left + right) / 2);

if (arr[mid] === target) {

return { found: true, iterations };

}

if (arr[mid] < target) {

left = mid + 1;

} else {

right = mid - 1;

}

}

return { found: false, iterations };

}

/**

* Shuffle Array

* Randomizes the order of elements in the array.

*/

function shuffle(array) {

for (let i = array.length - 1; i > 0; i--) {

const j = Math.floor(Math.random() * (i + 1));

[array[i], array[j]] = [array[j], array[i]];

}

}

// Look for a number near the start, middle, end of the array, and a value that doesn't exist.

const targets = [10, 500000, 999999, -1];

console.log(`Running searches on dataset of size: ${dataSize.toLocaleString()}\n`);

console.log("Target\t\tLinear Iterations\tBinary Iterations\tLinear Found\tBinary Found");

console.log("---------------------------------------------------------");

// Compare performance

targets.forEach(target => {

const linearResult = linearSearch(dataset, target);

const binaryResult = binarySearch(dataset, target);

console.log(

`${target}\t\t${linearResult.iterations}\t\t\t${binaryResult.iterations}\t\t\t${linearResult.found}\t\t\t${binaryResult.found}`

);

});

Run the simulation by executing the new code file:

node search_demo.js

You should see the following output:

OutputRunning searches on dataset of size: 1,000,000

Target Linear Iterations Binary Iterations Linear Found Binary Found

---------------------------------------------------------

10 10 20 true true

500000 500000 1 true true

999999 999999 19 true true

-1 1000000 19 false false

There’s a massive difference between linear search and binary search. To find the number 999,999 near the end of the list, linear search took nearly 1 million checks, which makes sense since it starts searching at the beginning of the list. Binary search, which starts in the middle of the list and eliminates half the remaining possible values at each step, took only 19 checks.

The catch? Binary search requires the list to be sorted. Try randomizing the list order by running shuffle(dataset) after creating the dataset variable. The results of the binary search function will likely be wrong.

This is the purpose and value of indexes: they keep your data sorted so MongoDB can use a binary search algorithm.
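An index does not sort the collection itself. It is a separate sorted structure whose entries point back at the documents. The sketch below illustrates that idea with made-up data and hypothetical helper names (buildIndex, findByKey). It uses a flat sorted array for simplicity, whereas MongoDB's indexes are B-tree structures.

```javascript
// Documents stored in arbitrary (insertion) order, like a collection
const docs = [
  { title: "C", wins: 12 },
  { title: "A", wins: 3 },
  { title: "E", wins: 40 },
  { title: "B", wins: 7 },
  { title: "D", wins: 25 },
];

// Build the "index": { key, position } entries sorted by key.
// The documents themselves are never reordered.
function buildIndex(collection, field) {
  return collection
    .map((doc, position) => ({ key: doc[field], position }))
    .sort((a, b) => a.key - b.key);
}

// Binary search the index for an exact key, then follow the pointer to the document
function findByKey(collection, index, target) {
  let left = 0;
  let right = index.length - 1;
  let iterations = 0;
  while (left <= right) {
    iterations++;
    const mid = Math.floor((left + right) / 2);
    if (index[mid].key === target) {
      return { doc: collection[index[mid].position], iterations };
    }
    if (index[mid].key < target) {
      left = mid + 1;
    } else {
      right = mid - 1;
    }
  }
  return { doc: null, iterations };
}

const winsIndex = buildIndex(docs, "wins");
console.log(findByKey(docs, winsIndex, 25)); // finds the document titled "D"
console.log(findByKey(docs, winsIndex, 99)); // no match, doc is null
```

Because only the index is sorted, the same collection can have several indexes on different fields, each kept in its own order.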

Step 4: Querying with a COLLSCAN (No Index)

Let’s apply this concept by querying the database. We’ll start with a full collection scan (COLLSCAN), which examines every document in the collection for matches.

We’ll query for movies that won a specific number of awards. The awards.wins field is not indexed, so it’s a good field to query for generating a COLLSCAN. We will use the .explain('executionStats') method to examine how the database executes the query.

Return to the file we created earlier called index_test.js. Add the following code inside the main function by replacing the line // Additional code will go here:

// Query without an index

console.log("Querying without Index (COLLSCAN)");

const queryNoIndex = { "awards.wins": 5 };

const explanation1 = await movies.find(queryNoIndex)

.explain('executionStats');

console.log(`Execution Stage: ${explanation1.executionStats.executionStages.stage}`);

console.log(`Keys Examined: ${explanation1.executionStats.totalKeysExamined}`);

console.log(`Documents Examined: ${explanation1.executionStats.totalDocsExamined}`);

console.log(`Documents Returned: ${explanation1.executionStats.nReturned}`);

console.log(`Time Taken: ${explanation1.executionStats.executionTimeMillis}ms`);

Save the file and run the script:

node index_test.js

OutputConnected to MongoDB Atlas

Querying without Index (COLLSCAN)

Execution Stage: COLLSCAN

Keys Examined: 0

Documents Examined: 21349

Documents Returned: 24

Time Taken: 21ms

Note: The sample data set may change slightly over time. It’s OK if your output doesn’t match the numbers here exactly. The concepts discussed will remain consistent and observable regardless of whether or how the data changes.

You should see Execution Stage: COLLSCAN. The Documents Examined count should equal the total number of documents in the collection (about 21,000 in this run). That means MongoDB examined more than 21,000 documents to return 24. Keys Examined is zero because no index was used. You will revisit this value shortly.

This confirms that MongoDB had to check every document to find the matches.

Note: Running the script multiple times will show different values for “Time Taken” because the database loads the collection into database cache after the first run. The “Documents Examined” value remains consistent across runs.
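The later steps read the same handful of fields from every explain result, so it can help to collect them in one place. The following is a minimal sketch: summarizeExplain is a hypothetical helper, it only follows a single chain of inputStage links (plans with branching stages are not handled), and the mock object simply reuses the numbers from the output above.

```javascript
// Hypothetical helper: summarizes the fields this tutorial reads from
// explain('executionStats') output.
function summarizeExplain(explanation) {
  const stats = explanation.executionStats;

  // Walk from the top stage down through each inputStage to list the plan
  const stages = [];
  let stage = stats.executionStages;
  while (stage) {
    stages.push(stage.stage);
    stage = stage.inputStage; // undefined at the leaf, which ends the loop
  }

  return {
    stages,
    keysExamined: stats.totalKeysExamined,
    docsExamined: stats.totalDocsExamined,
    returned: stats.nReturned,
    // Documents read per document returned; closer to 1 means less wasted work
    docsPerResult:
      stats.nReturned > 0 ? stats.totalDocsExamined / stats.nReturned : null,
  };
}

// Mock object using the numbers from the COLLSCAN output above
const mockExplain = {
  executionStats: {
    nReturned: 24,
    totalKeysExamined: 0,
    totalDocsExamined: 21349,
    executionStages: { stage: "COLLSCAN" },
  },
};

console.log(summarizeExplain(mockExplain));
```

For the collection scan above, the ratio works out to roughly 890 documents read for each document returned, which is the waste an index is meant to remove.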

Step 5: Querying with a Single Field Index

Now, let’s build an index on awards.wins and query it. To make the scenario more realistic, we will perform a query with two predicates: awards.wins (indexed) and type (not indexed).

Update your main function to create the index and run the new query:

// Create Single Field Index

await movies.createIndex({ "awards.wins": 1 });

console.log("\nIndex created on 'awards.wins'");

console.log("Querying with a single field index");

// Query on an indexed field and unindexed field

const querySingleIndex = { "awards.wins": { "$gt": 30 }, "type": "movie" };

const explanation2 = await movies.find(querySingleIndex)

.explain('executionStats');

// We examine the input stage to see the index scan

console.log(`Execution Stage 1: ${explanation2.executionStats.executionStages.inputStage.stage}`);

console.log(`Execution Stage 2: ${explanation2.executionStats.executionStages.stage}`);

console.log(`Total Keys Examined: ${explanation2.executionStats.totalKeysExamined}`);

console.log(`Total Docs Examined: ${explanation2.executionStats.totalDocsExamined}`);

console.log(`Documents Returned: ${explanation2.executionStats.nReturned}`);

console.log(`Time Taken: ${explanation2.executionStats.executionTimeMillis}ms`);

Run the script again. Each step in this tutorial uses a different query so that an index created in one step doesn’t affect the results of another, which means you’ll need to compare the results individually. You will notice that Total Docs Examined in this query is significantly lower than in the last query.

OutputIndex created on 'awards.wins'

Querying with a single field index

Execution Stage 1: IXSCAN

Execution Stage 2: FETCH

Total Keys Examined: 331

Total Docs Examined: 331

Documents Returned: 326

Time Taken: 1ms

Here is a more detailed breakdown. The “execution stage” explains how the database gathers results. You’ll see IXSCAN this time, compared to COLLSCAN for the last query. That means we’re scanning an index to filter our result set without reviewing all documents in the collection. After the IXSCAN completes, MongoDB executes a FETCH stage.

- IXSCAN: MongoDB used the index to find pointers to documents where awards.wins is greater than 30.

- FETCH: It then retrieved those specific documents to check if the second condition (type: "movie") matched.

You’ll also notice that “Keys Examined” is no longer zero. This value counts the index entries (“keys”) MongoDB scanned, which shows that the query used the index instead of relying on whole-document reads alone.

While better, we can still improve the index. We’re querying on two fields but only one of them is indexed. The database can quickly reduce the result set compared to the entire collection of documents, but it still returns more entries from the index than documents for our result set (“Total Docs Examined” vs. “Documents Returned”). That means MongoDB has to load documents from disk or cache (FETCH) to check the unindexed type field.
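To make the IXSCAN and FETCH split concrete, here is a small in-memory simulation. It is a sketch, not how MongoDB is implemented: the data is made up, the index is a plain sorted array, and findWithSingleFieldIndex is a hypothetical name.

```javascript
// Tiny stand-in for the movies collection (made-up data)
const movies = [
  { title: "A", type: "movie", wins: 45 },
  { title: "B", type: "series", wins: 60 },
  { title: "C", type: "movie", wins: 5 },
  { title: "D", type: "movie", wins: 31 },
  { title: "E", type: "series", wins: 2 },
  { title: "F", type: "movie", wins: 30 },
];

// Single field "index" on wins: sorted keys pointing at document positions
const winsIndex = movies
  .map((doc, position) => ({ key: doc.wins, position }))
  .sort((a, b) => a.key - b.key);

function findWithSingleFieldIndex(minWins, type) {
  const stats = { keysExamined: 0, docsExamined: 0, returned: [] };

  // IXSCAN: seek to the first key greater than minWins, then read forward.
  // (findIndex is linear here; a real index locates this point with a tree descent.)
  const start = winsIndex.findIndex((entry) => entry.key > minWins);
  if (start === -1) return stats;

  for (let i = start; i < winsIndex.length; i++) {
    stats.keysExamined++;

    // FETCH: load the full document, because `type` is not stored in the index
    const doc = movies[winsIndex[i].position];
    stats.docsExamined++;

    if (doc.type === type) stats.returned.push(doc.title);
  }
  return stats;
}

console.log(findWithSingleFieldIndex(30, "movie"));
```

With this data, three keys and three documents are examined but only two documents are returned. The extra fetch is the series with 60 wins, which passes the indexed predicate and is rejected only after the document is loaded.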

For more on MongoDB index structures and best practices, see How To Use Indexes in MongoDB.

Step 6: Querying with a Compound Index (Optimized)

To fully optimize the query, we can create a compound index that includes multiple fields. To ensure our tests remain isolated and idempotent, we will use a different query example for this step, targeting movies by year and imdb.rating.

DigitalOcean’s Managed MongoDB service supports all standard index types covered in this tutorial.

Update your main function to include the new index and query:

// Create an index on year AND imdb.rating

await movies.createIndex({ "year": 1, "imdb.rating": 1 });

console.log("\nCompound index created on 'year' and 'imdb.rating'");

console.log("Querying with compound index");

// Query on year and rating

const queryCompound = { "year": { "$gt": 2010 }, "imdb.rating": { "$gt": 8.5 } };

const explanation3 = await movies.find(queryCompound)

.explain('executionStats');

console.log(`Execution Stage 1: ${explanation3.executionStats.executionStages.inputStage.stage}`);

console.log(`Execution Stage 2: ${explanation3.executionStats.executionStages.stage}`);

console.log(`Total Keys Examined: ${explanation3.executionStats.totalKeysExamined}`);

console.log(`Total Docs Examined: ${explanation3.executionStats.totalDocsExamined}`);

console.log(`Documents Returned: ${explanation3.executionStats.nReturned}`);

console.log(`Time Taken: ${explanation3.executionStats.executionTimeMillis}ms`);

Run the script. This time, “Total Docs Examined” is equal to “Documents Returned.” That’s a ratio of 1 (33 total docs examined to 33 documents returned).

Compound index created on 'year' and 'imdb.rating'

Querying with compound index

Execution Stage 1: IXSCAN

Execution Stage 2: FETCH

Total Keys Examined: 44

Total Docs Examined: 33

Documents Returned: 33

Time Taken: 0ms
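Notice that Total Keys Examined (44) is higher than Total Docs Examined (33). Because each index entry carries both year and imdb.rating, MongoDB can reject an entry on its rating without fetching the document. The simulation below sketches that behavior with made-up data and a hypothetical findWithCompoundIndex function. It scans every entry in the year range, whereas the real query engine can skip ahead inside the index, so real counts will differ.

```javascript
// Tiny stand-in for the movies collection (made-up data)
const movies = [
  { title: "A", year: 2012, rating: 8.8 },
  { title: "B", year: 2009, rating: 9.0 },
  { title: "C", year: 2011, rating: 7.1 },
  { title: "D", year: 2014, rating: 8.6 },
  { title: "E", year: 2013, rating: 8.5 },
  { title: "F", year: 2010, rating: 8.9 },
];

// Compound "index" on { year: 1, rating: 1 }: sorted by year, then by rating
const compoundIndex = movies
  .map((doc, position) => ({ year: doc.year, rating: doc.rating, position }))
  .sort((a, b) => a.year - b.year || a.rating - b.rating);

function findWithCompoundIndex(minYear, minRating) {
  const stats = { keysExamined: 0, docsExamined: 0, returned: [] };

  // Seek to the first entry whose year is greater than minYear
  const start = compoundIndex.findIndex((entry) => entry.year > minYear);
  if (start === -1) return stats;

  for (let i = start; i < compoundIndex.length; i++) {
    const entry = compoundIndex[i];
    stats.keysExamined++;

    // The rating is part of the key, so this entry is rejected without a fetch
    if (entry.rating <= minRating) continue;

    // Only entries that satisfy both predicates lead to a document read
    stats.docsExamined++;
    stats.returned.push(movies[entry.position].title);
  }
  return stats;
}

console.log(findWithCompoundIndex(2010, 8.5));
```

Here four keys are examined but only two documents are read, and both are returned, which mirrors the 1:1 ratio of documents examined to documents returned in the output above.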

Step 7: Covered Queries

Our compound index can’t be improved to better serve the query { "year": { "$gt": 2010 }, "imdb.rating": { "$gt": 8.5 } }. It is already the optimal compound index for this query. Even so, the database is still performing two stages: IXSCAN and FETCH. Most queries will require executing at least these two stages. To experiment a bit more, let’s modify our query to make things even faster.

Let’s run the same query again, but change the projection (the part of the query that changes what the result set looks like after it executes). Update your main function by adding the following:

console.log("\nQuerying with compound index using a covered query");

// We use projection to exclude the _id field and include only indexed fields

const explanation4 = await movies.find(queryCompound)

.project({ "_id": 0, "year": 1, "imdb.rating": 1 })

.explain('executionStats');

console.log(`Execution Stage: ${explanation4.executionStats.executionStages.stage}`);

console.log(`Total Keys Examined: ${explanation4.executionStats.totalKeysExamined}`);

console.log(`Total Docs Examined: ${explanation4.executionStats.totalDocsExamined}`);

We didn’t change the query at all, and we left the index alone. You will still see a change in the explain plan.

OutputQuerying with compound index using a covered query

Execution Stage: PROJECTION_DEFAULT

Total Keys Examined: 44

Total Docs Examined: 0

The top-level “Execution Stage” changes from FETCH to PROJECTION_DEFAULT, which here indicates that a covered query is being executed. The index scan still runs as its input, but the FETCH stage no longer happens because MongoDB does not need to fetch any documents from disk (or cache). The index has everything necessary to satisfy the query. You should see that Total Docs Examined is now 0 (or very close to it).

If the index contains all the fields required by the query (including the projected fields), MongoDB doesn’t need to look at the actual documents at all. It can return the results directly from the index. This is known as a covered query.
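The rule can be sketched as a small check. This is a simplified illustration, not MongoDB's planner logic: isCovered is a hypothetical helper that only looks at top-level field names in the filter and at inclusion projections, and it ignores other restrictions such as array fields.

```javascript
// Hypothetical helper: simplified check for whether an index could cover a query.
function isCovered(indexKeys, filter, projection) {
  const indexed = new Set(Object.keys(indexKeys));

  // Every field used in the filter must be part of the index
  const filterCovered = Object.keys(filter).every((field) => indexed.has(field));

  // Every field the projection includes must also be part of the index
  const included = Object.keys(projection).filter((field) => projection[field] === 1);
  const projectionCovered =
    included.length > 0 && included.every((field) => indexed.has(field));

  // _id is returned by default, so it must be excluded unless the index contains it
  const idHandled = projection._id === 0 || indexed.has("_id");

  return filterCovered && projectionCovered && idHandled;
}

const index = { year: 1, "imdb.rating": 1 };
const filter = { year: { $gt: 2010 }, "imdb.rating": { $gt: 8.5 } };

// The projection used in this step: covered
console.log(isCovered(index, filter, { _id: 0, year: 1, "imdb.rating": 1 }));
// Forgetting to exclude _id: not covered
console.log(isCovered(index, filter, { year: 1, "imdb.rating": 1 }));
// Asking for a field outside the index: not covered
console.log(isCovered(index, filter, { _id: 0, year: 1, title: 1 }));
```

The second and third calls show the two most common ways a query stops being covered: leaving _id in the result, or projecting a field that the index does not store.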

There’s much more to say about compound indexes, especially around field order. We won’t cover that extensive topic here, but more information about how these indexes work is available in the MongoDB documentation.

Step 8: Managing Data with TTL Indexes

A TTL (Time-To-Live) Index is a special single-field index that MongoDB can use to automatically remove documents from a collection after a certain amount of time. This is ideal for temporary data like machine logs, user sessions, or password reset tokens.

Add this example to your code. We will create a temporary collection for this demonstration:

const sessions = db.collection("user_sessions");

// Create a TTL index where documents will expire 2 seconds after the 'createdAt' time

await sessions.createIndex({ "createdAt": 1 }, { expireAfterSeconds: 2 });

console.log("\nTTL Index created on 'user_sessions'");

for(let i = 0; i < 10; i += 1) {

// Insert a document

let date = new Date();

date.setSeconds(date.getSeconds() + i)

await sessions.insertOne({

sessionId: "12345",

user: "jdoe",

createdAt: date

});

}

console.log("Session documents inserted. Waiting for TTL deletions (up to ~100 seconds)...");

for(let i = 0; i < 20; i += 1) {

// Sleep 5 seconds

await (new Promise(resolve => setTimeout(resolve, 5000)));

// Count all documents in the collection

let count = await sessions.countDocuments({});

console.log(`There are ${count} documents in the user_sessions collection`);

if (count === 0) {

break;

}

}

Note: MongoDB doesn’t delete documents at the exact moment associated with the TTL index. It uses a background process that deletes at regular intervals (typically about every 60 seconds). The loop above waits for up to ~100 seconds so you should usually see the documents disappear during the run, but depending on timing, it may still take a bit of extra time for the sample documents to be removed.

Performance Consideration: Avoid clustering large numbers of documents with the exact same deletion time (e.g., bulk importing millions of logs with the same timestamp). When the TTL thread wakes up (every 60 seconds), deleting a massive number of documents simultaneously can overwhelm the database, causing performance spikes. Spread out your write operations or expiration times if possible.
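The timing described in the notes above can be worked through with plain date arithmetic. This sketch assumes a fixed 60-second sweep starting at a known time, which is a simplification, and expiresAt and removalSweep are hypothetical helper names.

```javascript
// A document becomes eligible for deletion at createdAt + expireAfterSeconds
function expiresAt(createdAt, expireAfterSeconds) {
  return new Date(createdAt.getTime() + expireAfterSeconds * 1000);
}

// Assumes the background task runs every sweepSeconds, starting at firstSweep.
// Returns the time of the first sweep at or after the expiry time.
function removalSweep(expiry, firstSweep, sweepSeconds = 60) {
  const intervalMs = sweepSeconds * 1000;
  const elapsed = expiry.getTime() - firstSweep.getTime();
  const sweepsNeeded = Math.max(0, Math.ceil(elapsed / intervalMs));
  return new Date(firstSweep.getTime() + sweepsNeeded * intervalMs);
}

const t0 = new Date("2026-01-08T12:00:00Z");
const firstSweep = new Date("2026-01-08T12:00:30Z"); // assumed sweep schedule

// Same pattern as the loop above: each document's createdAt is offset by i seconds
for (let i = 0; i < 10; i += 3) {
  const createdAt = new Date(t0.getTime() + i * 1000);
  const expiry = expiresAt(createdAt, 2);
  console.log(
    `created ${createdAt.toISOString()}, expires ${expiry.toISOString()}, ` +
      `removed by ${removalSweep(expiry, firstSweep).toISOString()}`
  );
}
```

Under these assumptions every sample document expires within about 11 seconds of the start, yet none is removed until the sweep at 12:00:30. That gap is why the script polls for a while instead of expecting the count to drop immediately.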

Frequently Asked Questions

1. How do MongoDB indexes affect write performance?

Every index you add requires MongoDB to update the index structure on each insert, update, or delete operation. A collection with 10 indexes needs 10 index updates per write. For read-heavy workloads, the trade-off is worth it. For write-heavy workloads, keep your index count low and only index fields you query often.

2. When should you use a compound index instead of multiple single field indexes?

Use a compound index when your queries filter on two or more fields together. MongoDB typically uses one index per query. If you have separate indexes on year and rating, MongoDB picks the one it expects to be more selective and still scans documents for the other field. A compound index on { year: 1, rating: 1 } handles both predicates in a single index scan.

3. What is the difference between COLLSCAN and IXSCAN in MongoDB?

COLLSCAN means MongoDB scans every document in the collection to find matches. IXSCAN means MongoDB uses an index to narrow down results before fetching documents. You want IXSCAN in production queries. Run .explain('executionStats') on your queries to verify the scan type.

4. How do TTL indexes handle bulk deletions?

MongoDB runs a background thread every 60 seconds to remove expired documents. If millions of documents expire at the same time, the deletion batch puts heavy load on the database. Spread out your expiration times when inserting large volumes of temporary data to avoid performance spikes.
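One way to spread expirations is to add a small random offset to each document's expiry time. The sketch below assumes you store the expiry in a field such as expireAt and create the TTL index on that field with expireAfterSeconds set to 0, so each document expires at its own stored time. jitteredExpiry is a hypothetical helper and the 600-second window is an arbitrary example.

```javascript
// Hypothetical helper: adds up to maxJitterSeconds of random delay to a base expiry.
// `random` is injectable so the function can be checked deterministically.
function jitteredExpiry(baseExpiry, maxJitterSeconds, random = Math.random) {
  const jitterMs = Math.floor(random() * maxJitterSeconds * 1000);
  return new Date(baseExpiry.getTime() + jitterMs);
}

const base = new Date("2026-01-10T00:00:00Z");

// Five made-up log documents whose expiry times are spread over 10 minutes
const batch = Array.from({ length: 5 }, (_, i) => ({
  logId: i,
  expireAt: jitteredExpiry(base, 600),
}));

console.log(batch);
```

With a 60-second sweep, a 10-minute window spreads a bulk import across roughly ten deletion passes instead of one.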

5. Do MongoDB indexes consume additional storage?

Yes. Each index stores a copy of the indexed field values along with pointers to the original documents. On large collections, indexes add significant storage overhead. Use db.collection.stats() to check your index sizes and remove unused indexes with db.collection.dropIndex().

Conclusion

In this tutorial, you improved MongoDB query performance by moving from a full collection scan to a compound index.

- COLLSCAN: Checks every document. This is the slowest approach.

- Single Field Index: Finds matches for one field quickly, but still fetches documents to filter by other fields.

- Compound Index: Covers multiple query predicates and reduces document examinations to a 1:1 ratio.

- Covered Query: Returns results directly from the index without accessing the document store.

- TTL Index: Automates data cleanup by expiring old documents on a schedule.

To learn more about indexing strategies, refer to the MongoDB Indexing Documentation.


Try DigitalOcean Managed MongoDB

If you want to run MongoDB in production without managing infrastructure, try DigitalOcean Managed MongoDB. You get automated backups, high availability, and independent storage scaling, all within a fully managed service. Sign up for DigitalOcean and get $200 in free credit to get started.
