By Adrien Payong and Shaoni Mukherjee

AI automation lets us build workflows driven by machine learning models. Instead of hard-coding “if-this-then-that” scripts, we can layer AI models on top of traditional business workflows. The resulting system can understand unstructured data, adapt to new situations, and make judgment calls that would otherwise require an experienced practitioner.

This comprehensive guide will explain what AI automation is, how it differs from traditional Business Process Automation, and when to choose simple workflows vs intelligent agents. We will discuss major architectural patterns and provide cheat sheets that you can use to prototype your own builds.

Key Takeaways

- AI automation = rules + judgment: Classic automation runs the steps; AI adds the ability to interpret messy inputs (emails, free text, docs) and make routing decisions.

- Workflow vs agent is the main design choice: Use workflows when you can explicitly map the process; use agents only when the path is genuinely dynamic—and then add guardrails.

- Reliability comes from the backbone: A production pattern is Trigger → Preprocess → LLM → Tool calls → Postprocess → Store/Log, with strict validation at every boundary.

- Guardrails are non-negotiable: Enforce structured outputs (JSON), require approvals for risky or high-value actions, and control usage with budgets/rate limits plus full audit logs.

- Scale safely with discipline: Reduce cost via batching, caching, smaller models, and context pruning; reduce risk via evals/regression tests, monitoring, and rollback/versioning.

What is AI automation?

AI automation means automating tasks using artificial intelligence, machine learning, or natural language processing (NLP). The key distinction is the move from purely rule-based logic to automation that can interpret inputs, learn from data, and adapt. Traditional automation is great for well-defined tasks (“when a new invoice arrives, copy data to ERP”), but you need AI when inputs or decisions are fuzzy. AI automation can handle uncertainty, process unstructured data (emails, free text, images), and make decisions that are too complex for rules to capture. In short, AI automation isn’t magic: AI isn’t replacing logic, it’s augmenting it.

AI Business process automation vs classic automation

AI-enabled automation (including “AI agents”) is most helpful when the business process you want to automate involves judgment steps or messy inputs. Think of how an AI could triage incoming RFPs (Requests for Proposal) or support emails, surface sales leads by analyzing market data, or translate natural language customer feedback into structured tickets. These use cases involve natural language processing or pattern recognition that would be difficult (if not impossible) to encode with classic rules. Classic BPA is best suited to cases where each step can be determined in advance. The easiest place to start is with repetitive tasks that have a known structure; then apply AI where the input or the routing requires some intelligence.

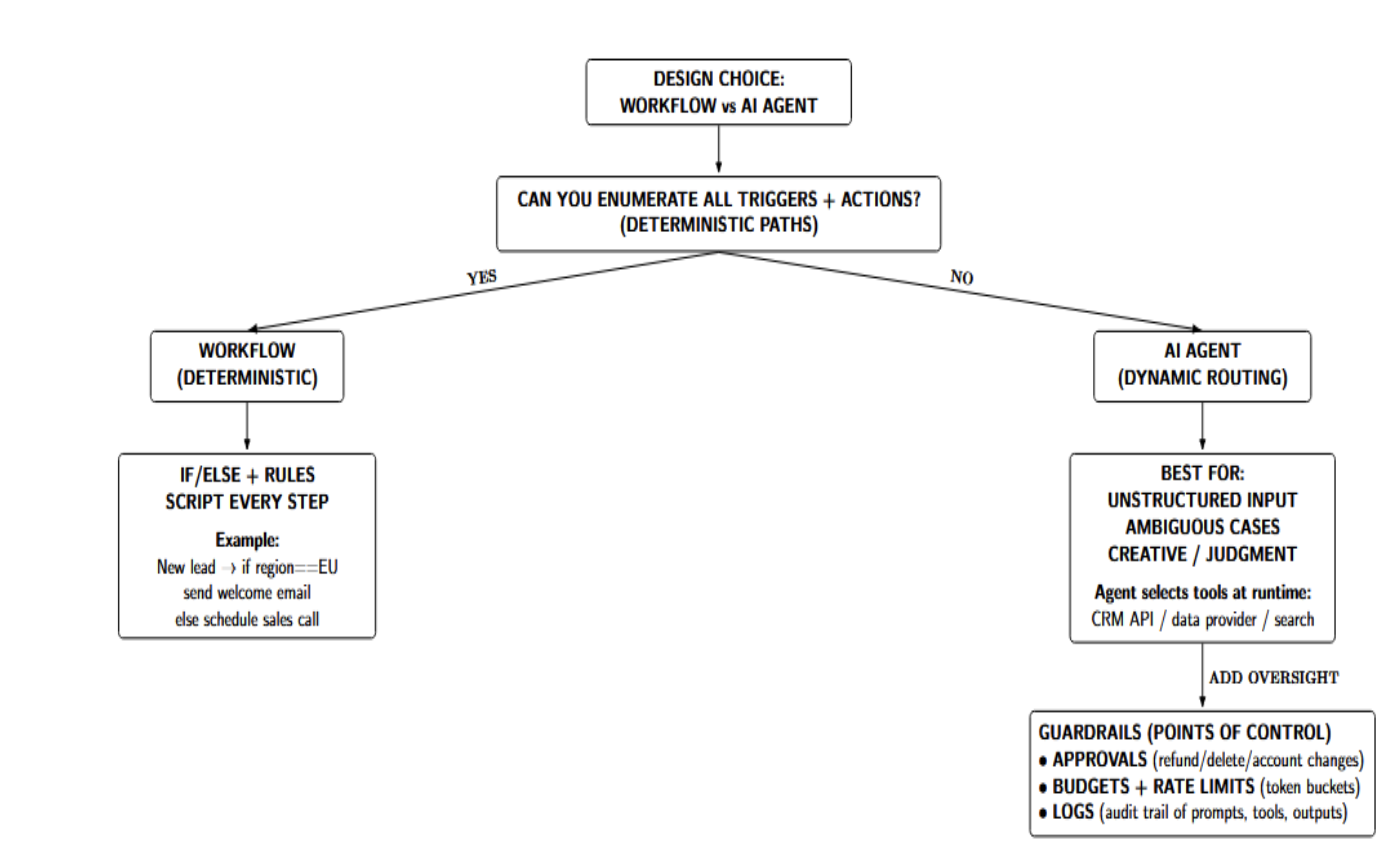

Workflows vs AI agents (decision framework)

The first design choice is whether to orchestrate the automation as a workflow or to hand control to an AI agent. Workflow automations are ideal when every step can be scripted explicitly. Agents work best when the path is dynamic or when judgment calls are needed along the way.

- Use workflow when steps are deterministic. If you can draw a flowchart that lists all possible triggers and actions, code it as a workflow. For example: “When a new lead is added to Salesforce, if region == Europe then send a welcome email, else schedule a sales call.”

- Use agents when routes are variable (with guardrails). If the workflow involves unstructured input or creative work, agents are preferred. AI agents process natural language or complex data, then choose which steps to take at runtime. A sales agent might conditionally call the CRM API or a data provider, depending on the context mentioned in the conversation. Rather than following preset paths, agents interpret your goal and dynamically execute the steps accordingly. This is powerful, but requires guardrails. You should identify points of control where you’ll always maintain oversight:

- Approvals: Certain actions should always have human approval before execution. High-risk actions (refund/delete/account changes) or expensive operations should be held for review. An AI agent can draft a warranty refund, but the final action step should pause and ask a manager to confirm: “This will refund $X, please approve.”

- Budgets & rate limits: Keep track of utilization and throttle if needed. External API calls (e.g., to your CRM system or LinkedIn) should be gated by rate limiters or token buckets. Track cumulative LLM calls or credits to avoid surprise bills.

- Logs: Maintain an audit log of every decision made by the agent (details below). Associate each user query with the agent’s internal prompt, the chosen tool calls, and the outputs.
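
The approval and rate-limit guardrails above can be sketched in a few lines of Python. This is a minimal illustration, not a production implementation: the action names, the 100-dollar threshold, and the `needs_approval` and `TokenBucket` names are all assumptions made for the example.

```python
import time
from dataclasses import dataclass, field

# Hypothetical action names that must never run without human sign-off.
HIGH_RISK_ACTIONS = {"refund", "delete_account", "change_plan"}
APPROVAL_THRESHOLD_USD = 100.0  # assumed cutoff for "expensive" operations


def needs_approval(action: str, amount_usd: float = 0.0) -> bool:
    """Return True when a human must confirm before the action executes."""
    return action in HIGH_RISK_ACTIONS or amount_usd >= APPROVAL_THRESHOLD_USD


@dataclass
class TokenBucket:
    """Allow bursts of up to `capacity` calls, refilled at `rate` tokens per second."""
    capacity: float
    rate: float
    tokens: float = field(init=False)
    updated: float = field(init=False)

    def __post_init__(self) -> None:
        self.tokens = self.capacity
        self.updated = time.monotonic()

    def allow(self, cost: float = 1.0) -> bool:
        """Spend `cost` tokens if available; otherwise tell the caller to wait."""
        now = time.monotonic()
        # Refill based on elapsed time, never exceeding the bucket capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

An agent loop would call `needs_approval` before executing any tool call and pause for a human when it returns `True`, and would call `bucket.allow()` before each external API request.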

Choose your stack (quick comparison)

AI-powered automations can be built on many platforms. Here’s a quick comparison of the major ones to guide you towards the best toolkit for your team and use case.

| Platform | Core strengths/differentiators | Best fit & tradeoffs |
|---|---|---|
| Zapier | 8,000+ integrations, a fully managed SaaS product, and extremely approachable for non-developer teams. Increasing support for AI assistants/chatbots and AI agents. Its Model Context Protocol (MCP) support allows LLMs (such as ChatGPT/Claude) to securely call Zapier actions throughout Zapier’s app ecosystem. | Best for: fast setup, no-code prototyping, simple → moderately complex automations, maximum integration breadth. Tradeoffs: pricing is typically per task, so it can get costly on high-volume workloads; not the best option for custom business logic at scale. |
| Make (ex-Integromat) | Visual scenario builder with modules; allows more complex routing of data (routers, loops, aggregators, etc.) and offers richer visibility into data flow. Supports connections to AI services (e.g., OpenAI) via modules, HTTP, or custom calls. Generally more powerful branching/error handling than basic no-code tools. | Best for: intermediate users needing complex routing + transformations without writing code; often more cost-efficient per operation than task-based models. Tradeoffs: still SaaS-hosted; learning curve; not “AI-first” by design (AI is an add-on to workflows). |
| n8n | Open-source, developer-friendly node/trigger workflows; self-host anywhere for control and privacy. Supports code steps (JS/Python) and strong LLM tooling (e.g., LangChain nodes). Great for reliability patterns (idempotency), observability, and extensibility. | Best for: technical teams needing full control, on-prem/cloud hosting, advanced integrations, large data volumes, and custom logic. Tradeoffs: you manage infrastructure/ops; steeper learning curve than pure no-code SaaS. |
| Activepieces | Open-source BPA designed AI-first: native LLM integrations, content-based routing, decision branches, and support for AI agents. Offers hundreds of “pieces” (integrations), plus reliability features like auto-retry and versioning. Includes a built-in MCP server so external LLMs can call its actions (similar concept to Zapier MCP). | Best for: teams wanting AI-first, no-code-but-extensible workflows with open-source deployment options (self-host or cloud) and production-minded features. Tradeoffs: newer/less mature ecosystem vs. Zapier/Make; may have fewer battle-tested templates/community patterns. |

The different platforms can also work together. For instance, your team could develop basic automations on Zapier and migrate critical agents to a self-hosted solution like n8n or Activepieces when strict SLAs become necessary.

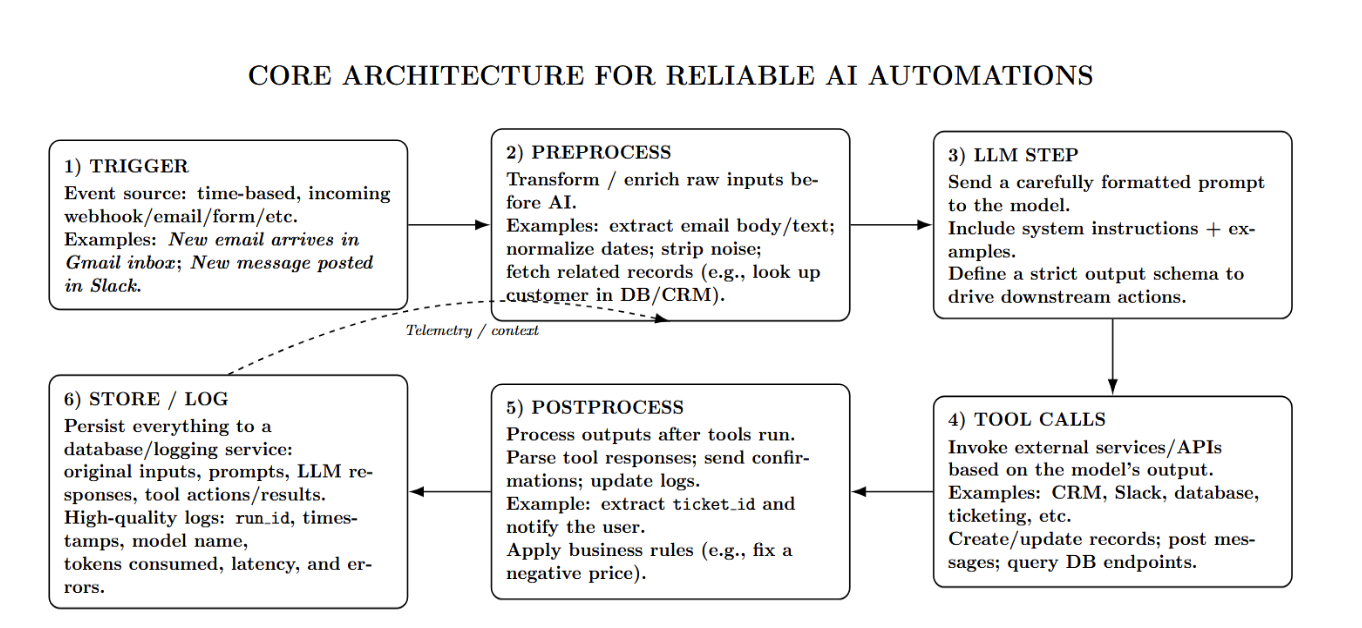

Core architecture for reliable AI automations

Regardless of which stack or language you’re working with, a robust backbone is what makes an automation reliable. A typical framework for AI automation looks like this: Trigger → Preprocess → LLM → Tool Calls → Postprocess → Store/Log. Here is what each step represents:

- Trigger: The trigger can be time-based, an incoming webhook/email/form/etc. or some other event. Example: “New email arrives in my Gmail inbox” or “New message is posted in my Slack channel.”

- Preprocess: Do some processing/transformation/enrichment on the raw inputs before sending them to your AI. Examples: Extract the email body/text, normalize dates, strip out irrelevant information, fetch related records you might need later (look up customer records from your database), etc.

- LLM step: Send your prompt to the desired language model. Format the prompt carefully. Provide system instructions, include one or more examples, and clearly define the output schema. The model’s response will be used to trigger downstream actions.

- Tool calls: Based on the model’s output, the automation invokes external services or APIs (CRM, Slack, database, etc.). This could be creating/updating a record in your CRM, posting a Slack message, calling a database API, etc.

- Postprocess: Perform additional processing after tools have completed their job. This could be parsing tool outputs, sending confirmations to users, updating a log, etc. For example, maybe you created a support ticket. You can parse the ticket ID from the tool response and send a message to the user notifying them of the ticket. Apply any business rules you need (price was given as a negative number? Oops, fix it!).

- Store/Log: Store the original inputs, the prompts sent to the LLM, the LLM’s responses, and the tool actions/results. High-quality logs contain a unique run_id, timestamps, model name, tokens consumed, and any errors.
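
The backbone above can be sketched as a single Python function. This is only an illustration under stated assumptions: `run_pipeline`, the `call_llm` callable, the `create_ticket` tool name, and the 4,000-character cap are hypothetical, and the model call is injected as a plain function so the sketch runs without any provider SDK.

```python
import json
import time
import uuid
from typing import Callable, Dict, List

MAX_BODY_CHARS = 4000  # assumed cap to keep prompts lean


def run_pipeline(event: Dict[str, str], call_llm: Callable[[str], str], log: List[dict]) -> dict:
    """Run one Trigger -> Preprocess -> LLM -> Tool -> Postprocess -> Log pass."""
    run_id = str(uuid.uuid4())
    started = time.time()

    # Preprocess: collapse whitespace and truncate the raw body.
    body = " ".join(event.get("body", "").split())[:MAX_BODY_CHARS]
    prompt = 'Reply with JSON like {"category": "<team>"} for this message:\n' + body

    # LLM step: the model call is injected so it can be stubbed in tests.
    raw_output = call_llm(prompt)

    # Validate at the boundary before any side effect happens.
    error = None
    try:
        category = json.loads(raw_output)["category"]
        if not isinstance(category, str):
            raise TypeError("category must be a string")
    except (json.JSONDecodeError, KeyError, TypeError) as exc:
        category, error = "needs_review", repr(exc)

    # Tool call: only a description of the action a real tool would perform.
    action = {"tool": "create_ticket", "queue": category}

    # Postprocess: shape the result returned to the caller.
    result = {"run_id": run_id, "category": category, "action": action}

    # Store/Log: keep enough detail to audit and replay the run.
    log.append({
        "run_id": run_id,
        "timestamp": started,
        "prompt": prompt,
        "raw_output": raw_output,
        "action": action,
        "error": error,
        "duration_s": time.time() - started,
    })
    return result
```

Invalid model output never reaches the tool step as-is: it is downgraded to a `needs_review` queue and the parsing error is kept in the log entry.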

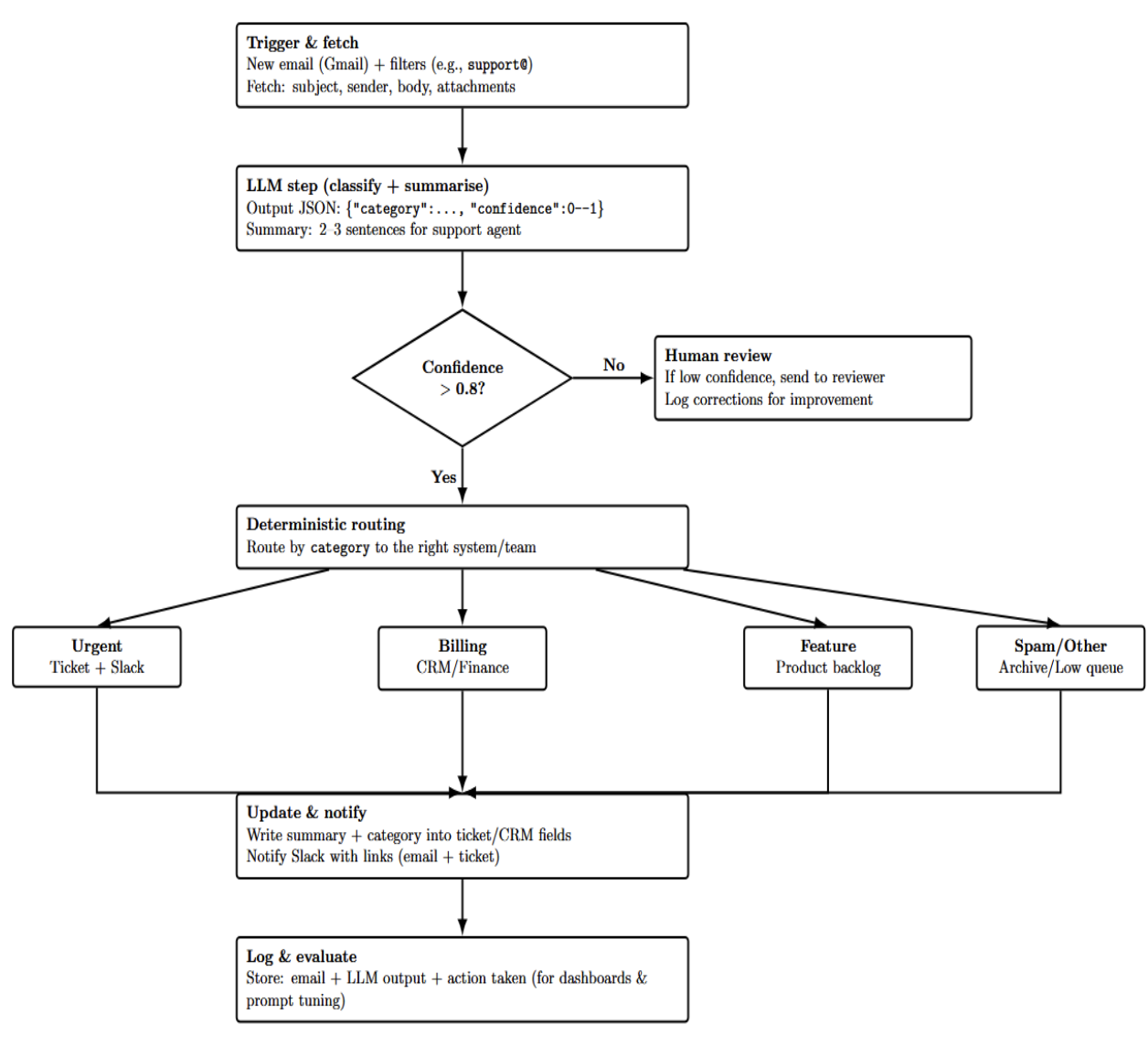

Build — AI Email Triage → Ticket + Slack summary (workflow)

Goal: Route incoming emails to the appropriate team, generate a summary, and post it to Slack, along with updating a help‑desk ticket.

Why this matters: Customer support teams get swamped with emails. Auto‑triaging emails saves response time and helps important messages rise to the surface. You can use this workflow template from the n8n guide as a starting point. It leverages Gmail triggers (or standard mail provider nodes), integrates OpenAI for classification and summarization, and sends notifications to Slack.

Steps

- Trigger & fetch – Trigger the workflow on “New Email” from Gmail or your mail provider of choice. Configure filters to process only relevant inboxes (e.g., support@). Retrieve the subject, sender, body, and attachments.

- Pre‑processing & classification – Feed the email into an LLM such as an OpenAI GPT model with a prompt such as: “Classify this email into one of the following categories: urgent support request, billing question, feature request, spam, or other. Respond with a JSON object {"category": <category>, "confidence": <0-1>}.” The model should respond in the required format with the selected category and a confidence level. You can adjust the prompt to add custom categories. Instruct the model not to make up a category when the email doesn’t fit one of those listed. Set a threshold (e.g., confidence > 0.8): accept results above it and send everything else to human review.

- Summarisation – Feed the body of the email to an LLM summarisation prompt: “Summarise the following email for forwarding to a customer support agent. Include the customer’s main issue/request and any actions they want taken. Limit the summary to 2–3 sentences.”

- Deterministic routing – Route the email based on the AI‑selected category. For example:

  - Urgent support request: Create a Zendesk/Jira ticket as high priority and post a Slack message to the support channel.

  - Billing question: Update a CRM system or route to the finance team.

  - Feature request: Append to a running product feedback document.

  - Spam/other: Archive or send to a low‑priority queue.

- Update systems & notify – Use API nodes to create/update tickets as needed. Include the AI summary and classification in the ticket as custom fields. Send a Slack message with the result, including links back to the email and the ticket.

- Logging & evaluation – Store the input email, AI response, and final action in a datastore. This will allow you to build dashboards to track how accurate your classification is. Forward all emails that fall below the confidence threshold to a human for verification. Log all corrections to improve your prompts.

- Error handling – Include error triggers with retry logic in case calls to external APIs fail. When sending updates or POST requests to your help‑desk system API, implement exponential backoff. This is a best practice in the n8n guide.
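
The classification threshold and the deterministic routing step can be sketched as follows. The category names and the 0.8 threshold come from the steps above; the route names (such as `create_high_priority_ticket`) and the `route_email` function are hypothetical placeholders for whatever nodes or API calls your platform provides.

```python
import json

CONFIDENCE_THRESHOLD = 0.8  # results at or below this go to a human

# Hypothetical route names; map them to real nodes/API calls in your platform.
ROUTES = {
    "urgent support request": "create_high_priority_ticket",
    "billing question": "route_to_finance",
    "feature request": "append_to_feedback_doc",
    "spam": "archive",
    "other": "low_priority_queue",
}


def route_email(llm_output: str) -> str:
    """Validate the model's JSON reply and return the route to take."""
    try:
        data = json.loads(llm_output)
        category = data["category"]
        confidence = float(data["confidence"])
    except (json.JSONDecodeError, KeyError, TypeError, ValueError):
        return "human_review"  # malformed or incomplete output

    # Reject categories the model invented and out-of-range confidences.
    if not isinstance(category, str) or category not in ROUTES:
        return "human_review"
    if not 0.0 <= confidence <= 1.0:
        return "human_review"

    # Only act automatically when the model is confident enough.
    if confidence <= CONFIDENCE_THRESHOLD:
        return "human_review"
    return ROUTES[category]
```

Keeping the routing table outside the prompt means the model only picks a label; what happens for each label stays deterministic and reviewable.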

Considerations

- PII & compliance: Mask/redact PII before sending content to the AI. Set guardrails to block certain information from being shared. Only send the necessary fields to LLMs.

- Latency: Summarisation can introduce delay. Use asynchronous processing for non-urgent categories; return an immediate acknowledgment to the sender while the workflow completes.

- Human override: Implement a human in the loop for low confidence categories or uncertain requests. This could be routed to a Slackbot or queue for agent review prior to ticket creation.
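
As a rough illustration of the PII masking step, the sketch below replaces email addresses and phone-like numbers with placeholders before text is sent to a model. The two regular expressions are simplified assumptions for the example and will miss many real-world formats; a production system would typically rely on a dedicated PII detection service or library.

```python
import re

# Simplified patterns for illustration only; real PII detection needs more coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Mask email addresses and phone-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text


masked = redact("Contact jane.doe@example.com or +1 (555) 010-9999 about order 42.")
print(masked)  # Contact [EMAIL] or [PHONE] about order 42.
```

Short numbers such as the order number are left alone because the phone pattern requires at least nine characters of digits and separators.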

You can see in this build how AI steps fit into deterministic workflows, allowing you to reduce manual triage but still retain control and oversight. The same pattern can be used for contact forms, chat logs, and social media DMs.

Cheat sheets

To accelerate development, use these quick-reference cheat sheets (and download the full PDFs if available).

Workflow nodes & triggers cheat sheet (n8n model)

Use this table as a cheat sheet for selecting the most popular automation triggers (or workflow starters). Decide which entry point to use based on whether you need real-time push events (webhooks/app triggers), runs that occur on a schedule (cron), inbox-driven processing (email), human submissions (forms/manual), or fallback polling if there is no webhook available.

| Trigger/Event | Best Use Case (when to pick it) | Example Node(s) + Online References |
|---|---|---|
| Webhook Trigger | Real-time inbound events from apps/services (fastest, most reliable “push” model). Ideal for app callbacks and event-driven automation. | n8n: Webhook node; Zapier: Webhooks by Zapier; Make: Make developer documentation |
| Cron / Scheduled Trigger | Time-based runs (daily digests, nightly syncs, periodic maintenance). Use when “time” is the trigger, not an app event. | n8n: Schedule Trigger node; GitHub Actions: schedule (cron) event; Make: Make developer documentation |
| Form / Interface Submit | Human-submitted requests (intake forms, approvals, data collection). Great for “human-in-the-loop” checkpoints. | n8n: n8n Form Trigger; Zapier: Zapier Interfaces; Typeform (via Zapier): Typeform integrations |
| API Poll (Scheduled Fetch) | When no webhook exists: check “latest updates” from an API on a cadence. Combine with scheduling + rate limits. | n8n: HTTP Request node + Schedule Trigger; Make: HTTP module + Scenario scheduling |

Prompt patterns cheat sheet (structured output, tool routing, refusal rules)

- Structured JSON Output: Request the model to reply in a strict JSON format. Example: “Analyze the email and output JSON: {“department”: “…”, “priority”: “…”}.” This makes parsing reliable.

- Step-by-Step Planning: If you want the agent to plan its steps for complex tasks, tell it to do it. Example: “List out the facts first. Then, determine what department is required. Finally, generate a summary.”

- Tool Invocation by Name: When working with agents, ask the model to include the name of the tool and the action. Example: “Call the Gmail API to send an email with subject X and body Y.”

- Refusal/Safe Completion: Include a safety clause in the system prompt. Example: “If asked to do something that is out of scope or unethical, respond with: ‘I’m sorry, I cannot comply with that request.’” This helps prevent security issues.

- Example Prompts: When building automations, include one or two examples within the prompt (few-shot) that show the answer format and style you want.
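
Several of these patterns (structured JSON output, a refusal clause, and few-shot examples) can be combined when assembling a prompt. The sketch below builds a message list in the role/content shape used by many chat-style LLM APIs; that shape, the `build_messages` name, and the example emails are assumptions for illustration, so adapt them to your provider.

```python
import json
from typing import Dict, List

SYSTEM_PROMPT = (
    "You are an email triage assistant. "
    "Reply with a single JSON object with the keys 'department' and 'priority'. "
    "If asked to do something that is out of scope or unethical, respond with: "
    "'I'm sorry, I cannot comply with that request.'"
)

# Hypothetical few-shot examples showing the exact answer format we expect.
FEW_SHOT = [
    ("My invoice shows a double charge.", {"department": "billing", "priority": "high"}),
    ("Could you add dark mode?", {"department": "product", "priority": "low"}),
]


def build_messages(email_text: str) -> List[Dict[str, str]]:
    """Assemble system instructions, few-shot pairs, and the new input."""
    messages = [{"role": "system", "content": SYSTEM_PROMPT}]
    for user_text, expected in FEW_SHOT:
        messages.append({"role": "user", "content": user_text})
        # Serialize examples with json.dumps so they are always valid JSON.
        messages.append({"role": "assistant", "content": json.dumps(expected)})
    messages.append({"role": "user", "content": email_text})
    return messages
```

Generating the example answers with `json.dumps` rather than typing them by hand keeps the few-shot examples consistent with the format your parser expects.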

Reliability cheat sheet (timeouts, retries, idempotency, fallbacks)

- Timeouts: Don’t allow an LLM call to hang indefinitely. Set a timeout (e.g., 30s) on your API requests.

- Retries: If an API call fails, retry with exponential backoff. Typically, 2–3 tries should be safe. If it’s a critical flow, alert the user after max retries.

- Idempotency Key: Produce a unique key for each “intent” (e.g., hash of input text). Save it with your action log. On retries or duplicates, skip performing the same side-effect twice.

- Dead-Letter Queue (DLQ): If a particular step keeps failing for any reason (e.g., bad/malformed data from a webhook), move the message to a DLQ (list, email, etc.) for later analysis, instead of blocking everything.

- Fallback Plan: If the LLM output fails validation (e.g., a required field is missing), you should provide a fallback option. This can be as simple as asking the model to regenerate its response with added instructions: “The output was invalid, please retry or default to priority: Low.”

- Human Escalation: In cases where automatic recovery fails, there should always be an escape hatch alerting a human via email/Slack.
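
Two of these patterns, retries with exponential backoff and idempotency keys, can be sketched with the standard library alone. The function names (`retry_with_backoff`, `idempotency_key`, `run_once`) and the in-memory `completed` dictionary are assumptions for the example; a real deployment would persist the keys in a database or rely on the idempotency features of the target API.

```python
import hashlib
import random
import time
from typing import Any, Callable, Dict


def retry_with_backoff(fn: Callable[[], Any], max_attempts: int = 3,
                       base_delay: float = 0.5,
                       sleep: Callable[[float], None] = time.sleep) -> Any:
    """Call fn, waiting base_delay, 2x, 4x... (plus jitter) between failures."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except Exception:  # in real code, catch only transient errors
            if attempt == max_attempts:
                raise  # let the caller escalate to a DLQ or a human
            delay = base_delay * 2 ** (attempt - 1)
            sleep(delay + random.uniform(0, delay * 0.1))


def idempotency_key(intent: str) -> str:
    """Derive a stable key from the text describing the intended action."""
    return hashlib.sha256(intent.encode("utf-8")).hexdigest()


def run_once(intent: str, side_effect: Callable[[], Any],
             completed: Dict[str, Any]) -> Any:
    """Perform the side effect only if this intent has not already succeeded."""
    key = idempotency_key(intent)
    if key in completed:
        return completed[key]  # duplicate or retry: reuse the earlier result
    result = retry_with_backoff(side_effect)
    completed[key] = result
    return result
```

The `sleep` parameter exists so tests can replace the real delay with a no-op.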

Security cheat sheet (scopes, secrets, PII redaction)

- Least Privilege Scopes: Use API tokens with minimal access. For example, you should only give read/write scope to the spreadsheets your Zapier credential needs, not entire-account admin access.

- Secrets Management: Use secret management tools like a vault or environment variables to store API keys/passwords. Never log them or expose them in prompts. Regularly rotate keys.

- PII Redaction: Strip or mask personally identifiable information before sending to the model. Replace names, emails, etc. with placeholders. AWS provides special PII entity detection filters you can apply to input/output.

- Encrypted Storage: If you are storing logs or other data, ensure sensitive fields are encrypted while at rest.

- Audit & Access Logs: Monitor and log who is deploying automations and using your keys (so you know if a credential has been compromised).
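
For the secrets-management item, a minimal sketch might read keys from environment variables and scrub them from log output. The `load_secret` helper and the `RedactSecrets` filter are hypothetical names; the filter is built on the standard `logging.Filter` class and is a safety net, not a substitute for keeping secrets out of log calls in the first place.

```python
import logging
import os
from typing import List


def load_secret(name: str) -> str:
    """Read a secret from the environment and fail fast if it is missing."""
    value = os.environ.get(name)
    if not value:
        raise RuntimeError(f"Missing required secret: {name}")
    return value


class RedactSecrets(logging.Filter):
    """Replace known secret values in log messages before they are emitted."""

    def __init__(self, secrets: List[str]) -> None:
        super().__init__()
        self._secrets = [s for s in secrets if s]

    def filter(self, record: logging.LogRecord) -> bool:
        message = record.getMessage()  # message with its arguments applied
        for secret in self._secrets:
            message = message.replace(secret, "[REDACTED]")
        # Store the scrubbed text and drop the args so it is not re-formatted.
        record.msg, record.args = message, None
        return True  # keep the record; only its content was changed
```

You would attach the filter to your handler or logger with `addFilter(RedactSecrets([...]))`, passing the secret values loaded at startup.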


Troubleshooting & scaling

The table below covers the most useful cost controls and risk controls you can leverage to keep your AI automations affordable & reliable at scale.

| Category | Technique | Practical example / what to do |
|---|---|---|
| Reduce cost | Batching | Combine similar items into one LLM call (e.g., summarize 5 docs in one prompt instead of 5 separate calls). |
| Reduce cost | Caching | Store recent LLM outputs/embeddings so repeat queries don’t cost tokens (e.g., cache a company lookup if multiple leads share the same company name; cache common boilerplate like footer text). |
| Reduce cost | Smaller models | Use cheaper models for routine work and reserve stronger models for edge cases (e.g., draft generic replies with a lower-cost model; use a higher-end model only when needed). |
| Reduce cost | Context pruning | Keep prompts lean: include only relevant email sections/history (e.g., strip quoted threads, keep key paragraphs + metadata). |
| Reduce risk | Automated evaluations | Run a recurring test suite of inputs and compare outputs to expected fields (e.g., validate JSON keys/values; use tools like LangSmith or a simple script). |
| Reduce risk | Human-in-the-loop spot checks | Review a sample of runs daily/weekly for correctness, tone, and routing accuracy, especially for high-impact workflows. |
| Reduce risk | Monitoring + alerts | Track success rate, latency, token usage, and error rate; alert on anomalies (e.g., sudden spike in tokens or failures). |
| Reduce risk | Rollback plan + versioning | Keep old prompts/flows so you can revert quickly (e.g., store prompt templates in Git; use workflow versioning where available). |
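
Two rows of this table, caching and automated evaluations, lend themselves to a short sketch. `CachedLLM` and `run_regression` are hypothetical helpers written for this example; the cache is an in-memory dictionary keyed by a hash of the prompt, which you would likely swap for a shared store with expiry in production.

```python
import hashlib
from typing import Callable, Dict, List, Tuple


class CachedLLM:
    """Wrap a model call so identical prompts are only paid for once."""

    def __init__(self, call_llm: Callable[[str], str]) -> None:
        self._call_llm = call_llm
        self._cache: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    def __call__(self, prompt: str) -> str:
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        output = self._call_llm(prompt)
        self._cache[key] = output
        return output


def run_regression(classify: Callable[[str], str],
                   cases: List[Tuple[str, str]]) -> List[Tuple[str, str, str]]:
    """Return (input, expected, actual) for every case that no longer matches."""
    failures = []
    for text, expected in cases:
        actual = classify(text)
        if actual != expected:
            failures.append((text, expected, actual))
    return failures
```

Running `run_regression` against a fixed set of cases after every prompt or model change gives an early signal that behavior has drifted, and the hit/miss counters feed directly into cost monitoring.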

FAQs

1. What’s the difference between AI workflow automation and AI agents? AI workflows follow a fixed sequence of predefined steps, making them predictable and easy to debug. AI agents, on the other hand, dynamically decide what actions to take based on the input and context. This flexibility makes agents more powerful but also less predictable. Because of that, agents require stronger guardrails, monitoring, and validation.

2. Do I need coding to build AI automations? You don’t necessarily need coding skills to build AI automations. No-code and low-code tools like Zapier, Make, and n8n allow you to create workflows visually. However, coding becomes useful when you need custom logic, better error handling, or want to self-host solutions. It also helps improve performance and reliability at scale.

3. When should I use a workflow instead of an agent? You should use a workflow when your process is clearly defined, and all possible paths can be mapped in advance. Workflows are ideal for repetitive, structured tasks where consistency matters. Agents are better suited for scenarios where decisions depend on changing inputs or context. If you don’t need dynamic reasoning, workflows are usually more efficient and reliable.

4. How do I prevent hallucinations or incorrect tool actions? To reduce hallucinations, enforce structured outputs such as JSON schemas to guide the model’s responses. Validate all outputs before executing any action, especially when tools or APIs are involved. Adding approval steps for high-risk operations can further improve safety. Logging tool usage and implementing retries or idempotency also help maintain reliability.

5. How can I control costs as usage scales? You can control costs by batching requests and caching frequent responses to avoid redundant processing. Reducing prompt size and removing unnecessary context also helps lower token usage. Use smaller, cheaper models for routine tasks and reserve larger models for complex reasoning. Monitoring usage with alerts and setting budget limits ensures you stay within cost constraints.

Conclusion

AI automation is most valuable when you blend deterministic workflows (for predictable steps) with AI agents (for messy inputs and judgment calls) and retain control with schemas, approvals, budgets, and logs. Build “workflow-first” with a single fast-win automation like email triage, then iteratively add agent loops only where variability or reasoning is genuinely valuable. The right architecture (trigger → preprocess → LLM → tools → postprocess → store/log), together with the cheat sheets for prompts, reliability, and security, helps you scale from prototypes to regulated production systems that remain auditable, cost-effective, and secure.


About the author(s)

I am a skilled AI consultant and technical writer with over four years of experience. I have a master’s degree in AI and have written innovative articles that provide developers and researchers with actionable insights. As a thought leader, I specialize in simplifying complex AI concepts through practical content, positioning myself as a trusted voice in the tech community.

With a strong background in data science and over six years of experience, I am passionate about creating in-depth content on technologies. Currently focused on AI, machine learning, and GPU computing, working on topics ranging from deep learning frameworks to optimizing GPU-based workloads.
