AI tools have become embedded across business operations, from chatbots handling customer service to automated content generation. But IBM’s 2025 breach report revealed a troubling gap between adoption and security: one in five organizations experienced breaches through “shadow AI.” This includes employees pasting sensitive source code, meeting notes, and customer data into unauthorized tools like ChatGPT—adding an average of $670,000 to breach costs, with 97% of AI-breached organizations lacking proper access controls.

These incidents underscore that privacy risks aren’t hypothetical—they’re actively being exploited as workers chase productivity gains without understanding the exposure they’re creating. The solution isn’t to abandon AI technology altogether but to implement strong guardrails: access controls, prompt filtering, approved enterprise AI tools, and governance frameworks. Companies implementing AI business tools or developing their own AI solutions must balance protecting sensitive information with maximizing the technology’s capabilities—especially when operating in regulated industries like healthcare or finance with strict compliance requirements. Let’s explore AI and privacy, examining the risks, challenges, and strategies for mitigation that your business should consider.

Key takeaways:

-

AI privacy is the protection of personal information as it moves through AI systems—requiring organizations to balance innovation with safeguards that prevent these tools from misusing or exposing sensitive data.

-

Implementing robust governance—including “privacy by design,” ethical guidelines, and regular audits—builds customer trust and minimizes the legal and reputational risks associated with processing vast amounts of personal data.

-

Effective risk mitigation requires layering technical controls across the entire AI lifecycle, such as data anonymization, AI Security Posture Management (AI-SPM), and strict protocols for handling user inputs during the inference stage.

-

Staying compliant with an evolving regulatory landscape, including the EU AI Act, Canada’s Bill C-27, and California’s SB 53, is critical for maintaining operational resilience and avoiding substantial penalties.

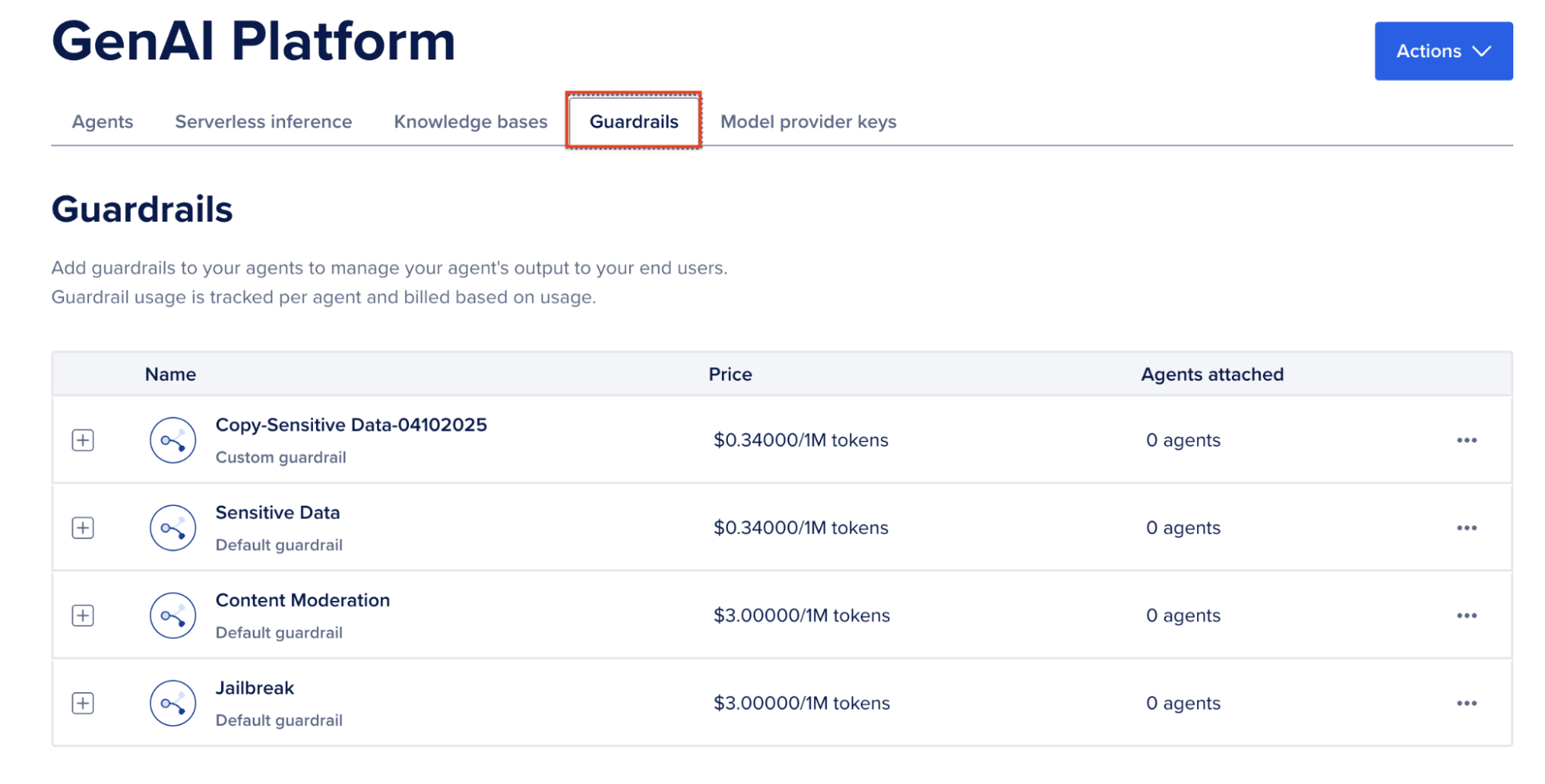

Transform your AI development with DigitalOcean GradientAI Platform. Build custom, fully-managed agents backed by leading LLMs from Anthropic, DeepSeek, Meta, Mistral, and OpenAI. From serverless inference to multi-agent workflows, integrate powerful AI capabilities into your applications in hours with RAG workflows, function calling, guardrails, and embeddable chatbots.

Get started with DigitalOcean GradientAI Platform and build industry-changing AI solutions without managing complex infrastructure.**

What is AI privacy?

AI privacy is the set of practices and concerns centered around the ethical collection, storage, and use of personal information by AI systems. This focus addresses the critical need to protect individual data rights and maintain confidential AI computing as AI algorithms process and learn from vast quantities of personal data. Ensuring AI privacy involves navigating the balance between technological innovation and the preservation of personal privacy in an era where data is highly valuable.

Because AI regulation currently lags behind technological strides, organizations face ambiguity that often delays the implementation of AI in production workflows. To mitigate future risks, companies should anticipate upcoming regulatory shifts by proactively developing their own sensible AI privacy guidelines.

AI data collection methods and privacy

AI systems rely on a wealth of data to improve it’s algorithms and outputs, employing different collection methods that have the potential to pose privacy risks. When the techniques used to gather this data are not disclosed transparently to the individuals (e.g. customers) from whom the data is being collected, this can lead to breaches of AI surveillance privacy that hurt a brand’s reputation.

In developing your company’s approach, consider the privacy risks of these popular methods of AI data collection:

-

Web scraping: AI systems can accumulate vast amounts of information by automatically harvesting data from websites. While some of this data is public, web scraping can also capture personal details—such as names, contact info, or social media history—without explicit user consent. Recent copyright lawsuits from publishers like The New York Times against OpenAI and Perplexity highlight the growing legal and ethical scrutiny surrounding data usage without permission.

-

Biometric data: AI systems that use facial recognition, fingerprinting, and other biometric technologies can intrude into personal privacy, collecting sensitive data that is unique to individuals and can cause harm if malicious actors gain access to it due to poor data handling safeguards.

-

IoT (Internet of Things) devices: Internet-enabled devices provide AI systems with real-time data from our homes, workplaces, and public spaces. This data can reveal the intimate details of users’ daily lives, creating a continuous stream of information about their habits and behaviors.

-

Social media monitoring: AI algorithms can analyze social media activity, capturing demographic information, preferences, and even emotional states, often without explicit user awareness or consent.

Beyond collection methods, the way data is processed and stored within an AI’s architecture introduces specific technical risks:

-

Training data: This is the foundational dataset used to “teach” the model. Privacy risks arise if this data contains personally identifiable information that hasn’t been properly anonymized, as it becomes a permanent part of the model’s “knowledge.”

-

Model memorization: This occurs when a model inadvertently “hard-codes” specific snippets of its training data rather than just learning general patterns. This can lead to the model “regurgitating” sensitive information—like a credit card number or a private address—verbatim when prompted.

-

Embeddings: To “understand” data, AI converts it into numerical vectors called embeddings. While these look like random numbers, they are mathematically dense representations of the original input. Recent research shows that these vectors can often be “inverted” to reconstruct the original sensitive text or images.

-

Inference: This is the stage where the model is actually used to make a prediction or generate an output based on new data. Privacy concerns here focus on “input privacy”—ensuring the data a user types into a prompt isn’t stored or used to further train the model without their knowledge.

The privacy implications of these methods are far-reaching and can lead to unauthorized surveillance, identity theft, and a loss of anonymity. As AI technologies become more integrated into everyday life, data collection must remain transparent and secure, while individuals retain control over their personal information.

LLM poisoning happens when attackers inject malicious or corrupted data during training and fine-tuning LLM models. Learn more about how this can create several AI and privacy risks:

How AI systems create privacy risk

According to 2025 PitchBook data via Bloomberg, AI startups are pulling in more than 50% of all venture capitalist investments. This wave of AI innovation has brought forth unprecedented capabilities in data processing, analysis, and predictive modeling. However, AI introduces privacy challenges that are complex and multifaceted, considerably different from those posed by traditional data processing. These concerns shape customer trust and have legal and ethical implications:

-

Data volume and variety: AI systems can digest and analyze exponentially more data than traditional systems, increasing the risk and scale of personal data exposure.

-

Predictive analytics: Through pattern recognition and predictive modeling, AI can infer personal behaviors and preferences, often without the individual’s explicit knowledge or consent.

-

Data security: The large data sets AI requires to function effectively are attractive targets for cyber threats, amplifying the risk of breaches that could compromise personal privacy.

-

Lack of transparency in AI algorithms: The “black box” obscurity of AI systems raises concerns for businesses, users, and regulators, as they don’t have enough information to fully comprehend how AI algorithms arrive at certain conclusions or actions. Without this transparency, the way businesses use AI can risk eroding customer confidence, potentially breaching regulatory requirements.

-

Embedded AI bias: Without careful oversight, AI can perpetuate existing biases from the data it’s fed, leading to discriminatory outcomes and privacy violations—an issue explored in-depth in AI and ML books like Algorithms of Oppression. These biases can perpetuate and even amplify existing social inequalities, affecting groups based on race, gender, or socioeconomic status. For businesses, this undermines fair practice and can lead to a loss of trust and legal consequences.

-

Copyright and intellectual property issues with AI: AI systems often require large datasets for training, which can lead to using copyrighted materials without authorization. This infringes on copyright laws and raises privacy concerns when the content includes personal data. Businesses must navigate these AI privacy challenges carefully to avoid litigation and the potential fallout from using third-party intellectual property without consent.

-

Unauthorized use of personal data: Incorporating personal data into AI models without explicit consent poses significant risks, including legal repercussions under data protection laws—like GDPR and the developing EU AI Act—and potential breaches of ethical standards. Unauthorized use of this data can result in privacy violations, substantial fines, and damage to a company’s reputation.

These challenges underscore the necessity for robust AI data protection measures. Balancing the benefits of AI with the right to privacy requires vigilant design, implementation, and governance for AI personal data protection.

AI bias isn’t just a fairness issue—it’s a privacy risk. Biased data and model behavior can surface sensitive attributes or amplify exposure in ways users never consented to. Explore how AI bias develops and why it matters.

AI privacy risks and generative AI

Unlike traditional data processing, which typically involves structured databases, generative AI creates new content based on patterns learned during training. This introduces specific risks that go beyond standard collection concerns:

-

Training data leakage and memorization: Large Language Models (LLMs) can inadvertently “memorize” sensitive strings of text—such as private addresses or medical identifiers—from their training sets. These can then be unintentionally revealed to other users through specific prompts.

-

Sensitive attribute inference: Generative AI can analyze seemingly anonymous data to predict sensitive, unstated attributes about an individual, such as their political leanings, health status, or religious beliefs, creating a “derived” privacy breach.

-

Prompt injection and data extraction: Malicious actors can use “jailbreaking” or prompt injection techniques to bypass safety filters, tricking a model into revealing the data it was trained on or the private system instructions it was given.

-

Synthetic misinformation (deepfakes): The ability of generative AI to create realistic images, audio, and text enables the creation of highly personal misinformation. This risks an individual’s “identity privacy” by generating unauthorized representations of their likeness or voice.

Developing an AI data privacy framework: strategies for mitigating AI privacy risks

According to Deloitte’s 2024 State of Ethics report, only 27% of professionals say their organization has clear ethical standards for generative AI. The implication is clear: to safeguard against the invasive potential of AI, businesses must proactively adopt strategies that ensure privacy is not compromised. Mitigating artificial intelligence data privacy risks involves combining technical solutions, ethical guidelines, and data governance policies.

Build with AI in a way that’s both powerful and privacy-aware: DigitalOcean’s Gradient platform makes it easy to build AI apps with secure, privacy-focused foundations—like this guide on creating a compliant chatbot.

Embed privacy in AI design: The foundation

To mitigate AI privacy risks, integrate privacy considerations at the initial stages of AI system development. This involves adopting “privacy by design” (privacy-first AI) principles, ensuring that data protection is a foundational component of the technology. To translate this into actionable guidance, organizations should map privacy controls directly to the AI lifecycle:

-

Data collection: Implement strict data minimization through feature selection—identifying and using only the necessary variables for model training while excluding irrelevant personal identifiers.

-

Training: Use techniques like federated learning to allow for decentralized, local model training that keeps data on the user’s device, along with encryption for data at rest and in transit.

-

Deployment: Establish automated guardrails and regular audits to ensure ongoing compliance and prevent the model from leaking sensitive information during live inference.

Strong AI data governance starts with the right foundation. Through our partnership with Persistent, DigitalOcean’s Gradient™ AI Agentic Cloud supports enterprise-ready AI focused on security, compliance, and cost efficiency. Learn more about the DigitalOcean and Persistent partnership.

Build an AI security posture management approach: The infrastructure

As AI environments grow more complex, organizations should implement AI-SPM to maintain a continuous, bird’s-eye view of their AI assets. This approach involves creating a comprehensive inventory of all AI models, datasets, and third-party integrations to identify “shadow AI”—unauthorized tools used by employees that may leak sensitive data. An effective AI-SPM strategy focuses on identifying vulnerabilities within the AI stack, such as misconfigured vector databases or insecure API connections, and remediating them before they can be exploited. By shifting from static security checks to this continuous monitoring model, businesses can ensure that their AI data privacy and security controls evolve alongside each other, keeping sensitive data protected across the entire lifecycle.

Anonymize and aggregate data: The data preparation

Using anonymization techniques—like differential privacy, k-anonymity, pseudonymization, and synthetic data—can shield individual identities by stripping away identifiable information from the data sets AI systems use. This process involves altering, encrypting, or removing personal identifiers, ensuring that the data cannot be traced back to an individual. In tandem with anonymization, data aggregation combines individual data points into larger datasets, which can be analyzed without revealing personal details. These strategies reduce the risk of privacy breaches by limiting data association with specific individuals during AI analysis.

Establish protocols for handling user inputs: The live interaction

For AI systems in production, privacy depends largely on how user prompts are managed during the inference stage. Organizations must clearly document and govern the lifecycle of these inputs, specifically regarding prompt logging, feedback loops, and fine-tuning. While logging is often necessary for technical troubleshooting and security monitoring, it should be treated as temporary; prompts should not be stored longer than required for these immediate operational needs.

Clearly defining these boundaries prevents “data leakage” where sensitive information from one user’s prompt could inadvertently influence the model’s future outputs for another user.

Limit data retention: The cleanup

Implementing strict data retention policies minimizes the risks of privacy in artificial intelligence. Setting clear limits on how long data can be stored prevents unnecessary long-term accumulation of personal information, reducing the likelihood of it being exposed in a breach. To ensure limits are effective, decouple raw training records from the AI system once a model has been successfully validated, as the model’s utility resides in its mathematical weights rather than the original datasets. Retention should be further managed by segmenting prompt data and adopting a policy of prompt ephemerality, where user inputs are utilized for immediate inference and then promptly deleted rather than being stored for future fine-tuning.

Operationalizing AI privacy standards to establish governance and accountability

To bridge the gap between governance and execution, organizations must move from high-level policy to actionable data practices. By prioritizing transparency, staying ahead of global regulatory shifts, and fostering an internal culture of ethics, businesses can ensure their AI initiatives remain both compliant and trustworthy.

Increase transparency and user control

Improving transparency around AI system data practices builds user trust and accountability. Companies collecting data have the responsibility to communicate which data types are being collected, how AI algorithms process them, and for what purposes. Providing users with control over their data (i.e. options to view, edit, or delete information) empowers individuals to take control of their digital footprint. Offering useful controls aligns with ethical standards and ensures AI privacy compliance with evolving data protection regulations, which increasingly mandate user consent and governance.

From an organizational standpoint, creating and enforcing policies that automatically opt-out of data being used to train algorithms is a solid step towards enforcing AI privacy standards.

Transparency requires more than documentation—it requires controls. LLM guardrails help enforce AI privacy policies by limiting how user data is accessed, processed, and reused. See how LLM guardrails enable responsible AI governance.

Understand the impact of AI data privacy regulations

Understanding the implications of GDPR and AI, and similar regulations like the EU AI Act, mitigates AI privacy risks, as these laws set stringent standards for data protection and grant individuals significant control over their personal information. These regulations mandate that organizations must be transparent about their AI processing activities and ensure that individuals’ data rights are upheld, including the right to an explanation of algorithmic decisions.

Companies must implement measures that guarantee the accuracy, fairness, and accountability of their AI systems, particularly when decisions have a legal or significant effect on individuals. Failure to adhere to such regulatory standards can lead to substantial penalties.

Get familiar with the following regulations and guidelines, and how they impact your company’s operations:

-

Canada’s proposed Bill C-27 (includes the Artificial Intelligence and Data Act)

-

U.S. Federal Trade Commission (FTC) guidance on using artificial intelligence and algorithms

Cultivate a culture of ethical AI use

To reduce AI privacy risks, establish ethical guidelines for AI use that prioritize data protection and respect for intellectual property rights. Provide regular training to ensure that all your employees understand these guidelines and the importance of upholding them in their day-to-day work with AI technologies. Have transparent policies that govern data collection, storage, and use of personal and sensitive information. Finally, fostering an environment where ethical concerns can be openly discussed and addressed will help maintain a vigilant stance against potential privacy infringements. One way to do this is by hiring consultants or designating a committee to help establish your company’s AI ethics policy.

The future will hinge on a collaborative approach, where continuous dialogue among technologists, businesses, regulators, and the public shapes AI development to uphold privacy rights while fostering technological advancement.

AI and privacy FAQs

What privacy risks does AI introduce? AI introduces privacy risks, including unauthorized surveillance, identity theft, and the loss of individual anonymity. These systems may increase data exposure through massive processing volumes and use predictive analytics to infer personal behaviors without explicit consent. Additionally, generative AI specifically risks “memorizing” and inadvertently revealing sensitive training data, such as private addresses or medical identifiers.

How does AI handle personal data? AI handles personal data by employing varied collection methods—such as web scraping, biometric sensors, and IoT devices—to analyze demographic information, preferences, and even emotional states. During the training phase, this data is often converted into numerical vectors called embeddings, which are dense mathematical representations of the original input. In production, systems process user inputs during the inference stage to generate predictions or content, which may then be logged or used for further model fine-tuning.

Are AI systems subject to GDPR? Yes, AI systems are subject to the GDPR and similar regulations, which mandate transparency in processing activities and uphold individual data rights, such as the right to an explanation for algorithmic decisions. These laws set stringent standards for data protection and can result in substantial penalties for organizations that fail to ensure the accuracy, fairness, and accountability of their AI systems. The developing EU AI Act further expands these requirements by introducing specific risk assessments and governance strategies for high-risk applications.

What is privacy by design in AI? Privacy by design in AI is the practice of integrating data protection considerations as a foundational component at the initial stages of system development rather than as an afterthought. This involves mapping specific controls to the entire AI lifecycle, such as using feature selection during data collection to minimize processed variables and employing federated learning during training to keep data localized. The goal is to build models with inherent safeguards that limit unnecessary data exposure and provide robust AI privacy and security from the outset.

How can organizations protect data used in AI models? Organizations can protect data by implementing technical safeguards like encryption for data at rest and in transit, alongside anonymization techniques such as differential privacy and synthetic data. They should also adopt AI Security Posture Management (AI-SPM) to maintain a continuous inventory of AI assets and identify vulnerabilities like “shadow AI” or insecure API connections. Finally, establishing strict operational policies—including prompt ephemerality and decoupling raw training records from validated models—minimizes the long-term accumulation of sensitive information.

Build with DigitalOcean’s Gradient Platform

DigitalOcean Gradient Platform makes it easier to build and deploy AI agents without managing complex infrastructure. Build custom, fully-managed agents backed by the world’s most powerful LLMs from Anthropic, DeepSeek, Meta, Mistral, and OpenAI. From customer-facing chatbots to complex, multi-agent workflows, integrate agentic AI with your application in hours with transparent, usage-based billing and no infrastructure management required.

Key features:

-

Serverless inference with leading LLMs and simple API integration

-

RAG workflows with knowledge bases for fine-tuned retrieval

-

Function calling capabilities for real-time information access

-

Multi-agent crews and agent routing for complex tasks

-

Guardrails for content moderation and sensitive data detection

-

Embeddable chatbot snippets for easy website integration

-

Versioning and rollback capabilities for safe experimentation

Get started with DigitalOcean Gradient Platform for access to everything you need to build, run, and manage the next big thing.

- Table of contents

Start building today

From GPU-powered inference and Kubernetes to managed databases and storage, get everything you need to build, scale, and deploy intelligent applications.