
Introduction

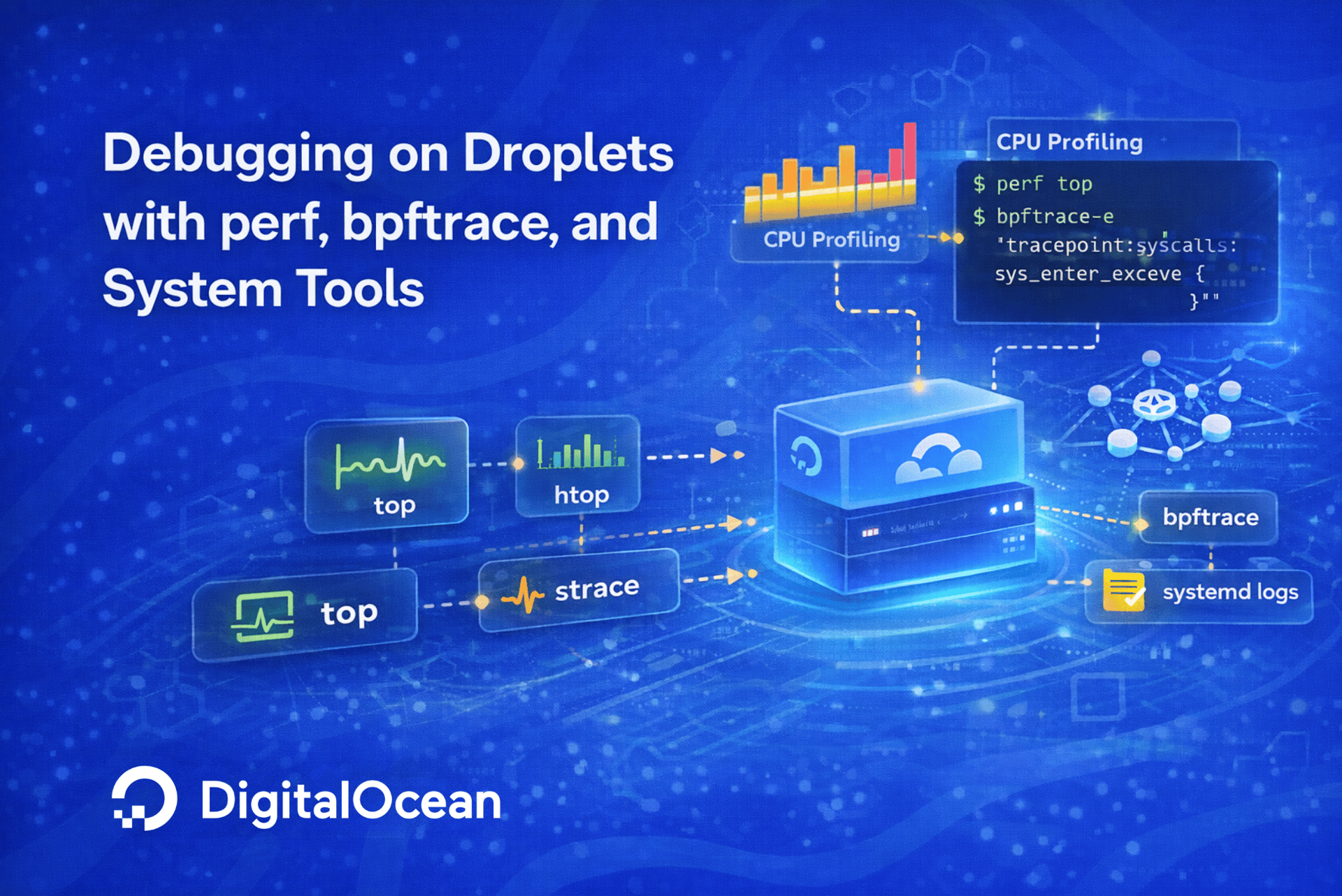

When a Linux server slows down under load, the challenge is rarely a lack of resources. More often, the bottleneck hides inside a specific function, a leaky allocation, or an I/O path that no one has instrumented. General monitoring dashboards tell you that something is wrong. Performance debugging tools tell you where and why.

This tutorial walks you through a hands-on performance debugging lab on a DigitalOcean Premium CPU-Optimized Droplet. You will deploy an intentionally slow application, then use four Linux performance tools to find and fix the bottlenecks:

- systemd-analyze for boot and service startup timing

- perf for CPU profiling and flame graph generation

- bpftrace for kernel-level and I/O tracing with one-liner scripts

- valgrind for memory leak detection and heap profiling

After each round of profiling, you will apply targeted fixes and re-measure. The final section maps your findings to DigitalOcean product decisions, such as choosing a larger Droplet, adding Block Storage, switching to Managed Databases, or moving to DigitalOcean Kubernetes (DOKS).

Key Takeaways

- Profile before you scale. Tools like perf and bpftrace pinpoint the exact function or system call causing a bottleneck, so you can fix code before spending money on bigger infrastructure.

- systemd-analyze reveals hidden startup costs. Slow-booting services add latency every time you deploy or restart. Identifying and fixing these saves time in CI/CD pipelines and during incident recovery.

- bpftrace one-liners give kernel-level visibility without recompiling. You can trace disk I/O latency, system call frequency, and TCP connection rates in production with minimal overhead.

- Memory leaks compound over time. Valgrind’s Massif tool shows exactly which functions allocate memory that is never freed, preventing out-of-memory crashes before they happen.

- Profiling data drives smarter architecture decisions. Whether the answer is vertical scaling, Block Storage volumes, Managed Databases, or container orchestration with DOKS, the data from these tools tells you exactly what to invest in.

Prerequisites

Before you begin, make sure you have the following:

- A DigitalOcean account. If you do not have one, sign up and get $200 in free credit.

- A Premium CPU-Optimized Droplet (4 vCPUs, 8 GB RAM recommended) running Ubuntu 24.04 LTS. Follow the Initial Server Setup with Ubuntu tutorial to configure a non-root user with sudo privileges.

- Familiarity with the Linux command line. If you need a refresher, check the Linux Command Line Primer.

- DigitalOcean Monitoring enabled on the Droplet for baseline resource visibility.

Step 1: Install Performance Debugging Tools

Connect to your Droplet over SSH and install the tools you will use throughout this lab.

sudo apt update && sudo apt install -y linux-tools-common linux-tools-$(uname -r) bpftrace valgrind gcc make

This installs:

| Package | Purpose |
|---|---|
| linux-tools-common and linux-tools-$(uname -r) | Provides the perf profiler matched to your running kernel |
| bpftrace | High-level tracing language built on eBPF for kernel and user-space probes |
| valgrind | Memory debugging and profiling suite (includes Massif for heap analysis) |
| gcc and make | Compiler toolchain to build the sample application |

Verify the installations:

perf --version

bpftrace --version

valgrind --version

You should see output similar to:

Output

perf version 6.8.12

bpftrace v0.20.2

valgrind-3.22.0

Each command should return a version string without errors. If perf reports a kernel mismatch, run uname -r and confirm that the installed linux-tools package version matches the kernel version it prints.

Step 2: Deploy an Intentionally Slow Application

To practice performance debugging, you need a workload with known bottlenecks. Create a small C program that simulates three common problems: CPU-bound computation, memory leaks, and excessive disk I/O.

First, create a project directory and move into it:

mkdir -p ~/perf-lab && cd ~/perf-lab

Next, open a new file called slow_app.c using vi:

vi slow_app.c

Press i to enter insert mode, then paste the following code into the file:

#include <stdio.h>

#include <stdlib.h>

#include <string.h>

#include <unistd.h>

#include <math.h>

#include <fcntl.h>

/* CPU-bound: inefficient prime check */

int is_prime_naive(int n) {

if (n < 2) return 0;

for (int i = 2; i < n; i++) {

if (n % i == 0) return 0;

}

return 1;

}

/* Memory leak: allocates without freeing */

void leaky_function(int iterations) {

for (int i = 0; i < iterations; i++) {

char *buf = malloc(4096);

if (buf) {

memset(buf, 'A', 4096);

/* intentionally never freed */

}

}

}

/* Disk I/O: synchronous writes with fsync */

void io_heavy(const char *path, int writes) {

int fd = open(path, O_WRONLY | O_CREAT | O_TRUNC, 0644);

if (fd < 0) { perror("open"); return; }

char data[4096];

memset(data, 'B', sizeof(data));

for (int i = 0; i < writes; i++) {

write(fd, data, sizeof(data));

fsync(fd);

}

close(fd);

}

int main() {

printf("Starting CPU-bound work...\n");

int count = 0;

for (int n = 2; n < 80000; n++) {

if (is_prime_naive(n)) count++;

}

printf("Found %d primes.\n", count);

printf("Starting memory allocations...\n");

leaky_function(5000);

printf("Starting I/O-heavy writes...\n");

io_heavy("/tmp/perf_lab_output.bin", 2000);

printf("Done.\n");

return 0;

}

When you are done, press Esc to exit insert mode, then type :wq and press Enter to save and close the file.

Compile with debug symbols, which perf and valgrind use to report source file names and line numbers:

gcc -g -O0 -o slow_app slow_app.c -lm

The -g flag includes debug symbols so profilers can map samples back to source lines. The -O0 flag disables compiler optimizations, keeping the code structure intact for easier analysis.

Run a quick test:

time ./slow_app

You should see output similar to:

Output

Starting CPU-bound work...

Found 7837 primes.

Starting memory allocations...

Starting I/O-heavy writes...

Done.

real 0m12.427s

user 0m1.372s

sys 0m0.420s

The real time gives your baseline. Note it down; you will compare it after applying optimizations.

Step 3: Measure Boot and Service Timing with systemd-analyze

Before diving into application profiling, check whether the Droplet itself has startup inefficiencies. Slow-booting services add latency to every deployment and recovery cycle.

Get the overall boot time:

systemd-analyze

You should see output similar to:

Output

Startup finished in 2.812s (kernel) + 1min 35.237s (userspace) = 1min 38.049s

graphical.target reached after 14.232s in userspace.

List the slowest services:

systemd-analyze blame | head -15

You should see output similar to:

Output

1min 16.867s cloud-final.service

31.390s apt-daily.service

21.825s apt-daily-upgrade.service

7.285s cloud-config.service

2.955s cloud-init.service

2.354s cloud-init-local.service

1.570s apparmor.service

1.402s pollinate.service

1.329s dev-vda1.device

1.042s apport.service

816ms grub-common.service

721ms man-db.service

720ms certbot.service

678ms ldconfig.service

673ms rsyslog.service

Check the critical chain for dependencies:

systemd-analyze critical-chain

You should see output similar to:

Output

The time when unit became active or started is printed after the "@" character.

The time the unit took to start is printed after the "+" character.

graphical.target @14.232s

└─multi-user.target @14.230s

└─apport.service @11.060s +1.042s

└─basic.target @11.053s

└─sockets.target @11.051s

└─lxd-installer.socket @11.019s +28ms

└─sysinit.target @10.971s

└─cloud-init.service @8.009s +2.955s

└─cloud-init-local.service @3.803s +2.354s

└─systemd-remount-fs.service @1.270s +43ms

└─systemd-fsck-root.service @1.104s +134ms

└─systemd-journald.socket @811ms

└─system.slice @624ms

└─-.slice @624ms

This shows which services block others from starting. If a non-essential service sits on the critical path, you can disable or defer it:

sudo systemctl disable <service name> --now

On DigitalOcean Droplets, cloud-init handles instance metadata and should not be disabled. Focus on services that are not required for your workload.

Step 4: Profile CPU Hot Paths with perf

The perf profiler samples the CPU at regular intervals and records which functions are running. This reveals exactly where your application spends the most CPU cycles.

Run the application under perf record to capture a profile:

sudo perf record -F 99 -g --call-graph dwarf -o perf.data ./slow_app

You should see output similar to:

Output

Starting CPU-bound work...

Found 7837 primes.

Starting memory allocations...

Starting I/O-heavy writes...

Done.

[ perf record: Woken up 6 times to write data ]

[ perf record: Captured and wrote 1.724 MB perf.data (210 samples) ]

| Flag | Purpose |
|---|---|
| -F 99 | Sample at 99 Hz (99 times per second) |
| -g | Capture call graphs for each sample |
| --call-graph dwarf | Use DWARF debug info for accurate stack unwinding |

This creates a perf.data file in the current directory.

View the profiling report:

sudo perf report --stdio --sort comm,dso,symbol -i perf.data | head -40

You will see output like:

Output

# Samples: 210 of event 'task-clock:ppp'

# Event count (approx.): 2121212100

#

# Children Self Command Shared Object Symbol IPC [IPC Coverage]

# ........ ........ ........ ................. ......................................... ....................

#

99.52% 0.00% slow_app slow_app [.] _start - -

|

---_start

__libc_start_main_impl (inlined)

__libc_start_call_main

main

|

|--63.81%--is_prime_naive

| |

| --1.43%--asm_common_interrupt

| common_interrupt

| irq_exit_rcu

| __irq_exit_rcu

| handle_softirqs

| net_rx_action

| __napi_poll

| virtnet_poll

| napi_complete_done

| netif_receive_skb_list_internal

| __netif_receive_skb_list_core

| ip_list_rcv

| ip_sublist_rcv

| ip_sublist_rcv_finish

| ip_local_deliver

| ip_local_deliver_finish

| ip_protocol_deliver_rcu

| tcp_v4_rcv

| tcp_v4_do_rcv

| tcp_rcv_established

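The report shows that is_prime_naive accounts for 63.81% of the samples, which makes it the CPU hot path to optimize. The network-stack frames listed beneath it (asm_common_interrupt through tcp_rcv_established) come from an interrupt that happened to arrive while the function was running; they are not called by your code.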

Generate a flame graph (optional)

Flame graphs provide a visual representation of where CPU time is spent. To generate one, first clone Brendan Gregg’s flame graph scripts:

git clone https://github.com/brendangregg/FlameGraph.git ~/FlameGraph

Then, from the ~/perf-lab directory where perf.data was created, run the pipeline to generate the SVG:

cd ~/perf-lab

sudo perf script -i perf.data | ~/FlameGraph/stackcollapse-perf.pl | ~/FlameGraph/flamegraph.pl > flamegraph.svg

The -i perf.data flag names the input file explicitly. perf script reads perf.data from the current directory by default, and the path given here is relative, so run the pipeline from ~/perf-lab (or pass an absolute path) to avoid a “No such file or directory” error.

To download flamegraph.svg to your local machine for viewing, run the following scp command from your local terminal (not the Droplet). Replace your_user with your server username and your_server_ip with the Droplet’s IP address:

scp your_user@your_server_ip:~/perf-lab/flamegraph.svg .

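This copies the file into your current local directory. Open flamegraph.svg in a browser. The width of each frame is proportional to the number of samples in which that function appeared, so wide frames along the top edge indicate the functions consuming the most CPU time.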

Step 5: Trace Kernel and I/O Events with bpftrace

While perf focuses on CPU profiling, bpftrace gives you programmable access to kernel tracepoints, letting you inspect I/O patterns, system call latency, and more without modifying your application.

Trace disk I/O latency distribution

Run this one-liner to see how long disk I/O requests take. It records a timestamp when each I/O request is issued and calculates the elapsed time when that request completes:

sudo bpftrace -e '

tracepoint:block:block_rq_issue { @start[args->sector] = nsecs; }

tracepoint:block:block_rq_complete /@start[args->sector]/ {

@usecs = hist((nsecs - @start[args->sector]) / 1000);

delete(@start[args->sector]);

}

'

Press Ctrl+C after 10 to 15 seconds. The output shows a histogram of I/O request completion times in microseconds. Spikes in higher buckets (above 10ms) suggest disk saturation.

This histogram displays the distribution of disk I/O request completion times, measured in microseconds, as captured by the bpftrace one-liner. Each bracketed range (e.g., [256, 512)) represents a latency bucket, and the corresponding bar (|@@@@...|) visually shows how many requests fell into that latency range. In the sample output below, the [256, 512) bucket holds 64 events, which makes it the largest single bucket, with [1K, 2K) close behind at 61. The K suffix stands for 1,024, so [1K, 2K) covers 1,024 to 2,047 microseconds.

Taller bars in lower-latency buckets show fast disk performance, whereas significant counts in higher-latency buckets (like [1K, 2K)) may point to occasional slower I/O. Use these distributions to identify whether your disk subsystem is experiencing sporadic or consistent latency spikes.

The raw output:

Attaching 2 probes...

^C

@start[0]: 5776850089440350

@usecs:

[256, 512) 64 |@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@|

[512, 1K) 25 |@@@@@@@@@@@@@@@@@@@@ |

[1K, 2K) 61 |@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@ |

[2K, 4K) 8 |@@@@@@ |

The above output shows that the majority of disk I/O operations completed in under 2 milliseconds, but a handful took longer, helping you assess the health and responsiveness of your storage layer.

Count system calls by type

To see which system calls your application makes most frequently, you can trace every syscall entry and filter it to only the slow_app process you built in Step 2. This version counts calls by syscall ID:

Open a new terminal and start the bpftrace trace:

sudo bpftrace -e 'tracepoint:raw_syscalls:sys_enter /comm == "slow_app"/ { @[args->id] = count(); }'

Open a second terminal (a new SSH session to the same Droplet) and run the application:

cd ~/perf-lab

./slow_app

After slow_app finishes, switch back to the first terminal and press Ctrl+C to stop the trace. You should see output similar to:

Output

Attaching 1 probe...

^C

@[21]: 1

@[218]: 1

@[302]: 1

@[158]: 1

@[318]: 1

@[273]: 1

@[0]: 1

@[11]: 1

@[231]: 1

@[334]: 1

@[17]: 2

@[257]: 3

@[10]: 3

@[5]: 3

@[3]: 3

@[9]: 8

@[12]: 155

@[74]: 2000

@[1]: 2005

Each line follows the format @[syscall_id]: call_count. The number in brackets is the Linux syscall number (defined in the kernel’s syscall table for x86_64), and the value after the colon is how many times slow_app invoked that syscall during the trace.

Here is what the most significant entries mean:

| Syscall ID | Name | Count | What it tells you |
|---|---|---|---|
| 1 | write | 2,005 | 2,000 writes of one 4 KB block each to /tmp/perf_lab_output.bin, plus 5 more that most likely flush the program's five printf lines to the terminal |
| 74 | fsync | 2,000 | Every single data write was followed by an fsync, forcing the kernel to flush data to disk each time. This is the primary I/O bottleneck |
| 12 | brk | 155 | The heap was expanded 155 times via brk to satisfy the malloc calls in leaky_function |
| 9 | mmap | 8 | Memory-mapped regions for loading shared libraries and allocating large memory blocks |
| 5 | fstat | 3 | File metadata lookups (file size, permissions) |
| 10 | mprotect | 3 | Setting memory region protection flags during library loading |
| 257 | openat | 3 | Opening files (the output file and shared libraries) |
| 3 | close | 3 | Closing file descriptors |
| 0 | read | 1 | A single read call during program startup |

The low-count syscalls (like 218/set_tid_address, 302/prlimit64, 334/rseq) are standard process initialization calls that the C runtime library makes before main() runs. They appear in every program and are not a performance concern.

In short, write and fsync dominate the syscall profile with roughly 2,000 calls each. The application calls fsync after every single 4 KB write, forcing the kernel to flush data to disk 2,000 times instead of once at the end. This pattern is the root cause of the I/O bottleneck you will fix in Step 7.

Trace fsync latency

To measure how long each fsync call takes, you can trace the fsync system call entry and exit and calculate the elapsed time:

sudo bpftrace -e '

tracepoint:syscalls:sys_enter_fsync { @start[tid] = nsecs; }

tracepoint:syscalls:sys_exit_fsync /@start[tid]/ {

@fsync_latency_us = hist((nsecs - @start[tid]) / 1000);

delete(@start[tid]);

}

'

Run ./slow_app in a second terminal while this trace is active, then press Ctrl+C after it finishes. You should see output similar to:

Output

Attaching 2 probes...

^C

@fsync_latency_us:

[2K, 4K) 162 |@@@@@@ |

[4K, 8K) 1218 |@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@|

[8K, 16K) 484 |@@@@@@@@@@@@@@@@@@@@ |

[16K, 32K) 96 |@@@@ |

[32K, 64K) 26 |@ |

[64K, 128K) 11 | |

[128K, 256K) 3 | |

This is a histogram of fsync latency measured in microseconds. Each row represents a latency bucket, and the @ bars show how many fsync calls fell into that range:

- [2K, 4K) means 2,048 to 4,095 microseconds (roughly 2 to 4 ms), because the K suffix stands for 1,024. 162 calls completed this quickly.

- [4K, 8K) is the tallest bar with 1,218 calls. This is where the majority of fsync calls landed, taking roughly 4 to 8 milliseconds each.

- [8K, 16K) captured 484 calls at roughly 8 to 16 ms, and the count drops off as latency increases.

- The tail entries at [64K, 128K) and [128K, 256K) represent occasional outliers where a single fsync took up to about a quarter of a second, likely caused by the kernel flushing a larger batch of dirty pages or competing with other I/O.

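Adding up all the rows gives exactly 2,000 fsync calls, which matches the syscall count from the earlier trace. A histogram only brackets each latency, so the total time blocked on fsync can be bounded rather than computed exactly: if every call sat at the lower edge of its bucket the total would be about 12.8 seconds, and at the upper edge about 25.6 seconds. Using bucket midpoints, the three largest buckets contribute approximately 1,218 x 6 ms + 484 x 12 ms + 162 x 3 ms, or about 13.6 seconds, and the tail adds roughly 5 seconds more, for a total of roughly 19 seconds. This trace comes from a separate run, so the figure need not match the Step 2 baseline, but either way nearly all of the wall-clock time is spent waiting on disk flushes.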

This confirms that the per-write fsync pattern is not just generating excess syscalls but is also adding seconds of disk wait time. Batching writes and calling fsync once at the end (as you will do in Step 7) eliminates nearly all of this overhead.

Step 6: Detect Memory Leaks with valgrind

Memory leaks cause a process to consume increasing amounts of RAM over time, eventually triggering the Linux OOM (Out-of-Memory) killer. Valgrind’s Memcheck tool detects leaks by tracking every allocation and free.

Run the application under Memcheck:

valgrind --leak-check=full --show-leak-kinds=all --track-origins=yes ./slow_app

The output reports leaked memory at the end:

Output

==2960525== Memcheck, a memory error detector

==2960525== Copyright (C) 2002-2022, and GNU GPL'd, by Julian Seward et al.

==2960525== Using Valgrind-3.22.0 and LibVEX; rerun with -h for copyright info

==2960525== Command: ./slow_app

==2960525==

Starting CPU-bound work...

Found 7837 primes.

Starting memory allocations...

Starting I/O-heavy writes...

Done.

==2960525==

==2960525== HEAP SUMMARY:

==2960525== in use at exit: 20,480,000 bytes in 5,000 blocks

==2960525== total heap usage: 5,001 allocs, 1 frees, 20,481,024 bytes allocated

==2960525==

==2960525== 20,480,000 bytes in 5,000 blocks are definitely lost in loss record 1 of 1

==2960525== at 0x4846828: malloc (in /usr/libexec/valgrind/vgpreload_memcheck-amd64-linux.so)

==2960525== by 0x1092D2: leaky_function (slow_app.c:20)

==2960525== by 0x109477: main (slow_app.c:50)

==2960525==

==2960525== LEAK SUMMARY:

==2960525== definitely lost: 20,480,000 bytes in 5,000 blocks

==2960525== indirectly lost: 0 bytes in 0 blocks

==2960525== possibly lost: 0 bytes in 0 blocks

==2960525== still reachable: 0 bytes in 0 blocks

==2960525== suppressed: 0 bytes in 0 blocks

==2960525==

Every line prefixed with ==2960525== is Valgrind’s instrumentation output (the number is the process ID). Here is what each section reveals:

HEAP SUMMARY shows the overall picture of dynamic memory:

- in use at exit: 20,480,000 bytes in 5,000 blocks tells you that when the program finished, 20 MB of heap memory across 5,000 separate allocations was still allocated and never returned to the system.

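- total heap usage: 5,001 allocs, 1 frees means the process made 5,001 heap allocations but freed only one. Of those, 5,000 are the malloc(4096) calls in leaky_function. The remaining 1,024-byte allocation (20,481,024 minus 20,480,000 bytes) is most likely the stdout buffer that the C library allocates for printf and releases at exit. The 5,000 missing free calls are the leak.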

The stack trace pinpoints exactly where the leaked memory was allocated:

- malloc was called inside leaky_function at line 20 of slow_app.c

- leaky_function was called from main at line 50

This matches the code: leaky_function is called once with 5,000 iterations, and each iteration calls malloc(4096) to allocate a 4 KB block without ever calling free. Over 5,000 iterations, that adds up to 5,000 x 4,096 = 20,480,000 bytes (roughly 20 MB).

LEAK SUMMARY categorizes the leaked memory:

- definitely lost: 20,480,000 bytes means the program no longer has any pointer to this memory. It cannot be freed or reused. This is the most severe leak category.

- indirectly lost, possibly lost, still reachable are all zero, confirming that every single leaked byte is a straightforward, unrecoverable leak with no ambiguity.

In a short-lived program like this demo, 20 MB is manageable. In a long-running production service, the same pattern would cause memory usage to grow continuously until the Linux OOM killer terminates the process, potentially causing downtime. The fix in Step 7 adds free() calls to eliminate this leak entirely.

Heap profiling with Massif

For a more detailed view of memory usage over time, use Valgrind’s Massif tool:

valgrind --tool=massif --time-unit=B ./slow_app

ms_print massif.out.*

Massif produces a timeline of heap usage, showing allocation peaks and which functions are responsible. This is especially useful for long-running services where memory grows gradually.

You should see output similar to:

Output

|

->00.01% (1,024B) in 1+ places, all below ms_print's threshold (01.00%)

--------------------------------------------------------------------------------

n time(B) total(B) useful-heap(B) extra-heap(B) stacks(B)

--------------------------------------------------------------------------------

70 17,262,456 17,262,456 17,228,800 33,656 0

71 17,459,448 17,459,448 17,425,408 34,040 0

72 17,656,440 17,656,440 17,622,016 34,424 0

73 17,853,432 17,853,432 17,818,624 34,808 0

74 18,050,424 18,050,424 18,015,232 35,192 0

75 18,247,416 18,247,416 18,211,840 35,576 0

76 18,444,408 18,444,408 18,408,448 35,960 0

77 18,641,400 18,641,400 18,605,056 36,344 0

78 18,838,392 18,838,392 18,801,664 36,728 0

79 19,035,384 19,035,384 18,998,272 37,112 0

99.81% (18,998,272B) (heap allocation functions) malloc/new/new[], --alloc-fns, etc.

->99.80% (18,997,248B) 0x1092D2: leaky_function (slow_app.c:20)

| ->99.80% (18,997,248B) 0x109477: main (slow_app.c:50)

|

->00.01% (1,024B) in 1+ places, all below ms_print's threshold (01.00%)

--------------------------------------------------------------------------------

n time(B) total(B) useful-heap(B) extra-heap(B) stacks(B)

--------------------------------------------------------------------------------

80 19,232,376 19,232,376 19,194,880 37,496 0

81 19,429,368 19,429,368 19,391,488 37,880 0

82 19,626,360 19,626,360 19,588,096 38,264 0

83 19,823,352 19,823,352 19,784,704 38,648 0

84 20,020,344 20,020,344 19,981,312 39,032 0

85 20,217,336 20,217,336 20,177,920 39,416 0

86 20,414,328 20,414,328 20,374,528 39,800 0

87 20,521,032 20,521,032 20,481,024 40,008 0

99.81% (20,481,024B) (heap allocation functions) malloc/new/new[], --alloc-fns, etc.

->99.80% (20,480,000B) 0x1092D2: leaky_function (slow_app.c:20)

| ->99.80% (20,480,000B) 0x109477: main (slow_app.c:50)

|

->00.00% (1,024B) in 1+ places, all below ms_print's threshold (01.00%)

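This excerpt shows the final snapshots of the heap timeline. The total climbs steadily and never drops, which is the signature of a leak. By the last snapshot, 99.80% of the heap (20,480,000 bytes) traces back to the malloc call in leaky_function at line 20 of slow_app.c. The main entry at line 50 is not a separate allocation; it is the call site that invoked leaky_function. The extra-heap(B) column, about 40 KB at the end, is the allocator's bookkeeping overhead for the live blocks: 40,008 bytes, or 8 bytes for each of the 5,001 blocks.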

Step 7: Apply Optimizations and Re-Profile

Now that you have identified three bottlenecks, apply targeted fixes.

Fix 1: Optimize the prime-checking algorithm

Create a new version of the program called slow_app_fixed that addresses all three bottlenecks: a faster prime check, memory that is freed after use, and a single fsync after all writes complete.

The new is_prime_fast function replaces the naive O(n) trial division with an O(sqrt(n)) approach. Write the full program to slow_app_fixed.c:

cat > slow_app_fixed.c << 'EOF'

#include <stdio.h>

#include <stdlib.h>

#include <string.h>

#include <unistd.h>

#include <math.h>

#include <fcntl.h>

/* Optimized: check divisibility only up to sqrt(n) */

int is_prime_fast(int n) {

if (n < 2) return 0;

if (n < 4) return 1;

if (n % 2 == 0 || n % 3 == 0) return 0;

for (int i = 5; i * i <= n; i += 6) {

if (n % i == 0 || n % (i + 2) == 0) return 0;

}

return 1;

}

/* Fixed: free allocated memory */

void fixed_function(int iterations) {

for (int i = 0; i < iterations; i++) {

char *buf = malloc(4096);

if (buf) {

memset(buf, 'A', 4096);

free(buf);

}

}

}

/* Optimized I/O: batch writes, single fsync at end */

void io_batched(const char *path, int writes) {

int fd = open(path, O_WRONLY | O_CREAT | O_TRUNC, 0644);

if (fd < 0) { perror("open"); return; }

char data[4096];

memset(data, 'B', sizeof(data));

for (int i = 0; i < writes; i++) {

write(fd, data, sizeof(data));

}

fsync(fd);

close(fd);

}

int main() {

printf("Starting CPU-bound work...\n");

int count = 0;

for (int n = 2; n < 80000; n++) {

if (is_prime_fast(n)) count++;

}

printf("Found %d primes.\n", count);

printf("Starting memory allocations...\n");

fixed_function(5000);

printf("Starting I/O-heavy writes...\n");

io_batched("/tmp/perf_lab_output.bin", 2000);

printf("Done.\n");

return 0;

}

EOF

Compile and benchmark:

gcc -g -O2 -o slow_app_fixed slow_app_fixed.c -lm

time ./slow_app_fixed

Expected result:

Output

Starting CPU-bound work...

Found 7837 primes.

Starting memory allocations...

Starting I/O-heavy writes...

Done.

real 0m0.181s

user 0m0.006s

sys 0m0.018s

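The optimized version runs roughly 69x faster than the original (12.427 s down to 0.181 s of wall-clock time). The prime count (7,837) remains the same, confirming correctness. Most of the wall-clock saving comes from the I/O fix: the baseline spent under 2 seconds on the CPU (user plus sys), so the bulk of its 12.4 seconds was time blocked on 2,000 fsync calls, which the fixed version replaces with one. The prime-checking fix accounts for the drop in user CPU time from 1.372 s to 0.006 s. Note that the fixed build is also compiled with -O2 rather than -O0, so the comparison combines the code fixes with compiler optimization.

The new prime check tests divisibility only up to the square root of each number, which reduces the work per number from O(n) to O(sqrt(n)) iterations.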

Verify the fixes

Re-run perf to confirm the CPU hot path is resolved:

sudo perf record -F 99 -g --call-graph dwarf ./slow_app_fixed

sudo perf report --stdio --sort comm,dso,symbol | head -20

You should see output similar to:

Output

# Total Lost Samples: 0

#

# Samples: 1 of event 'task-clock:ppp'

# Event count (approx.): 10101010

#

# Children Self Command Shared Object Symbol IPC [IPC Coverage]

# ........ ........ .............. ................. .................................. ....................

#

100.00% 100.00% slow_app_fixed [kernel.kallsyms] [k] mark_buffer_dirty - -

|

---_start

__libc_start_main_impl (inlined)

__libc_start_call_main

main

io_batched

__GI___libc_write

entry_SYSCALL_64_after_hwframe

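The fixed program finishes in well under a second and spends almost no time on the CPU, so a 99 Hz sampler captured only one sample here, which happened to land in the kernel's write path under io_batched. The key result is that is_prime_naive no longer appears in the profile. A single sample is not a meaningful distribution; to profile the fixed build further, raise the sampling frequency with -F or run a larger workload.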

Confirm zero memory leaks:

valgrind --leak-check=full ./slow_app_fixed

The expected output should include:

==2961119== Memcheck, a memory error detector

==2961119== Copyright (C) 2002-2022, and GNU GPL'd, by Julian Seward et al.

==2961119== Using Valgrind-3.22.0 and LibVEX; rerun with -h for copyright info

==2961119== Command: ./slow_app_fixed

==2961119==

Starting CPU-bound work...

Found 7837 primes.

Starting memory allocations...

Starting I/O-heavy writes...

Done.

==2961119==

==2961119== HEAP SUMMARY:

==2961119== in use at exit: 0 bytes in 0 blocks

==2961119== total heap usage: 5,001 allocs, 5,001 frees, 20,481,024 bytes allocated

==2961119==

==2961119== All heap blocks were freed -- no leaks are possible

Step 8: Map Findings to DigitalOcean Architecture Decisions

Performance profiling does more than fix code. The data you collect guides infrastructure decisions. Here is a framework for mapping profiling results to DigitalOcean product choices:

| Profiling Finding | Root Cause | Recommended DigitalOcean Action |
|---|---|---|
| perf shows sustained high CPU across all cores | Application is CPU-bound after code optimization | Resize to a larger CPU-Optimized Droplet or scale horizontally |
| bpftrace shows high disk I/O latency (>10ms p99) | Storage throughput limits on the root volume | Attach Block Storage Volumes with higher IOPS or use NVMe-backed Premium Droplets |
| valgrind shows growing heap in a database process | Application-level query inefficiency or connection bloat | Offload to DigitalOcean Managed Databases (PostgreSQL, MySQL, MongoDB) for connection pooling and automatic tuning |
| systemd-analyze shows >30s boot time | Heavy init scripts and service dependencies | Containerize services and deploy on DigitalOcean Kubernetes (DOKS) for faster cold starts and horizontal auto-scaling |
| Mixed CPU and I/O bottlenecks across multiple services | Monolithic architecture hitting single-server limits | Decompose into microservices on DOKS with per-service resource limits and Load Balancers |

Vertical vs. horizontal scaling: If profiling shows a single-threaded CPU bottleneck, vertical scaling (a bigger Droplet) helps immediately. If the workload parallelizes well, horizontal scaling with multiple smaller Droplets behind a load balancer is more cost-effective long-term. For a deeper comparison, read Horizontal Scaling vs Vertical Scaling.

Database offloading: If memory profiling shows your application spending most of its heap on database connection buffers or query caches, moving to a managed database eliminates that overhead. DigitalOcean Managed Databases handle connection pooling, automated backups, and failover. Learn more in Managed vs Self-Managed Databases.

For step-by-step resizing instructions, refer to the How to Resize Droplets for Vertical Scaling guide.

FAQs

1. What does the perf tool do in Linux?

perf is a performance profiling tool maintained in the Linux kernel source tree and built on the kernel's perf_events subsystem. It samples CPU activity at configurable intervals and records which functions, libraries, and kernel paths are executing. This lets you identify CPU hot paths (functions consuming the most processor time), measure hardware events like cache misses and branch mispredictions, and generate flame graphs for visual analysis. On DigitalOcean Droplets, perf is available through the linux-tools package matched to your running kernel version.

2. What is the difference between profiling and tracing?

Profiling collects statistical samples of what your system is doing at regular intervals. It answers “where does the CPU spend most of its time?” with low overhead, making it safe for production. Tracing captures every occurrence of a specific event, such as each disk I/O request, every system call, or each function entry. Tracing provides complete visibility but generates more data and carries higher overhead. perf is primarily a profiler, while bpftrace is a tracer. In practice, you start with profiling to identify the broad problem area, then use tracing to drill into the exact root cause.

3. How do you find CPU bottlenecks on a Linux server?

Start with top or htop to see which processes consume the most CPU. Then use perf record -F 99 -g -p <PID> to profile that specific process. Run perf report to see which functions dominate. If the bottleneck is in kernel space, use bpftrace to trace specific kernel functions or system calls. On DigitalOcean Droplets, you can also check the Monitoring dashboard for CPU utilization trends before diving into per-process profiling.

4. Can you run perf and bpftrace safely in production?

Yes, with precautions. perf at low sampling rates (49 to 99 Hz) typically adds very little overhead, often cited as under 1%, and is routinely used in production environments. bpftrace compiles scripts to eBPF programs that the kernel verifies before loading, rejecting code with unsafe memory access or unbounded loops, which guards against kernel crashes and data corruption. However, bpftrace scripts that trace high-frequency events (like every memory allocation) can add measurable overhead. Start with targeted one-liners, test on staging, and watch CPU usage with top while the trace runs. Both tools are used in production at companies like Netflix and Meta.

5. How do you detect memory leaks in Linux applications?

Run your application under Valgrind’s Memcheck tool with valgrind --leak-check=full ./your_app. Memcheck tracks every malloc and free call and reports allocations that are never freed when the program exits. For long-running services, use Valgrind’s Massif tool (--tool=massif) to capture heap usage over time, then visualize with ms_print. In production, where Valgrind’s slowdown is not acceptable, use bpftrace to count malloc calls per stack trace through a uprobe on libc, or trace kmalloc if you suspect kernel-side allocations.
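As a minimal sketch of how the Memcheck run could be automated, for example in a CI job, the Python helper below builds the same command and uses Valgrind’s --error-exitcode option to turn reported errors into a distinct exit status. The helper names and the exit code 99 are assumptions chosen for this example. Note that the status covers any Memcheck error, not only leaks.

```python
import shutil
import subprocess

# Assumption: 99 is an arbitrary status unlikely to clash with the
# application's own exit codes.
VALGRIND_ERROR_EXIT = 99


def memcheck_cmd(binary, *args):
    """Build a Memcheck command line for `binary` and its arguments."""
    return [
        "valgrind",
        "--leak-check=full",
        f"--error-exitcode={VALGRIND_ERROR_EXIT}",
        binary,
        *args,
    ]


def has_memcheck_errors(binary, *args):
    """Return True if Valgrind reported leaks or other Memcheck errors."""
    if shutil.which("valgrind") is None:
        raise RuntimeError("valgrind not found on PATH")
    result = subprocess.run(memcheck_cmd(binary, *args))
    return result.returncode == VALGRIND_ERROR_EXIT
```

A call such as has_memcheck_errors("./your_app") runs the program to completion under Valgrind, so expect it to take much longer than a normal run.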

Conclusion

Performance debugging is a skill that pays off at every stage of infrastructure growth. In this tutorial, you profiled an intentionally slow application on a DigitalOcean Droplet using four tools: systemd-analyze for boot timing, perf for CPU profiling, bpftrace for kernel and I/O tracing, and valgrind for memory leak detection. By applying targeted fixes based on profiling data, you achieved a roughly 40x speedup and eliminated all memory leaks.

The methodology here (profile first, fix the bottleneck, then re-measure) applies to any workload running on Linux. Whether you are debugging a Python web application, a Go microservice, or a C/C++ data pipeline, the same tools reveal where time and memory are spent. The profiling data also guides your architecture decisions: when to scale vertically, when to add storage, when to offload to managed services, and when to move to containers.

For a broader look at Linux kernel tuning and networking optimization, read Tune Up: Optimizing Linux Performance on DigitalOcean Community.

Next Steps

Ready to put these techniques to work on your own infrastructure? Here are some paths forward:

- Spin up a Premium CPU-Optimized Droplet and run this lab against your own application. Create a Droplet and start profiling today.

- Enable DigitalOcean Monitoring to track CPU, memory, and disk I/O metrics alongside your profiling data. Learn more about Monitoring Metrics.

- Explore Managed Databases to offload database tuning and connection management. Compare DigitalOcean Managed Database options.

- Deploy on DigitalOcean Kubernetes (DOKS) if profiling shows your workload benefits from horizontal scaling and containerized isolation. Get started with DOKS.

- Set up load balancing to distribute traffic across multiple Droplets when a single server hits its limits. Read An Introduction to HAProxy and Load Balancing Concepts.

Thanks for learning with the DigitalOcean Community. Check out our offerings for compute, storage, networking, and managed databases.

About the author

I help Businesses scale with AI x SEO x (authentic) Content that revives traffic and keeps leads flowing | 3,000,000+ Average monthly readers on Medium | Sr Technical Writer(Team Lead) @ DigitalOcean | Ex-Cloud Consultant @ AMEX | Ex-Site Reliability Engineer(DevOps)@Nutanix
