Recommended Security Measures to Protect Your Servers

Introduction

Most of the time, your main focus will be on getting your cloud applications up and running. As part of your setup and deployment process, however, it is important to build robust and thorough security measures into your systems and applications before they are publicly available. Implementing the security measures in this tutorial before deploying your applications helps ensure that any software you run on your infrastructure has a secure base configuration, as opposed to ad-hoc measures implemented after deployment.

This guide highlights some practical security measures that you can take while you are configuring and setting up your server infrastructure. The list is not exhaustive, but it offers a starting point that you can build upon. Over time, you can develop a more tailored security approach that suits the specific needs of your environments and applications.

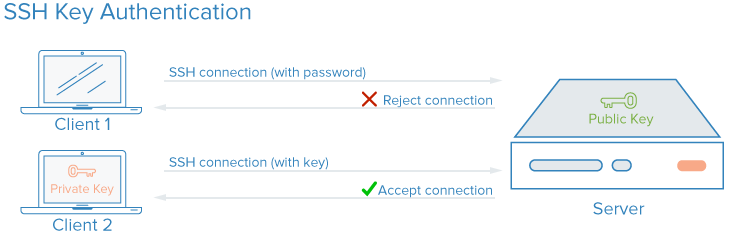

SSH Keys

SSH, or secure shell, is an encrypted protocol used to administer and communicate with servers. When working with a server, you’ll probably spend most of your time in a terminal session connected to your server through SSH. As an alternative to password-based logins, SSH keys use encryption to provide a secure way of logging into your server and are recommended for all users.

With SSH keys, a private and public key pair is created for the purpose of authentication. The private key is kept secret and secure by the user, while the public key can be shared freely. This approach is known as asymmetric, or public-key, cryptography, a pattern you will also see elsewhere.

To configure SSH key authentication, you need to put your public SSH key on the server in the expected location (usually ~/.ssh/authorized_keys). To learn more about how SSH-key-based authentication works, read Understanding the SSH Encryption and Connection Process.
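Placing the key is a small file operation, and it is easy to get the details wrong. The Python sketch below is for illustration only. It uses a hypothetical helper named `install_public_key`, assumes it runs as the account that will log in, and stands in for what `ssh-copy-id` normally does for you. It covers the two details that most often break key logins. First, each public key must sit on its own line in `authorized_keys`. Second, sshd's default `StrictModes` check rejects key files and directories that other users can write to.

```python
import os
from pathlib import Path


def install_public_key(home: Path, public_key: str) -> Path:
    """Append a public key to <home>/.ssh/authorized_keys if it is not already there.

    Hypothetical helper for illustration; `ssh-copy-id` performs this job in practice.
    """
    key_line = public_key.strip()
    if not key_line or "\n" in key_line:
        raise ValueError("expected exactly one public key line")

    ssh_dir = home / ".ssh"
    ssh_dir.mkdir(exist_ok=True)
    os.chmod(ssh_dir, 0o700)  # only the owner may read, write, or enter ~/.ssh

    auth_keys = ssh_dir / "authorized_keys"
    current = auth_keys.read_text() if auth_keys.exists() else ""
    if key_line not in (line.strip() for line in current.splitlines()):
        with open(auth_keys, "a") as f:
            if current and not current.endswith("\n"):
                f.write("\n")  # keep each key on its own line
            f.write(key_line + "\n")
    # 0600 keeps the file private; StrictModes rejects group- or world-writable files.
    os.chmod(auth_keys, 0o600)
    return auth_keys
```

Running the helper twice with the same key leaves a single entry. This keeps it safe to call from provisioning scripts that may run more than once.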

How Do SSH Keys Enhance Security?

With SSH, any kind of authentication, including password authentication, is completely encrypted. However, when password-based logins are allowed, malicious users can make repeated, automated attempts to access a server, especially if it has a public-facing IP address. There are ways to lock out access after multiple failed attempts from the same IP, and in practice attackers are limited by how rapidly they can attempt to log in to your server. Even so, any situation in which someone can plausibly gain access to your stack through repeated brute-force attempts poses a security risk.

Setting up SSH key authentication allows you to disable password-based authentication. SSH keys carry far more randomness than a typical password. An Ed25519 key or a 3072-bit RSA key offers roughly 128 bits of security. A 12-character password chosen completely at random from the 95 printable ASCII characters offers at most about 79 bits, and human-chosen passwords usually offer far fewer. This makes keys much more challenging to brute-force. Some older algorithms and shorter key sizes are nevertheless considered crackable given enough time on powerful enough hardware. Others, including the RSA and Ed25519 keys that modern SSH clients generate by default, are not currently plausible to crack.
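To put rough numbers on the gap between keys and passwords, the sketch below compares password entropy with the approximate security level of a modern SSH key. It rests on several assumptions:

- Passwords are chosen uniformly at random. Real, human-chosen passwords usually have much less entropy.
- Ed25519 and 3072-bit RSA keys offer about 128 bits of security, which is the commonly cited figure.
- The attacker guesses at a hypothetical, deliberately generous rate.

The function names are illustrative.

```python
import math

# Assumed figure: Ed25519 and 3072-bit RSA keys offer roughly 128 bits of security.
KEY_SECURITY_BITS = 128


def password_entropy_bits(length: int, alphabet_size: int) -> float:
    """Upper bound on entropy, assuming each character is chosen uniformly at random."""
    return length * math.log2(alphabet_size)


def years_to_exhaust(bits: float, guesses_per_second: float) -> float:
    """Worst-case time, in years, to try every candidate at a fixed guess rate."""
    seconds = 2.0 ** bits / guesses_per_second
    return seconds / (365.25 * 24 * 3600)


if __name__ == "__main__":
    rate = 1e12  # hypothetical guess rate, chosen only for illustration
    for label, bits in [
        ("8 lowercase letters", password_entropy_bits(8, 26)),
        ("12 printable ASCII characters", password_entropy_bits(12, 95)),
        ("SSH key (approximate)", KEY_SECURITY_BITS),
    ]:
        print(f"{label:32} {bits:6.1f} bits  {years_to_exhaust(bits, rate):.3g} years")
```

Each extra bit doubles the attacker's work. That is why the roughly 50-bit gap between a strong random password and a key matters far more than it might first appear.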

How to Implement SSH Keys

SSH keys are the recommended way to log into any Linux server environment remotely. A pair of SSH keys can be generated on your local machine using the ssh-keygen command, and you can then transfer the public key to a remote server, for example with ssh-copy-id.

To set up SSH keys on your server, you can follow How To Set Up SSH Keys for Ubuntu, Debian, or CentOS.

For any parts of your stack that require password access, or which are prone to brute force attacks, you can implement a solution like fail2ban on your servers to limit password guesses.
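The idea behind fail2ban can be illustrated with a small sliding-window model. This is not fail2ban's code. The class below is a hypothetical sketch that borrows the meaning of fail2ban's `maxretry`, `findtime`, and `bantime` settings: a source is banned for a fixed period after too many failures within a time window. It takes timestamps as arguments so the logic is easy to follow and test.

```python
from collections import defaultdict, deque


class FailureLimiter:
    """Ban a source IP after max_retry failures within find_time seconds (illustrative)."""

    def __init__(self, max_retry: int = 5, find_time: float = 600, ban_time: float = 600):
        self.max_retry = max_retry
        self.find_time = find_time
        self.ban_time = ban_time
        self._failures = defaultdict(deque)  # ip -> timestamps of recent failures
        self._banned_until = {}              # ip -> time the ban expires

    def is_banned(self, ip: str, now: float) -> bool:
        return self._banned_until.get(ip, float("-inf")) > now

    def record_failure(self, ip: str, now: float) -> bool:
        """Record a failed login at time `now`; return True if the IP is now banned."""
        if self.is_banned(ip, now):
            return True
        window = self._failures[ip]
        window.append(now)
        # Forget failures that have fallen out of the find_time window.
        while window and window[0] <= now - self.find_time:
            window.popleft()
        if len(window) >= self.max_retry:
            self._banned_until[ip] = now + self.ban_time
            window.clear()
            return True
        return False
```

Failures spread out over time never trigger a ban, while a rapid burst from one address does. This is why such tools slow automated guessing without locking out an occasional mistyped password.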

It is a best practice not to allow the root user to log in directly over SSH. Instead, log in as an unprivileged user and then escalate privileges as needed using a tool like sudo. This approach to limiting permissions is known as the principle of least privilege. Once you have connected to your server and created an unprivileged account that you have verified works with SSH, you can disable root logins by setting the PermitRootLogin no directive in /etc/ssh/sshd_config on your server and then restarting the server’s SSH process with a command like sudo systemctl restart sshd.
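Before restarting SSH, it is worth confirming that the directive actually takes effect. The authoritative checks are `sudo sshd -t`, which validates the file's syntax, and `sudo sshd -T`, which prints the effective configuration. As a rough offline illustration, the hypothetical helpers below apply two rules from the sshd_config(5) manual page: keywords are case-insensitive, and for each keyword the first value obtained is used.

This is a sketch rather than a full parser. It does not follow `Include` files and it stops at the first `Match` block. Many distributions' default files begin with an `Include` of a drop-in directory, and values there can take precedence over later lines in the main file.

```python
import re


def effective_sshd_options(config_text: str) -> dict:
    """Return {keyword: value} using sshd's "first obtained value wins" rule.

    Simplifications (assumptions of this sketch): Include directives are not
    followed, and parsing stops at the first Match block, because options
    inside a Match block apply only to matching connections.
    """
    options = {}
    for raw in config_text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # blank lines and lines starting with '#' are comments
        # The keyword and its arguments are separated by whitespace or an optional '='.
        parts = re.split(r"\s*=\s*|\s+", line, maxsplit=1)
        keyword = parts[0].lower()  # keywords are case-insensitive
        if keyword == "match":
            break
        options.setdefault(keyword, parts[1] if len(parts) > 1 else "")
    return options


def root_login_disabled(config_text: str) -> bool:
    """True only if PermitRootLogin is explicitly 'no' before any Match block."""
    return effective_sshd_options(config_text).get("permitrootlogin") == "no"
```

Because the first value wins, an earlier `PermitRootLogin yes` silently overrides a `PermitRootLogin no` added at the end of the file. This is a common reason a change appears not to work.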

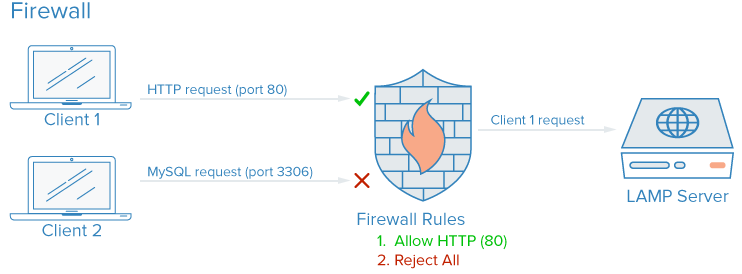

Firewalls

A firewall is a software or hardware device that controls how services are exposed to the network, and what types of traffic are allowed in and out of a given server or servers. A properly configured firewall will ensure that only services that should be publicly available can be reached from outside your servers or network.

On a typical server, a number of services may be running by default. These can be categorized into the following groups:

- Public services that can be accessed by anyone on the internet, often anonymously. An example of this is the web server that serves your actual website.

- Private services that should only be accessed by a select group of authorized accounts or from certain locations. For example, a database control panel like phpMyAdmin.

- Internal services that should be accessible only from within the server itself, without exposing the service to the public internet. For example, a database that should only accept local connections.

Firewalls can ensure that access to your software is restricted according to the categories above, with varying degrees of granularity. Public services can be left open and available to the internet, and private services can be restricted based on different criteria, such as source address or connection type. Internal services can be made completely inaccessible to the internet. For ports that are not being used, access is blocked entirely in most configurations.
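The three categories above can be modelled as a small default-deny rule table. The sketch below is illustrative Python, not the syntax of UFW, firewalld, or iptables. The ports, the hypothetical admin network `198.51.100.0/24`, and the rule layout are all assumptions. Public services accept any source, and private services accept only listed networks. Internal services get no rule at all, and ideally they also listen only on loopback. Anything unmatched is denied.

```python
import ipaddress
from typing import NamedTuple


class Rule(NamedTuple):
    port: int
    protocol: str
    sources: tuple  # networks allowed to connect


ANY = (ipaddress.ip_network("0.0.0.0/0"), ipaddress.ip_network("::/0"))
ADMIN_NET = (ipaddress.ip_network("198.51.100.0/24"),)  # hypothetical admin network

# Hypothetical rule set mirroring the public / private / internal categories.
RULES = [
    Rule(80, "tcp", ANY),          # public: web server
    Rule(443, "tcp", ANY),         # public: web server over TLS
    Rule(22, "tcp", ADMIN_NET),    # private: SSH from the admin network only
    Rule(8080, "tcp", ADMIN_NET),  # private: a database control panel
    # internal: no rule for the database port, so outside traffic never matches
]


def allowed(source_ip: str, port: int, protocol: str = "tcp") -> bool:
    """Return True if an incoming connection matches a rule; otherwise deny."""
    src = ipaddress.ip_address(source_ip)
    for rule in RULES:
        # Membership tests between IPv4 and IPv6 objects simply return False.
        if rule.port == port and rule.protocol == protocol and any(src in net for net in rule.sources):
            return True
    return False  # default policy: drop anything not explicitly allowed
```

Because the final `return False` is the default, a new service such as a database on port 5432 stays unreachable until someone deliberately adds a rule for it.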

How Do Firewalls Enhance Security?

Even if your services implement security features or are restricted to the interfaces you’d like them to run on, a firewall serves as a base layer of protection by limiting connections to and from your services before traffic is handled by an application.

A properly configured firewall will restrict access to everything except the specific services you need to remain open, usually by opening only the ports associated with those services. For example, SSH generally runs on port 22, and HTTP and HTTPS traffic from web browsers usually uses ports 80 and 443, respectively. Exposing only a few pieces of software reduces the attack surface of your server, limiting the components that are vulnerable to exploitation.

How to Implement Firewalls

There are many firewalls available for Linux systems, and some are more complex than others. In general, you should only need to make changes to your firewall configuration when you make changes to the services running on your server. Here are some options to get up and running:

- UFW, or Uncomplicated Firewall, is installed by default on some Linux distributions like Ubuntu. You can learn more about it in How To Set Up a Firewall with UFW on Ubuntu 20.04.

- If you are using Red Hat, Rocky, or Fedora Linux, you can read How To Set Up a Firewall Using firewalld to use their default tooling.

- Many software firewalls, such as UFW and firewalld, are front ends that translate their configured rules into iptables rules, which the Linux kernel uses to filter packets. To learn how to work with the iptables configuration directly, you can review Iptables Essentials: Common Firewall Rules and Commands. Note that some other software that implements port rules on its own, such as Docker, will also write directly to iptables and may conflict with the rules you create with UFW, so it’s helpful to know how to read an iptables configuration in cases like this.

Note: Many hosting providers, including DigitalOcean, will allow you to configure a firewall as a service which runs as an external layer over your cloud server(s), rather than needing to implement the firewall directly. These configurations, which are implemented at the network edge using managed tools, are often less complex in practice, but can be more challenging to script and replicate. You can refer to the documentation for DigitalOcean’s cloud firewall.

Be sure that your firewall configuration defaults to blocking unknown traffic. That way, any new services that you deploy will not be inadvertently exposed to the internet. Instead, you will have to allow access explicitly, which forces you to evaluate how a service is run, how it is accessed, and who should be able to use it.

VPC Networks

Virtual Private Cloud (VPC) networks are private networks for your infrastructure’s resources. VPC networks provide a more secure connection among resources because the network’s interfaces are inaccessible from the public internet.

How Do VPC Networks Enhance Security?

Some hosting providers will, by default, assign your cloud servers one public network interface and one private network interface. Disabling the public network interface on parts of your infrastructure means those instances can connect to each other only through their private network interfaces over an internal network. As a result, traffic among your systems is not routed through the public internet, where it could be exposed or intercepted.

By conditionally exposing only a few dedicated internet gateways, also known as ingress gateways, as the sole point of access between your VPC network’s resources and the public internet, you will have more control and visibility into the public traffic connecting to your resources. Modern container orchestration systems like Kubernetes have a very well-defined concept of ingress gateways, because they create many private network interfaces by default, which need to be exposed selectively.
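One way to confirm that only your designated gateways face the internet is to check every host's addresses against your VPC's address range. The sketch below makes several assumptions:

- A hypothetical inventory maps host names to the addresses their services are reachable on.
- The VPC range is a hypothetical `10.10.0.0/16`.
- The function name is illustrative.

```python
import ipaddress

VPC_RANGE = ipaddress.ip_network("10.10.0.0/16")  # hypothetical VPC CIDR


def exposed_hosts(inventory: dict, gateways: set) -> list:
    """Return sorted names of non-gateway hosts with any address outside the VPC range."""
    flagged = []
    for host, addresses in inventory.items():
        if host in gateways:
            continue  # designated ingress points are expected to have public addresses
        # An IPv6 address is never "in" an IPv4 network, so it is flagged as well.
        if any(ipaddress.ip_address(a) not in VPC_RANGE for a in addresses):
            flagged.append(host)
    return sorted(flagged)
```

A host that shows up in this list is reachable from outside the private network without passing through a gateway. It is a candidate for disabling its public interface.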

How to Implement VPC Networks

Many cloud infrastructure providers enable you to create and add resources to a VPC network inside their data centers.

Note: If you are using DigitalOcean and would like to set up your own VPC gateway, you can follow the How to Configure a Droplet as a VPC Gateway guide to learn how to do so on Debian, Ubuntu, and CentOS-based servers.

Manually configuring your own private network can require advanced server configurations and networking knowledge. An alternative to setting up a VPC network is to use a VPN connection between your servers.

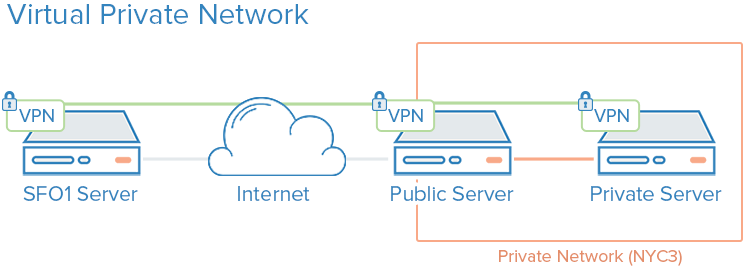

VPNs and Private Networking

A VPN, or virtual private network, is a way to create secure connections between remote computers and present the connection as if it were a local private network. This provides a way to configure your services as if they were on a private network and connect remote servers over secure connections.

For example, DigitalOcean private networks enable isolated communication between servers in the same account or team within the same region.

How Do VPNs Enhance Security?

Using a VPN is a way to map out a private network that only your servers can see. Traffic between them is encrypted, so it stays private even when it crosses the public internet. Other applications can be configured to pass their traffic over the virtual interface that the VPN software exposes. This way, only services that are meant to be used by clients on the public internet need to be exposed on the public network.

How to Implement VPNs

Using private networks usually requires you to make decisions about your network interfaces when first deploying your servers, and configuring your applications and firewall to prefer these interfaces. By comparison, deploying VPNs requires installing additional tools and creating additional network routes, but can typically be deployed on top of existing architecture. Each server on a VPN must have the shared security and configuration data needed to establish a VPN connection. After a VPN is up and running, applications must be configured to use the VPN tunnel.

If you are using Ubuntu, you can follow the How To Set Up and Configure an OpenVPN Server on Ubuntu 20.04 tutorial.

WireGuard is another popular VPN option. Generally, VPNs follow the same principle of limiting ingress to your cloud servers by implementing a series of private network interfaces behind a few entry points. However, whereas VPC configurations are usually a core infrastructure consideration, VPNs can be deployed on a more ad-hoc basis.
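To show what the shared security and configuration data for each VPN server looks like in practice, the sketch below renders a WireGuard configuration for one server from a hypothetical inventory. The section and key names follow the wg-quick configuration format: `[Interface]`, `PrivateKey`, `Address`, `ListenPort`, `[Peer]`, `PublicKey`, `AllowedIPs`, and `Endpoint`. The host names, the 10.8.0.0/24 tunnel addresses, the port, and the key placeholders are assumptions. Real keys would come from `wg genkey` and `wg pubkey`, and each private key should stay on its own server.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    tunnel_ip: str   # address on the VPN, inside a hypothetical 10.8.0.0/24
    endpoint: str    # public host:port that other peers use to reach this node
    public_key: str  # placeholder; a real key comes from `wg pubkey`


def render_wg_config(me: Node, private_key: str, nodes: list) -> str:
    """Render a wg-quick style config for `me`, listing every other node as a peer."""
    port = me.endpoint.rsplit(":", 1)[1]
    lines = [
        "[Interface]",
        f"PrivateKey = {private_key}",
        f"Address = {me.tunnel_ip}/24",  # the /24 tunnel subnet is an assumption
        f"ListenPort = {port}",
    ]
    for peer in nodes:
        if peer.name == me.name:
            continue
        lines += [
            "",
            "[Peer]",
            f"PublicKey = {peer.public_key}",
            f"AllowedIPs = {peer.tunnel_ip}/32",  # route only this peer's tunnel address to it
            f"Endpoint = {peer.endpoint}",
        ]
    return "\n".join(lines) + "\n"
```

Each server's file contains its own private key plus every other server's public key. That is why adding a node to a VPN means updating the configuration on all existing members.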

Service Auditing

Good security involves analyzing your systems, understanding the available attack surfaces, and locking down the components as best as you can.

Service auditing is a way of knowing what services are running on a given system, which ports they are using for communication, and which protocols those services are speaking. This information helps you decide which services should be publicly accessible and how to configure firewall settings, monitoring, and alerting.

How Does Service Auditing Enhance Security?

Each running service, whether it is intended to be internal or public, represents an expanded attack surface for malicious users. The more services that you have running, the greater the chance of a vulnerability affecting your software.

Once you have a good idea of what network services are running on your machine, you can begin to analyze these services. When you perform a service audit, ask yourself the following questions about each running service:

- Should this service be running?

- Is the service running on network interfaces that it shouldn’t be running on?

- Should the service be bound to a public or private network interface?

- Are my firewall rules structured to pass legitimate traffic to this service?

- Are my firewall rules blocking traffic that is not legitimate?

- Do I have a method of receiving security alerts about vulnerabilities for each of these services?

This type of service audit should be standard practice when configuring any new server in your infrastructure. Performing service audits every few months will also help you catch any services with configurations that may have changed unintentionally.
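A repeated audit is most useful when you compare it with the previous one. As a minimal sketch, assume you record each audit as a set of (protocol, local address, port, process) tuples, for example taken from `ss` output. A set difference then shows what appeared or disappeared since the last review. The `Listener` type and `audit_diff` function are hypothetical names.

```python
from typing import NamedTuple


class Listener(NamedTuple):
    protocol: str
    address: str
    port: int
    process: str


def audit_diff(previous: set, current: set) -> dict:
    """Compare two audit snapshots and report listeners that were added or removed."""
    return {
        "new": sorted(current - previous),
        "removed": sorted(previous - current),
    }


if __name__ == "__main__":
    last_review = {Listener("tcp", "0.0.0.0", 22, "sshd")}
    today = {
        Listener("tcp", "0.0.0.0", 22, "sshd"),
        Listener("tcp", "0.0.0.0", 6379, "redis-server"),  # hypothetical unexpected service
    }
    print(audit_diff(last_review, today))
```

Any entry under "new" deserves the questions listed above, especially when it is bound to all interfaces.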

How to Implement Service Auditing

To audit network services that are running on your system, use the ss command to list all the TCP and UDP ports that are in use on a server. An example command that shows the program name, PID, and addresses being used for listening for TCP and UDP traffic is:

- sudo ss -plunt

The p, l, u, n, and t options work as follows:

- p shows the specific process using a given socket.

- l shows only sockets that are actively listening for connections.

- u includes UDP sockets (in addition to TCP sockets).

- n shows numeric addresses and ports instead of resolving host and service names.

- t includes TCP sockets (in addition to UDP sockets).

You will receive output similar to this:

Output
Netid State Recv-Q Send-Q Local Address:Port Peer Address:Port Process
tcp LISTEN 0 128 0.0.0.0:22 0.0.0.0:* users:(("sshd",pid=812,fd=3))
tcp LISTEN 0 511 0.0.0.0:80 0.0.0.0:* users:(("nginx",pid=69226,fd=6),("nginx",pid=69225,fd=6))
tcp LISTEN 0 128 [::]:22 [::]:* users:(("sshd",pid=812,fd=4))
tcp LISTEN 0 511 [::]:80 [::]:* users:(("nginx",pid=69226,fd=7),("nginx",pid=69225,fd=7))

The main columns that need your attention are the Netid, Local Address:Port, and Process columns. If the address in the Local Address:Port column is 0.0.0.0, the service is accepting connections on all IPv4 network interfaces. If the address is [::], the service is accepting connections on all IPv6 interfaces. In the example output above, SSH and Nginx are both listening on all interfaces, on both the IPv4 and IPv6 networking stacks.

You can decide whether SSH and Nginx should listen on both IPv4 and IPv6 interfaces, or only on one or the other. Generally, you should disable services that are running on unused interfaces.
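To apply this check across many servers, you might parse the `ss -plunt` output instead of reading it by eye. The sketch below is a hypothetical helper that assumes the column layout shown in the example output (Netid, State, Recv-Q, Send-Q, Local Address:Port, Peer Address:Port, Process). It flags sockets bound to 0.0.0.0, [::], or `*`, all of which mean every interface. Output formats can vary between `ss` versions, so verify it against your own systems.

```python
import re

WILDCARDS = ("0.0.0.0", "[::]", "*")


def wildcard_listeners(ss_output: str) -> list:
    """Return (netid, local address:port, process names) for sockets bound to all interfaces."""
    results = []
    for line in ss_output.splitlines():
        fields = line.split()
        # Skip the header and blank or short lines; data rows have at least 6 columns.
        if len(fields) < 6 or fields[0] == "Netid":
            continue
        netid, local = fields[0], fields[4]
        process = fields[6] if len(fields) > 6 else ""
        address = local.rsplit(":", 1)[0]  # strip the port, keeping [::] intact
        if address in WILDCARDS:
            # The Process column looks like users:(("nginx",pid=1,fd=6),...)
            names = sorted(set(re.findall(r'\("([^"]+)"', process)))
            results.append((netid, local, names))
    return results
```

Each result is a service to question. Either it really needs to accept connections everywhere, or it should be rebound to a specific address such as 127.0.0.1 or a private interface.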

Unattended Updates

Keeping your servers up to date with patches is necessary to ensure a good base level of security. Servers that run out-of-date, insecure versions of software are responsible for a large share of security incidents, and regular updates can mitigate vulnerabilities and prevent attackers from gaining a foothold on your servers. Unattended updates allow the system to update most packages automatically.

How Do Unattended Updates Enhance Security?

Implementing unattended (automatic) updates lowers the effort required to keep your servers secure and shortens the time that your servers may be vulnerable to known bugs. When a vulnerability affects software on your servers, they remain vulnerable for however long it takes you to run updates. Daily unattended upgrades help ensure that you don’t miss updates and that vulnerable software is patched soon after fixes become available.

How To Implement Unattended Updates

You can refer to How to Keep Ubuntu Servers Updated for an overview of implementing unattended updates on Ubuntu.
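On Ubuntu and Debian, two settings in /etc/apt/apt.conf.d/20auto-upgrades typically switch the unattended-upgrades package on: `APT::Periodic::Update-Package-Lists "1";` and `APT::Periodic::Unattended-Upgrade "1";`. The values are intervals in days, so "1" means daily and "0" disables the task. The hypothetical checker below reads that file's text and reports whether both tasks are enabled. It handles only this simple one-setting-per-line form, not the full APT configuration syntax.

```python
import re

REQUIRED = ("APT::Periodic::Update-Package-Lists", "APT::Periodic::Unattended-Upgrade")


def periodic_settings(conf_text: str) -> dict:
    """Parse lines like: APT::Periodic::Unattended-Upgrade "1";"""
    settings = {}
    for line in conf_text.splitlines():
        # Commented lines (starting with // or #) do not match and are ignored.
        match = re.match(r'\s*([A-Za-z0-9:_-]+)\s+"([^"]*)"\s*;', line)
        if match:
            settings[match.group(1)] = match.group(2)  # later lines override earlier ones
    return settings


def unattended_updates_enabled(conf_text: str) -> bool:
    """True if both periodic tasks are set to a non-zero number of days."""
    settings = periodic_settings(conf_text)
    for key in REQUIRED:
        value = settings.get(key, "0")
        if not value.isdigit() or int(value) == 0:
            return False
    return True
```

A check like this suits a fleet-wide audit script. On a single server, reading the file or running `sudo dpkg-reconfigure unattended-upgrades` is simpler.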

Public Key Infrastructure and SSL/TLS Encryption

Public key infrastructure, or PKI, refers to a system that is designed to create, manage, and validate certificates for identifying individuals and encrypting communication. SSL or TLS certificates can be used to authenticate different entities to one another. After authentication, they can also be used to establish encrypted communication.

How Does PKI Enhance Security?

Establishing a certificate authority (CA) and managing certificates for your servers allows each entity within your infrastructure to validate the other members’ identities and encrypt their traffic. This can prevent man-in-the-middle attacks where an attacker imitates a server in your infrastructure to intercept traffic.

Each server can be configured to trust a centralized certificate authority. Afterward, any certificate signed by this authority can be implicitly trusted.
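In client code, trusting a centralized certificate authority usually means verifying peers against your own CA certificate rather than the operating system's public trust store. The sketch below uses Python's standard `ssl` module. The function name is hypothetical, and the TLS 1.2 minimum is an assumed policy choice.

```python
import ssl
from typing import Optional


def internal_client_context(ca_file: Optional[str] = None) -> ssl.SSLContext:
    """Build a TLS client context that trusts only the given CA file.

    PROTOCOL_TLS_CLIENT enables certificate verification and hostname
    checking by default, and a bare SSLContext does not load the system
    trust store, so only certificates chaining to ca_file will validate.
    """
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # refuse older protocol versions
    if ca_file is not None:
        ctx.load_verify_locations(cafile=ca_file)
    return ctx
```

To use it, pass a CA file path (a hypothetical example is /etc/ssl/internal-ca.pem) and wrap a connected socket with `ctx.wrap_socket(sock, server_hostname="api.internal.example")`, where the host name is also hypothetical. The handshake then fails with `ssl.SSLCertVerificationError` if the server's certificate was not issued by your CA or does not match the host name. That check is what blocks the impersonation attacks described above.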

How To Implement PKI

Configuring a certificate authority and setting up the rest of the public key infrastructure can involve quite a bit of initial effort. Furthermore, managing certificates creates an additional administrative burden whenever new certificates need to be created, signed, or revoked.
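Part of that administrative burden is noticing certificates before they expire. Python's `ssl.cert_time_to_seconds()` converts the `notAfter` timestamp format used in certificates, for example as returned by `SSLSocket.getpeercert()`, into seconds since the epoch. The sketch below builds on that function; the 30-day renewal threshold and the helper names are assumptions.

```python
import ssl
import time
from typing import Optional

SECONDS_PER_DAY = 86400


def days_until_expiry(not_after: str, now: Optional[float] = None) -> float:
    """Days remaining before a certificate's notAfter time (negative once expired)."""
    if now is None:
        now = time.time()
    return (ssl.cert_time_to_seconds(not_after) - now) / SECONDS_PER_DAY


def needs_renewal(not_after: str, threshold_days: float = 30, now: Optional[float] = None) -> bool:
    """Hypothetical policy: renew once fewer than threshold_days remain."""
    return days_until_expiry(not_after, now) < threshold_days
```

Running a check like this regularly across your certificates helps turn expiry from a surprise outage into a scheduled task.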

For many users, implementing a full-fledged public key infrastructure will only make sense as their infrastructure needs grow. Securing communications between components using a VPN may be a better intermediate measure until you reach a point where PKI is worth the extra administration costs.

If you would like to create your own certificate authority, you can refer to the How To Set Up and Configure a Certificate Authority (CA) guides depending on the Linux distribution that you are using.

Conclusion

The strategies outlined in this tutorial are an overview of some of the steps that you can take to improve the security of your systems. It is important to recognize that security measures decrease in their effectiveness the longer you wait to implement them. Security should not be an afterthought and must be implemented when you first provision your infrastructure. Once you have a secure base to build upon, you can then start deploying your services and applications with some assurances that they are running in a secure environment by default.

Even with a secure starting environment, keep in mind that security is an ongoing and iterative process. Always be sure to ask yourself what the security implications of any change might be, and what steps you can take to ensure that you are always creating secure default configurations and environments for your software.

Tutorial Series: Getting Started With Cloud Computing

This curriculum introduces open-source cloud computing to a general audience along with the skills necessary to deploy applications and websites securely to the cloud.
